Generative UI design is the discipline of building interfaces that an AI model assembles at runtime from data, user intent, and allowed components, rather than interfaces a designer specifies screen by screen in advance. In 2026, enterprise teams are shipping this in production at Google and Vercel and inside LLM-powered dashboards. The design work that determines whether it succeeds sits almost entirely in constraints, fallbacks, and component contracts rather than in the output itself.

What generative UI design actually is

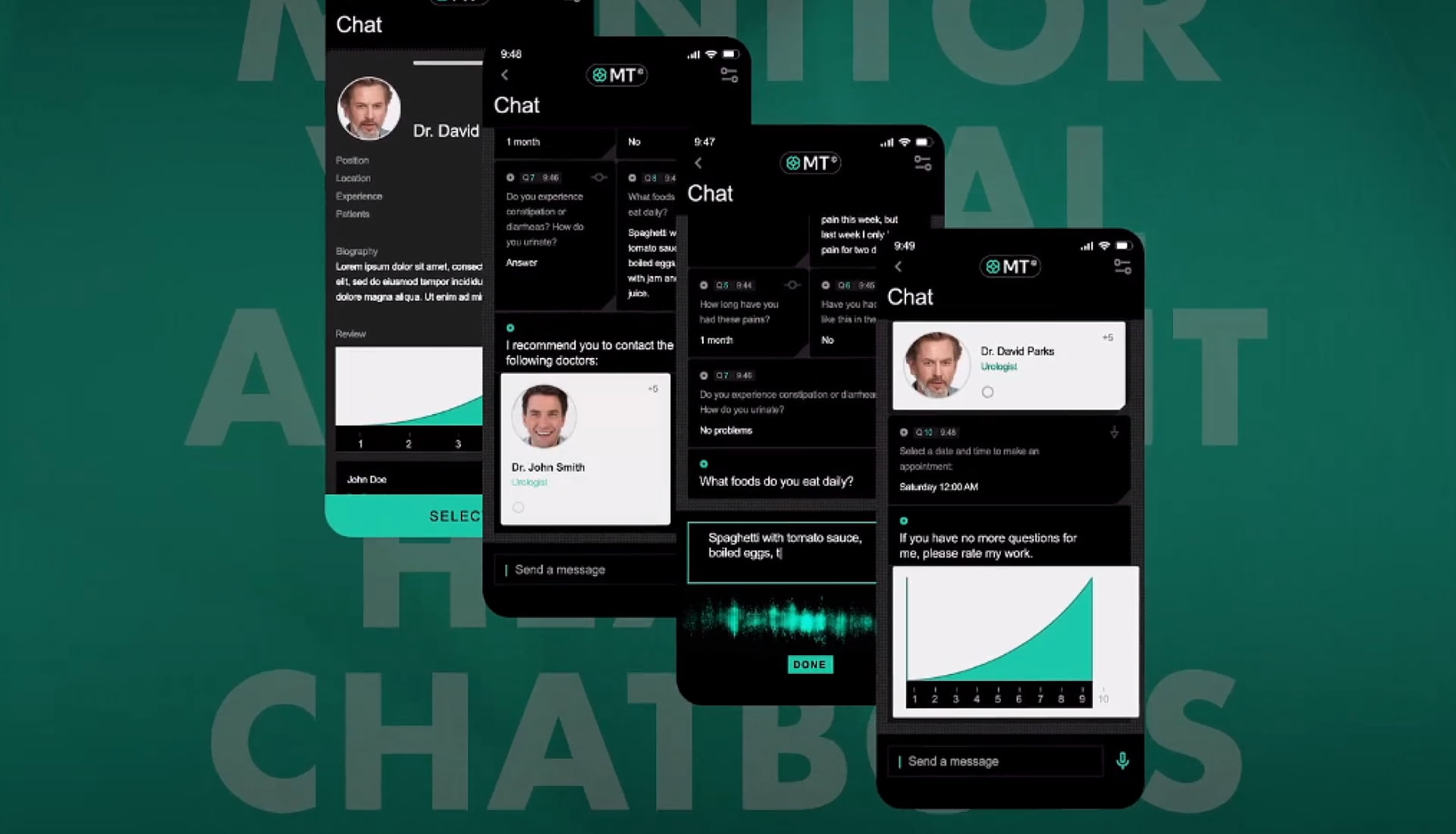

Unlike a traditional product where every screen is designed and shipped in advance, a generative UI product is built around a component library and a set of constraints, and the interface itself assembles at runtime. The pattern is already in production inside consumer AI assistants, adaptive dashboards, and LLM-powered internal tools.

The distinction buyers most often miss: generative UI is not AI-assisted design.

AI-assisted design uses tools like v0, Claude Artifacts, or Uizard to speed up the work a designer does in advance. Generative UI inverts that pattern: the designer's work moves up front into constraints and components, and the model composes the interface at runtime. The two disciplines require different deliverables, team structures, and QA methods.

Full generative UI design program

The complete program for teams building a generative UI feature from scratch. Typically 12 to 20 weeks across all four phases: constraint discovery, component and schema design, fallback work, and the initial evaluation prompt set. Best for teams with a defined use case, committed engineering, and authority to change direction if discovery surfaces blockers.

Structural design only

Some product teams arrive with strong engineering in place but no component library or constraint document to build against. This engagement covers that gap directly. Typically 6 to 10 weeks. Fuselab hands off a documented component library, schema spec, and constraint document. Best for mature engineering teams that want design specialists only where design genuinely matters.

Readiness audit for shipped products

A focused diagnostic for products already in production. Fuselab audits the component library for over- or under-constraint, identifies missing fallback paths, surfaces gaps in the evaluation set, and checks regulatory compliance. The deliverable is a prioritized remediation plan with pricing for each gap.

Advisory retainer

Retainer work for products that have shipped and keep evolving. Monthly advisory on component library evolution, new failure-mode responses, and evaluation set updates as the product and the underlying models change. Typically 10 to 20 hours per month, often continuing for a year or more as the feature matures.

Industries where generative UI earns its keep

A dispatcher’s screen needs to reshape itself around whichever event actually happens: a delay alert produces a rerouting card, a customs hold produces a compliance checklist, a normal run produces the status panel. That is generative UI in a dispatch context, and it is where the pattern earns its keep. The design work concentrates on the rules that decide which surface belongs to which event. Data accuracy is the non-negotiable rule. A generated view that misstates a delivery window by fifteen minutes is worse than no view at all.
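The rules that decide which surface belongs to which event can be sketched as a plain lookup table. This is a minimal illustration rather than a production design: the event kinds, surface names, and the `surfaceFor` function are all hypothetical, chosen only to mirror the dispatch example above.

```typescript
// Hypothetical event shapes for a dispatch product.
type DispatchEvent =
  | { kind: "delay"; shipmentId: string; minutesLate: number }
  | { kind: "customs_hold"; shipmentId: string }
  | { kind: "normal"; shipmentId: string };

// Hypothetical surface names; each would map to a component in the library.
type Surface = "rerouting_card" | "compliance_checklist" | "status_panel";

// The rule table is authored by the design team, not chosen by the model.
const SURFACE_RULES: Record<DispatchEvent["kind"], Surface> = {
  delay: "rerouting_card",
  customs_hold: "compliance_checklist",
  normal: "status_panel",
};

// Resolve the surface for an incoming event.
function surfaceFor(event: DispatchEvent): Surface {
  return SURFACE_RULES[event.kind];
}

console.log(surfaceFor({ kind: "delay", shipmentId: "S-1", minutesLate: 15 }));
// prints rerouting_card
```

Keeping the mapping in a typed table means adding a new event kind without a matching surface fails at compile time instead of at the dispatcher's desk.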

A generated interface in a clinical product cannot drop information, misrepresent a dosage, or surface a recommendation without its confidence context. No exceptions. The design work concentrates on the validation layer every rendered output must pass, and on the fallback templates the system reverts to the moment validation fails. The constraint set on a healthcare generative UI project typically runs two to three times larger than on a consumer equivalent, and the evaluation prompt set is tested against every model change, not just at launch.
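A validation layer with a fallback path might look roughly like the sketch below. It assumes a hypothetical `MedicationCard` shape and a hypothetical source record to compare against. A real clinical product would carry far more rules, but the control flow is the point: every generated output is checked, and any violation routes to the fallback template.

```typescript
// Hypothetical shape of a model-proposed card.
interface MedicationCard {
  drug: string;
  doseMg: number;
  recommendation?: string;
  confidence?: number; // 0..1, required whenever a recommendation is shown
}

interface SourceRecord {
  drug: string;
  doseMg: number;
}

type RenderDecision =
  | { mode: "generated"; card: MedicationCard }
  | { mode: "fallback"; reasons: string[] };

// Compare the proposed card with the source record and collect every violation.
function validate(card: MedicationCard, source: SourceRecord): string[] {
  const reasons: string[] = [];
  if (card.drug !== source.drug) reasons.push("drug name differs from source record");
  if (card.doseMg !== source.doseMg) reasons.push("dosage differs from source record");
  if (card.recommendation !== undefined && card.confidence === undefined) {
    reasons.push("recommendation shown without confidence context");
  }
  return reasons;
}

// Render the generated card only when validation finds nothing; otherwise fall back.
function decide(card: MedicationCard, source: SourceRecord): RenderDecision {
  const reasons = validate(card, source);
  return reasons.length === 0 ? { mode: "generated", card } : { mode: "fallback", reasons };
}
```

Returning the reasons alongside the fallback decision gives the evaluation set something concrete to assert against after each model change.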

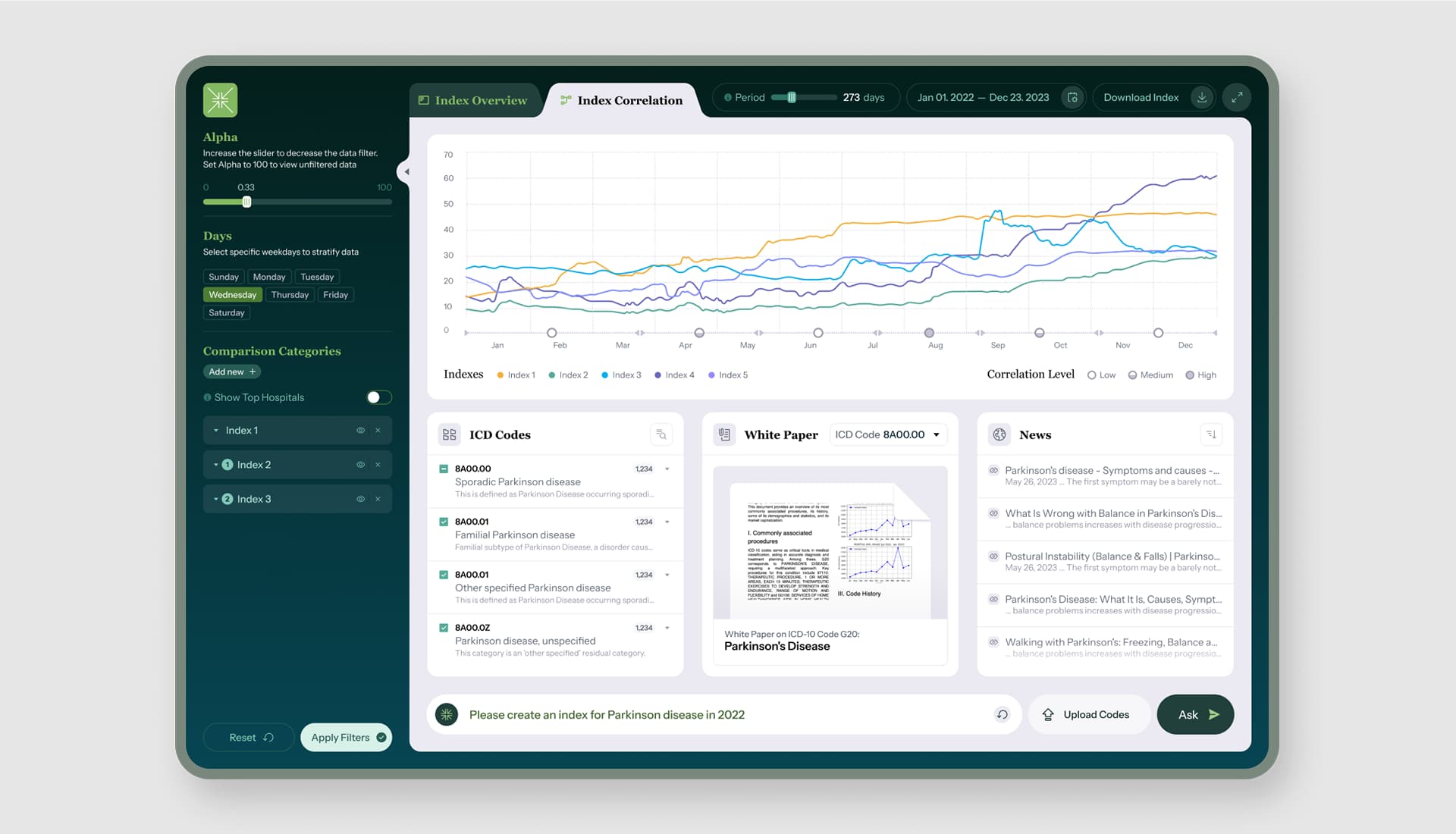

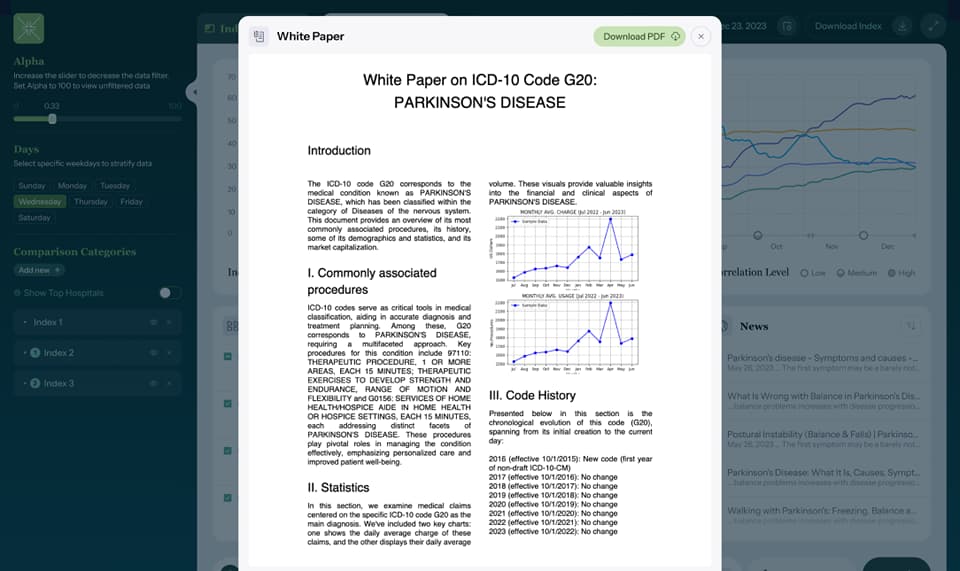

Finance runs on numbers, but not every stakeholder reads a page of figures easily, and visualization closes that gap. Building graphs and charts by hand takes employees away from higher-value work. An AI solution built with Fuselab, such as generative shape design, takes care of these tasks and allows your employees to apply their talents in more productive ways.

Records integrity is the constraint that separates public-sector generative UI from almost every other category. Every screen a user sees often needs to be reproducible after the fact for audit, FOIA, or compliance review. That pushes the design work toward narrow generative components and deterministic rendering paths, not open-ended output. The design team defines what is allowed to vary across sessions and what must remain identical. Fuselab holds a GSA contract for this class of work, which means federal and state teams can engage directly without a competitive bidding process.
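One way to make a rendered screen reproducible after the fact is to store a canonical form of what was shown. The sketch below is an assumption about how that could be done, not a description of any specific records system. The `canonicalize` and `recordRender` names are hypothetical, and the only technique illustrated is key-sorted serialization, so that two equal render specs always produce the same stored string.

```typescript
type Json = string | number | boolean | null | Json[] | { [key: string]: Json };

// Serialize with sorted object keys so equal specs always yield the same string.
function canonicalize(value: Json): string {
  if (value === null || typeof value !== "object") return JSON.stringify(value);
  if (Array.isArray(value)) return "[" + value.map((v) => canonicalize(v)).join(",") + "]";
  const keys = Object.keys(value).sort();
  return "{" + keys.map((k) => JSON.stringify(k) + ":" + canonicalize(value[k] as Json)).join(",") + "}";
}

// Hypothetical audit record for one rendered screen.
interface RenderRecord {
  sessionId: string;
  renderedAt: string; // ISO 8601 timestamp supplied by the caller
  spec: string;       // canonical form of what was shown
}

function recordRender(sessionId: string, renderedAt: string, spec: Json): RenderRecord {
  return { sessionId, renderedAt, spec: canonicalize(spec) };
}
```

Replaying the stored spec through a deterministic rendering path is what lets the same screen be reconstructed for a later review.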

Product detail pages that rearrange around a shopper’s stated intent, search results that compose themselves around the specific query, merchandising surfaces that shift by customer segment: these are all generative UI patterns in production in retail right now, and most buyers do not recognize them as generative UI. The design work here concentrates on two things. One, brand compliance, because a generated layout cannot break brand guidelines on a sale page. Two, conversion-path integrity, because no generated variant can ever block a purchase. Fallback to a known-good template is the rule the product cannot violate.

Lab informatics products and clinical trial dashboards combine heavy regulatory load with unusual data complexity. A generated view in either context cannot misrepresent sample identity, dosage, or trial arm assignment under any circumstance. The design work concentrates on the schema-to-UI boundary: which parts of the interface should be rendered from structured data with zero generative content, and which parts can be composed by the model within a narrow allowed range. Getting that line wrong is the single most common reason biotech generative UI projects fail after launch.
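The schema-to-UI boundary can be expressed as an explicit per-field policy. The following is a minimal sketch under stated assumptions: the field names, the `FIELD_POLICY` table, and the `assembleView` function are hypothetical, and real products would validate composable content further before display.

```typescript
// "structured" fields are copied verbatim from the record;
// "composable" fields may be written by the model.
type Policy = "structured" | "composable";

// Hypothetical policy table for a trial dashboard.
const FIELD_POLICY: Record<string, Policy> = {
  sampleId: "structured",
  doseMg: "structured",
  trialArm: "structured",
  summary: "composable",
};

type Fields = Record<string, string>;

// Build the view: model output is accepted only for composable fields,
// and structured fields always come from the record.
function assembleView(record: Fields, modelOutput: Fields): Fields {
  const view: Fields = {};
  for (const [field, policy] of Object.entries(FIELD_POLICY)) {
    const source = policy === "structured" ? record : modelOutput;
    const value = source[field];
    if (value !== undefined) view[field] = value;
  }
  return view;
}
```

Because structured fields are never read from the model output, a model that tries to restate a sample ID or trial arm has no path to the screen.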

Frequently Asked Questions

What is generative UI design?

Generative UI design is the practice of building interfaces that an AI model assembles at runtime from data, user intent, and a defined component library, rather than interfaces a designer specifies screen by screen in advance. The design work shifts from producing mockups to producing the constraint set the model operates within: component contracts, validation rules, and fallback templates.

What does a generative UI project actually deliver?

A generative UI project delivers a constraint set rather than a screen set. The core deliverables are the component library the model is allowed to render from, the design tokens and layout rules, the accessibility and brand compliance floor, the fallback templates for when validation fails, and the evaluation prompt set used to QA the system. Screen mockups exist only for the fallback states.
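An evaluation prompt set can be thought of as a list of prompts paired with expectations about which components may appear. The sketch below assumes a hypothetical `Generator` function standing in for the real system under test, and hypothetical `EvalCase` fields. It shows the shape of the QA loop, not a complete harness.

```typescript
// Hypothetical evaluation case: a prompt plus the components
// the output must and must not contain.
interface EvalCase {
  prompt: string;
  mustInclude: string[];
  mustExclude: string[];
}

// Stand-in for the system under test: maps a prompt to the names
// of the components it rendered.
type Generator = (prompt: string) => string[];

interface EvalResult {
  prompt: string;
  passed: boolean;
  problems: string[];
}

// Run every case and report missing or forbidden components.
function runEvalSet(cases: EvalCase[], generate: Generator): EvalResult[] {
  return cases.map((c) => {
    const rendered = new Set(generate(c.prompt));
    const problems = [
      ...c.mustInclude.filter((name) => !rendered.has(name)).map((name) => `missing ${name}`),
      ...c.mustExclude.filter((name) => rendered.has(name)).map((name) => `forbidden ${name}`),
    ];
    return { prompt: c.prompt, passed: problems.length === 0, problems };
  });
}
```

Running the same set against each new model version turns "the output looks fine" into a pass or fail list a team can track over time.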

How is generative UI different from AI-assisted design?

Generative UI refers to interfaces composed by an AI model at the moment of user interaction. AI-assisted design refers to the use of tools like Vercel v0, Claude Artifacts, or Figma AI to speed up the work a design team does in advance, with the final product shipping as a conventionally specified interface. A team can do either discipline without the other, and the deliverables and QA methods differ.

How is generative UI different from personalization or adaptive UI?

Generative UI composes interface components at runtime from model output, which means the exact layout and content reaching each user may never have existed before. Personalization and adaptive UI select from pre-built variants based on rules or machine learning signals, where every possible state was designed in advance. The distinction matters because generative UI requires validation and fallback infrastructure that rule-based adaptive systems do not.

How much does a generative UI design engagement cost?

Generative UI engagements with Fuselab typically run $75,000 to $200,000 for a full design phase, with hourly rates from $100 to $150 depending on scope. The cost driver is component library depth and the regulatory compliance burden, not the number of screens. Healthcare and government projects sit at the higher end because the constraint set is larger and evaluation runs continuously against every model change.

How long does a generative UI project take?

Generative UI projects typically run 12 to 20 weeks from kickoff to first production release. Constraint discovery and component library work concentrate in the first half of the timeline, with fallback design and evaluation running in parallel with engineering in the second half. Shorter projects usually skip the evaluation layer, which is the single most common reason generative UI products fail after launch.

What should a product team have in place before starting a generative UI project?

A product team needs four things before a generative UI engagement begins: a use case narrower than “use AI somewhere,” a defined user role or set of roles the interface must serve, a development team familiar with streaming LLM output, and a decision on which model family will be used. Teams missing any of these four rarely ship, because the constraint work has no anchor without them. Exploration-stage teams should start with a scoped prototype before committing to a full engagement.

Read Our Blog