The Future of AI-Constructed Design

Is machine learning ready to take adaptive user interfaces into The Future?

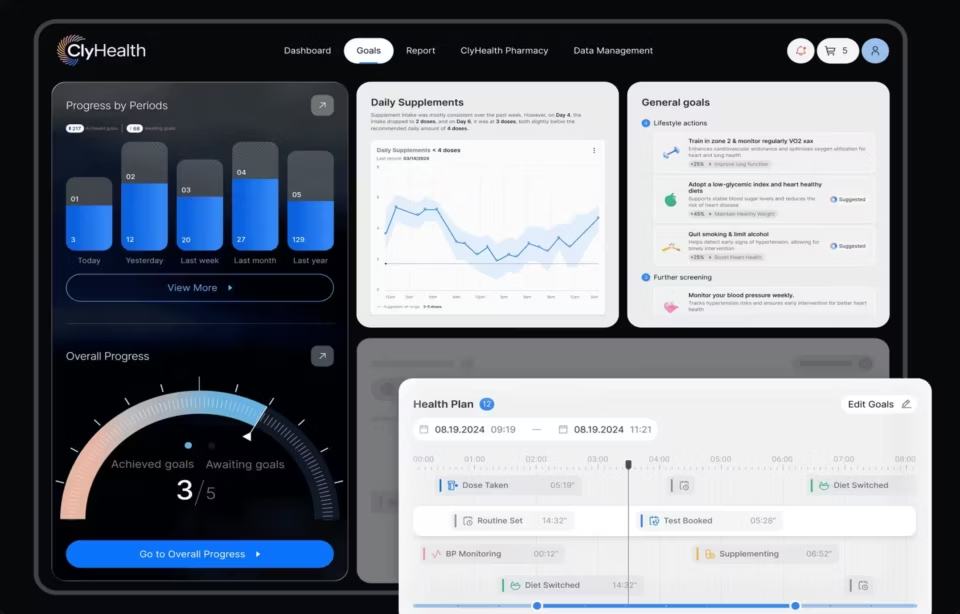

Adaptive UI design is the practice of building interfaces that use machine learning to reorganize themselves based on how individual users behave, surfacing the most likely next action and filtering content to match a person’s context without manual configuration. In 2026, generative AI pushed adaptive interfaces into every enterprise product conversation, and predictability, the oldest question in the field, became a regulatory matter under the EU AI Act rather than only a UX concern.

The idea is not new. Recommendation engines, predictive text, and personalized feeds have existed for two decades. What changed is scope. Where adaptive UI once meant Netflix guessing which thumbnail to show, it now means generative copilots writing, analyzing, and acting on a user’s behalf inside enterprise software, with every output shaped by prior interactions the user may not remember and cannot always see.

This scale shift surfaced a design tension that was easy to dismiss in the recommendation era. When an adaptive interface gets something subtly wrong, the user has no way to know why, and no way to correct the underlying model. The stakes were low when the output was a movie suggestion. They are not low when the output is a clinical recommendation, a legal summary, or a loan decision.

Screens remain the primary entry point into AI-driven environments. As enterprise AI adoption accelerates through 2026 and 2027, the data collected right now about how users interact with adaptive systems, what they accept, what they override, what they abandon, will define how the next generation of interfaces is built. Adaptive UI powered by machine learning is the scaffolding underneath all of it.

What is adaptive UI design?

Adaptive design refers to interfaces whose layout, content, or available commands change in response to observed user behavior rather than being configured manually. The machine learning layer watches how a user interacts with the interface, builds a pattern from those interactions, and reorganizes what the interface surfaces so the most likely useful elements appear first while rarely used elements recede.

An established example is NX, the Siemens PLM CAD program, which uses machine learning to predict which commands an engineer is likely to need next based on the sequence of actions taken in the current session. More recently, agentic coding interfaces such as GitHub Copilot and Cursor extend this pattern to entire development workflows, adjusting not only which command appears first but what an entire panel of tools looks like based on the task the engineer is working on.

A rough schematic model of a machine-learning-based AUI might look like this:

It is useful to think of adaptive user interfaces (AUIs) as a more sophisticated version of a command-based digital assistant like Alexa. Adaptive UI thinks, learns, and anticipates. It surfaces what a user needs before they articulate it, in the same way a seasoned assistant knows what a longtime manager wants before they ask. The difference is scale, because a single machine learning layer can anticipate for millions of users simultaneously while a human assistant can only anticipate for one.

To power an adaptive UI, machine learning algorithms watch interactions with a screen, a browser, or a piece of software. The commands and sequences of actions the user takes set a context for later use. A behavioral pattern emerges, and the adaptive interface uses that pattern to reorganize itself around the user’s preferred context. Plenty of adaptive UX examples exist in the market today, and they are only scratching the surface of what enterprise AI interfaces will require in the next phase.
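The loop described above (watch sequences of commands, let a pattern emerge, surface the likely next action) can be sketched with a first-order Markov model. This is a minimal illustration, not how NX or any named product works; the class name `NextCommandPredictor` and the CAD-style command names are assumptions made up for the example.

```python
from collections import Counter, defaultdict


class NextCommandPredictor:
    """First-order Markov model over a user's command stream (illustrative)."""

    def __init__(self):
        # transitions[prev][nxt] = how often `nxt` directly followed `prev`
        self.transitions = defaultdict(Counter)

    def observe(self, session):
        """Record one session, given as an ordered list of command names."""
        for prev, nxt in zip(session, session[1:]):
            self.transitions[prev][nxt] += 1

    def suggest(self, last_command, k=3):
        """Return up to k commands most often seen right after last_command."""
        followers = self.transitions.get(last_command)
        if not followers:
            return []  # no history yet: the interface keeps its default order
        return [cmd for cmd, _ in followers.most_common(k)]


# Hypothetical command names, for illustration only.
predictor = NextCommandPredictor()
predictor.observe(["sketch", "extrude", "fillet", "sketch", "extrude", "shell"])
predictor.observe(["sketch", "extrude", "fillet"])
print(predictor.suggest("extrude"))  # ['fillet', 'shell']
```

Returning an empty list for an unseen command is a deliberate choice in this sketch: with no evidence, the interface leaves its default layout alone rather than guessing.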

Adaptive UX design: how it differs from adaptive UI

Adaptive UX design adjusts the user experience across devices, channels, and sessions, where its UI counterpart focuses on personalizing what appears on a single screen to match a user’s preferences. UI/UX experts coordinate all user experience activities, from research and information architecture to interaction design and accessibility. The discipline uses machine learning to shape how the experience unfolds for each individual, rather than simply reacting to a fixed rule set.

Take, for example, websites optimized for certain browsers or screen sizes. Adaptive user experience sets parameters for graphic elements such as layout and visibility, as well as information presentation, deciding what content to display for which type of context. At an enterprise level, a platform serving field service technicians on a mobile device surfaces different controls than the same platform serving managers reviewing the same data on a desktop dashboard.

Adaptive UX powered by machine learning is an aid, not an agent. Its prime objective is to help the user sort through the volume of data, information, and possibilities available to them by presenting only options that are likely to be relevant. The algorithm observes interactions, builds patterns from the data, and re-prioritizes information on a screen in a way that aligns with the user’s most likely goal.

Where machine learning for content personalization is about spurring conversions and influencing user decisions, adaptive UX is the precursor. It sets the graphical and layout elements that presage a conversion by simplifying a user’s ability to interact with on-page elements in a personal way.

Most readers are already familiar with adaptive user experience through several common patterns. Recommendation lists suggest the next movie to watch or a product to consider. Personalized deals and special offers follow a user’s clicks, past purchases, and browsing history. One-click shortcuts surface the most likely next action. Ad visibility controls let users decide what they want to see and what to turn off.

Adaptive UI informs adaptive UX, and the two work together. With machine learning algorithms, the goal is to make digital environments, whether apps, internal platforms, or AI copilots, simple and beneficial to use. That is the theory. In practice, users still experience limitations with machine-learning-powered adaptive interfaces, and the limitation is rarely about power or accuracy. It is about transparency.

How adaptive UI actually works: the four dimensions

Adaptive interfaces operate along four dimensions that machine learning acts on: generating new knowledge, entering data or information, filtering information, and optimization. Each dimension answers a different user need. The design decision in every enterprise project is which of the four dimensions the interface should support, in what combination, and with how much user control over each.

Generating new knowledge is what most consumers recognize first. Netflix’s “You Might Also Like” list draws attention to content related to what a user has already watched. In enterprise contexts, this pattern appears in platforms that surface accounts with similar behavior to accounts the user has already closed, tools that flag research papers or regulatory filings that match an analyst’s current interests, and AI search tools like Perplexity that present related queries alongside the primary answer.

Entering data or information covers predictive keystrokes, form-filling, and command suggestions. A smart search engine deploys machine learning to spot interaction patterns that enable a search experience based on filters, recent or related searches, or federated searches. Current examples include Gmail’s Smart Compose, which predicts the rest of a sentence as the user types, and coding interfaces like GitHub Copilot and Cursor, which predict the next block of code rather than the next character.

Filtering information is where a program such as NewsWeeder learns about a user’s preferences from their ratings and builds a user profile that refines future output. Today this pattern runs enterprise BI dashboards that highlight anomalies without being asked, Slack’s prioritized inbox, and clinical decision-support platforms that surface only the lab results relevant to a specific patient encounter. The quality of the filter determines whether the user trusts what has been hidden from them.
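A rating-driven filter of this kind can be sketched in a few lines. The version below is a toy under stated assumptions: ratings are numbers in [-1, 1], items carry topic tags, and the class name `PreferenceFilter` is invented for the example. It does not reproduce NewsWeeder or any production system. The one design point it tries to show is that hidden items are returned rather than discarded, so the interface can tell the user what was filtered out.

```python
from collections import defaultdict


class PreferenceFilter:
    """Learns per-topic scores from ratings and filters items (illustrative)."""

    def __init__(self, threshold=0.0):
        self.threshold = threshold
        self.topic_totals = defaultdict(float)
        self.topic_counts = defaultdict(int)

    def rate(self, topics, rating):
        """Record a rating in [-1, 1]; negative means 'less like this'."""
        for topic in topics:
            self.topic_totals[topic] += rating
            self.topic_counts[topic] += 1

    def score(self, topics):
        """Average learned score over an item's topics; unseen topics count as 0."""
        if not topics:
            return 0.0
        per_topic = []
        for topic in topics:
            count = self.topic_counts.get(topic, 0)
            per_topic.append(self.topic_totals[topic] / count if count else 0.0)
        return sum(per_topic) / len(per_topic)

    def split(self, items):
        """Return (shown, hidden); hidden items are kept so the UI can reveal them."""
        shown, hidden = [], []
        for title, topics in items:
            target = shown if self.score(topics) >= self.threshold else hidden
            target.append(title)
        return shown, hidden


user_filter = PreferenceFilter()
user_filter.rate(["python", "ml"], 1.0)
user_filter.rate(["sports"], -1.0)
shown, hidden = user_filter.split([
    ("New ML library", ["ml"]),
    ("Cup final recap", ["sports"]),
    ("Gardening tips", ["gardening"]),
])
print(shown)   # ['New ML library', 'Gardening tips']
print(hidden)  # ['Cup final recap']
```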

Optimization is where route advisors or layout and adaptive design elements reorganize based on a user’s interests, intended actions, and even visual or optical tracking. Google Maps adjusts ETA live as traffic shifts. Warehouse picking systems reorder aisle routes based on current congestion and SKU priority. Field service dispatch platforms reassign technicians mid-shift when upstream data changes. Each case involves the interface making a decision the user would otherwise make by hand, and earning or losing trust based on whether that decision holds up.

Adaptive design versus responsive design: the distinction that matters

Responsive design adjusts a layout based on the size and shape of the device viewing it. Adaptive design adjusts layout, content, or available commands based on observed user behavior. They solve different problems, and a product can use both at the same time, but the two are not interchangeable.

Responsive design is a technical discipline about rendering. A marketing site reflows to fit a phone screen. A dashboard stacks its columns vertically when viewed on a tablet. The trigger is device properties, and the system knows how to respond before any user behavior is observed.

Adaptive design is a personalization discipline about behavior. A platform interface reorders its command list based on which commands this specific user hits most often. A dashboard surfaces the charts this analyst opens every morning. The trigger is the user’s history, and the system cannot respond until it has data to work with.
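The point that an adaptive system "cannot respond until it has data to work with" is the cold-start problem, and a small sketch makes it concrete. The function below is an assumption-laden illustration: the command names, the `min_events` threshold of 5, and the function name `adaptive_order` are all hypothetical.

```python
from collections import Counter

DEFAULT_ORDER = ["open", "save", "export", "share", "print"]  # hypothetical commands


def adaptive_order(usage, default_order=DEFAULT_ORDER, min_events=5):
    """Order commands by observed use once there is enough history.

    Falls back to the fixed default order during cold start, and breaks
    ties by default position so the layout does not shuffle arbitrarily.
    """
    if sum(usage.values()) < min_events:
        return list(default_order)
    return sorted(
        default_order,
        key=lambda cmd: (-usage.get(cmd, 0), default_order.index(cmd)),
    )


new_user = Counter()
print(adaptive_order(new_user))
# ['open', 'save', 'export', 'share', 'print']

analyst = Counter({"export": 9, "share": 4, "open": 4})
print(adaptive_order(analyst))
# ['export', 'open', 'share', 'save', 'print']
```

Breaking ties by default position is one way to keep the layout stable between sessions, so that small changes in usage do not reshuffle commands the user has barely touched.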

Most serious enterprise products use both. Responsive design handles the device-level adaptation that every user expects in 2026. Adaptive design handles the individual-level personalization that differentiates the product from competitors. Confusing the two leads to product teams specifying responsive requirements when they actually need adaptive behavior, or investing in adaptive features on a site that has not yet passed basic responsive testing.

The Black Box Model Holding Back Adaptive User Interfaces

The black box model is the design problem that occurs when a machine-learning-powered interface produces an output without showing the user which inputs or patterns led to that output. When users cannot predict what the interface will do next or understand why it did what it just did, they stop trusting it, regardless of how accurate the underlying model actually is.

When interacting with machine-learning-powered user interfaces, the inputs are deceptively simple, but which input matters and why is not always clear. Similarly, the outputs produced are not always useful, beneficial, or even consistent with user interaction. This raises the question: why?

Large consumer platforms such as Uber, Google News, Facebook, Instagram, Netflix, and Apple all face consistency issues with their adaptive user interfaces because much of the industry operates on the black-box model of machine learning.

Ostensibly, when users look for changes to their interface or digital environment, it is because elements within the environment are irrelevant to them. On the surface, this seems to occur because the deep neural networks or proprietary algorithms behind the interface are still learning from limited data. Low precision, then, appears to be par for the course. It is not.

A good user experience for adaptive interfaces creates a specific expectation about how the algorithm should behave. When actual behavior disrupts that mental model, the result is confusion rather than usefulness. One example of obscure predictive layouts is the low-precision or completely irrelevant Netflix suggestions on the “Because You Watched” lists. The first few suggestions have a clear pattern. Scrolling further surfaces recommendations that leave the user wondering whether the algorithm misread an earlier signal.

Black-box models of machine learning also present notable biases in so-called personalized offers or seemingly decentralized search results that run counter to engineers’ initial intentions. The best-known case is the 2019 Apple Card controversy, in which David Heinemeier Hansson reported publicly that the card had granted him a far higher credit limit than his wife.

That 2019 moment became one of the first publicly visible examples of adaptive-algorithm bias operating at consumer scale. Goldman Sachs, the card’s issuer, was later cleared by the New York Department of Financial Services, but that finding itself illustrates the design problem rather than resolving it. Regulators found no bias, and users still did not trust the system. What were the parameters of inputs that mattered? Did David Heinemeier Hansson’s wife interact with Apple Card differently from him? The answers are obscured by design. The issue feels academic when the stakes are a credit limit, and it feels urgent when the stakes are clinical, financial, or legal decisions.

The next stage of adaptive UIs is not only about operability or accuracy. It is about explainability. Adaptive user interfaces linked to adaptive user experiences do not only present a pre-made iteration of a layout, content, recommendations, or ads. They need to make room for transparency into which of a user’s inputs actually count, and allow users to take further action or adjust the system’s behavior when the output is wrong.

Mitigation interrupts the user experience, because personalization comes at the price of increased user effort. To take the Netflix example once more, personalization of layout and recommendations based on user, session, and device produces a very specific and granular adaptive user experience, right down to the thumbnails. But browsing, adding, and watching often create an environment riddled with near-duplicates, which increases interaction cost and degrades usability at its most fundamental level.

How regulation is reshaping adaptive UI design in 2026

Regulation moved adaptive UI transparency from a UX best practice to a legal requirement between 2023 and 2026. The EU AI Act, the Colorado AI Act, NYC Local Law 144, and the Air Canada chatbot tribunal ruling collectively established that companies are accountable for what their adaptive interfaces tell users, and that affected users must be able to understand and challenge the system’s output. This changed the brief for every enterprise design team shipping AI features.

The Air Canada case was the first to test the principle in court. In Moffatt v. Air Canada, decided by the British Columbia Civil Resolution Tribunal in February 2024, the airline was ordered to honor a bereavement fare refund that its customer-service chatbot had promised but which the airline’s own policy did not allow. The tribunal rejected the argument that the chatbot was a separate legal entity from the airline. The ruling established that a company is accountable for what its adaptive interface tells users, even when the interface produces output the company did not intend.

The EU AI Act entered into force in August 2024. Its high-risk system provisions require that affected systems be designed with enough transparency for users to interpret and use the output, that the system’s limitations and accuracy levels be communicated clearly, and that users be informed when they are interacting with AI rather than a human. These requirements phase in between 2025 and 2027 for systems already on the market.

US state and city rules moved in parallel. The Colorado AI Act, signed in May 2024 and effective in 2026, requires developers and deployers of high-risk AI systems to use reasonable care to avoid algorithmic discrimination and to notify consumers when AI is used in consequential decisions. New York City Local Law 144, effective July 2023, requires bias audits and candidate notifications for automated employment decision tools used within the city. Similar rules are moving through legislatures in California, Illinois, and Washington.

The design consequence is straightforward. Enterprise products shipping in 2026 need to surface, in the interface itself, which inputs the system used, what the confidence level of the output is, and how the user can challenge or override the output. Adaptive interfaces that withhold this information are moving from “inelegant UX” to “compliance risk” in the industries that use them.
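One way to make those three elements (inputs used, confidence, and a route to override) hard to forget is to carry them in the same object as the output itself. The sketch below is a hypothetical data structure, not a statement of what any regulation requires; the class name `ExplainedOutput`, its fields, and the ticket-priority example are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ExplainedOutput:
    """An adaptive output bundled with what a user needs to question it."""

    value: str
    confidence: float                    # model-reported score in [0, 1]
    inputs_used: List[str]               # human-readable signals behind the output
    overridden_by: Optional[str] = None  # the user's replacement value, if any

    def override(self, user_value):
        """Record the user's correction instead of silently discarding it."""
        self.overridden_by = user_value

    @property
    def effective_value(self):
        """The value the interface should act on after any user override."""
        return self.overridden_by if self.overridden_by is not None else self.value

    def render(self):
        """Plain-text rendering of the disclosure a UI might show."""
        lines = [
            f"Suggestion: {self.effective_value}",
            f"Confidence: {self.confidence:.0%}",
            "Based on: " + "; ".join(self.inputs_used),
        ]
        if self.overridden_by is not None:
            lines.append(f"You replaced the original suggestion: {self.value}")
        return "\n".join(lines)


out = ExplainedOutput(
    value="Priority: high",
    confidence=0.72,
    inputs_used=["3 similar tickets escalated last week", "customer tier: enterprise"],
)
out.override("Priority: medium")
print(out.render())
```

Keeping the original value after an override, rather than overwriting it, leaves a record that can feed both audits and later model improvement.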

Common pitfalls in enterprise adaptive UI design

Most enterprise adaptive UI projects fail in one of five predictable ways: personalization that does not match user intent, adaptive features that cannot be turned off, output that changes between sessions without explanation, machine learning integration that takes longer than design, and user research that does not include the moments when the algorithm is wrong. Each pitfall has a common root, which is that the product team specified personalization when they needed prediction, or requested automation when they needed assistance.

The first pitfall, personalization without intent alignment, is the Spotify DJ problem. A recommendation engine can be technically accurate and still feel wrong, because the user’s actual context, for example working versus exercising, is invisible to the model. Enterprise versions of this show up in sales intelligence platforms that keep recommending the same account tier regardless of which quarter the seller is working, because the model never learned that Q4 behavior differs from Q1 behavior.

The second pitfall, adaptive features that cannot be turned off, shows up when the design team adds personalization without adding a switch. Microsoft’s rollout of Copilot in Windows 11 and Office surfaced this broadly in 2024, with IT administrators asking for enterprise controls that the initial release did not provide. Users tolerate adaptive behavior they can control, and reject adaptive behavior they cannot.

The third pitfall, output changing between sessions without explanation, erodes trust faster than any other failure mode. Google Search AI Overviews faced sustained complaints through 2024 and 2025 about answers changing between queries the user thought were identical. When the system’s reasoning is invisible, users cannot tell whether the variation is meaningful or random, and most assume random, which means they stop relying on the output.

The fourth pitfall, ML integration taking longer than design, is a scope problem that becomes a timeline problem. A design that depends on a personalization signal the ML team has not yet shipped leaves the interface in a broken state, showing either placeholder output or nothing at all. Fuselab’s experience partnering with enterprise ML teams, including work for an AI and machine learning company documented in Clutch reviews, confirms a pattern the client described directly: technical ML products often lack in-house design leaders, which means design and ML work diverge unless the agency bridges them actively.

The fifth pitfall, research that skips the wrong-answer moments, is the most common and the most expensive. Enterprise UX research tends to focus on the happy path, where the algorithm gets it right and the user takes the suggested action. The harder and more important research is what happens when the algorithm gets it wrong. Does the user notice? Can they correct it? Do they learn to distrust the system globally, or only on this specific task? Projects that skip this research ship interfaces that look good in demos and degrade in production.
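If a team logs each suggestion together with whether it later proved correct, the wrong-answer questions above become measurable. The sketch below assumes a hypothetical log format of `(model_was_correct, user_action)` pairs with the action labels `accept`, `override`, and `abandon`; real instrumentation would need its own definition of "correct".

```python
from collections import Counter

ACTIONS = ("accept", "override", "abandon")  # hypothetical action labels


def outcome_rates(events):
    """Split user reactions by whether the model's output was correct.

    events: iterable of (model_was_correct: bool, user_action: str).
    Returns the share of each action for correct and incorrect outputs.
    """
    buckets = {True: Counter(), False: Counter()}
    for was_correct, action in events:
        buckets[was_correct][action] += 1

    rates = {}
    for was_correct, counts in buckets.items():
        label = "model_right" if was_correct else "model_wrong"
        total = sum(counts.values())
        rates[label] = {a: counts[a] / total for a in ACTIONS} if total else {}
    return rates


log = [
    (True, "accept"), (True, "accept"), (True, "override"), (True, "accept"),
    (False, "accept"), (False, "override"), (False, "abandon"), (False, "accept"),
]
rates = outcome_rates(log)
print(rates["model_wrong"])
# {'accept': 0.5, 'override': 0.25, 'abandon': 0.25}
```

In this toy log, half of the wrong outputs were accepted anyway, which is the "does the user notice?" question expressed as a number a research team can track.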

The Making of the Metaverse

Whether the immersive environments originally described as the metaverse arrive this decade or the next, enterprises that rely on ML-powered adaptive interfaces still have real problems to solve first. The path forward is less about VR hardware and more about building adaptive interfaces that earn trust today, because the same transparency requirements apply whether the output is a web recommendation or a fully immersive AI copilot.

Before immersive computing scales, even the largest technology companies will need to refine interactive elements of the adaptive user experience. The black box model is not inevitable, no matter what the industry narrative suggests.

Four clear paths forward exist. The first is giving users clear and consistent insight into which of their actions contribute to the output of ML algorithms. The second is allowing users to reorganize and re-sort elements of the output in ways that are easier for them, and tracking that reorganization as data to inform future predictive layouts and recommendations. The third is frontloading descriptions and headlines so users can scan the data, gain context quickly, and decide which actions to take. The fourth is personalizing based on a persistent user profile rather than varying the layout or experience by session or visit.
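The second and fourth paths can be combined in a small sketch: the user's manual reordering is stored in a profile that outlives the session, takes precedence over the model's ranking, and is logged as a signal for later training. Everything named here (`apply_user_pins`, `PersistentProfile`, the dashboard panel names) is hypothetical, and the profile is held in memory only to keep the example short.

```python
def apply_user_pins(model_ranking, pinned):
    """Place user-pinned items first, in the user's order, then the model's ranking.

    Pins that no longer exist in the ranking are ignored rather than raising.
    """
    available = set(model_ranking)
    head = [item for item in pinned if item in available]
    tail = [item for item in model_ranking if item not in head]
    return head + tail


class PersistentProfile:
    """Keeps manual reorderings across sessions (in memory here, for brevity)."""

    def __init__(self):
        self.pinned = []
        self.reorder_log = []  # (item, position) pairs, usable as a training signal

    def pin(self, item, position=0):
        """Move an item to the given position among the user's pins."""
        if item in self.pinned:
            self.pinned.remove(item)
        self.pinned.insert(position, item)
        self.reorder_log.append((item, position))


profile = PersistentProfile()
profile.pin("invoices")
profile.pin("alerts")  # the most recent pin goes to the front by default

model_ranking = ["reports", "alerts", "invoices", "settings"]
print(apply_user_pins(model_ranking, profile.pinned))
# ['alerts', 'invoices', 'reports', 'settings']
```

Letting explicit user choices win over the model's ranking is the design decision being illustrated: the adaptation stays predictable because the user can see and control the part of the order that matters most to them.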

Each path shares one common thread: control. Adaptive interfaces that give the user meaningful control over the adaptation itself earn trust and sustain usage. Adaptive interfaces that withhold that control feel invasive regardless of how accurate their predictions are. That tradeoff is the most important design decision enterprise product teams will make through the rest of the decade. Agencies building enterprise AI interface design projects in regulated industries navigate this tradeoff on every engagement.

Frequently asked questions

What is adaptive UI design?

Adaptive UI design is the practice of building interfaces that use machine learning to change their layout, content, or available commands based on observed user behavior. The interface adjusts automatically so that the most relevant elements appear first, without requiring the user to configure preferences manually. The goal is to reduce friction for each individual user while the underlying data model improves over time.

How does adaptive UI differ from adaptive UX?

Adaptive UI focuses on what appears on a single screen and how it is arranged for an individual user. Adaptive UX focuses on how the experience unfolds across devices, channels, sessions, and over time. The two work together: adaptive UI is a component of adaptive UX, but adaptive UX extends beyond any single interface to cover the full journey.

What is the difference between adaptive design and responsive design?

Responsive design adjusts a layout based on the device or screen size. Adaptive design adjusts layout, content, or interface behavior based on observed user behavior or context. Responsive design solves a technical constraint about rendering. Adaptive design solves a personalization problem using data the user generates as they interact.

Why do adaptive interfaces fail even when the underlying model is accurate?

Adaptive interfaces fail when users cannot predict what the interface will do next or understand why it did what it just did. Accuracy alone does not create trust. Users need to see which of their actions shaped the output and have a way to correct the system when it gets something wrong. Explainability, not accuracy, is the design gate.

How long does an adaptive UI design project typically take?

Adaptive UI design projects typically run 12 to 24 weeks from research through design handoff, with longer timelines in regulated industries such as healthcare and financial services. Research and pattern discovery usually take 4 to 6 weeks, design iteration 6 to 10 weeks, and design system documentation another 2 to 4 weeks. Projects that include machine learning model work alongside the interface work extend beyond this range.

What should I look for in an agency to build an adaptive UI?

Look for an agency that has shipped production adaptive interfaces, not only concept work, and that can explain its approach to explainability and user control. Ask to see a named enterprise project where adaptive behavior was part of the scope, and ask specifically how the agency handled cases where the algorithm’s output was wrong. Agencies without a direct answer to that question have not built the difficult parts of adaptive UI before.