Trusted by NASA, NIH, DHCS, Fiserv, and Uber. A US-based UX research agency built for healthcare, government, and regulated-industry products.

What UX research services include:

User interviews, usability testing, field studies, prototype testing, surveys, and card sorting. Each engagement is scoped to the specific product decisions your team needs to make, not to a default set of methods. Every engagement begins with a detailed kickoff meeting led by a senior researcher named in the contract; the work is not handed to junior staff after the pitch.

Not sure if research is the right next step?

Tell us what's wrong with the product and we'll tell you honestly whether research can fix it.

Testing with the wrong user population

The most common failure Fuselab encounters in new client engagements is testing with the wrong user population. A healthcare product tested only with physicians misses how nurses and pharmacists use the same interface under different time pressures. On the DHCS Medi-Cal project, caseworkers needed eligibility forms that supported rapid processing, while applicants needed enough clarity to complete them without help. Testing both populations together would have produced findings that worked for neither.

Testing on prototypes instead of production environments

Sample data lies. A prototype loaded with placeholder values catches layout and flow problems but hides the issues that only appear with real data volumes, real permissions logic, and real error states. Products that pass prototype testing and then fail at launch usually fail on production behavior that the test environment could not simulate.

Delivering findings too late to change direction

A PDF report delivered three weeks after testing ends is research the product team will not use. Fuselab shares session recordings and preliminary patterns within 48 hours of each testing round so the design team can adjust direction while research is still running.

Choosing methods before defining questions

The most expensive engagements are the ones that run every available method without first agreeing on what the data is supposed to prove. A two-week interview round followed by usability testing followed by a survey produces a lot of data and no clear product decision. This is what separates a UX research agency that scopes work around methods from one that scopes it around decisions.

The right method depends on the right question

UX research methods we deploy

A UX research agency that runs every method on every project has stopped doing research. Fuselab selects from six methods based on what the question demands: usability testing, in-depth interviews, field studies, prototype testing, surveys, and card sorting paired with tree testing.

Moderated and unmoderated usability testing

Moderated testing sessions, where a facilitator guides a participant through tasks, produce the deepest qualitative insights because the facilitator can follow up on unexpected behaviors in real time. Unmoderated testing scales better for validating specific hypotheses across a larger participant pool without the scheduling overhead of one-to-one sessions. The choice between them depends on whether the research needs depth or breadth at that stage of the project.

User interviews

In-depth interviews are foundational in every Fuselab research engagement because they reveal the reasoning behind user decisions that behavioral data alone cannot explain. A user who completes a checkout flow confidently and a user who completes it reluctantly look identical in analytics. An interview distinguishes between the two and identifies what the reluctant user almost gave up on, which is where the highest-value design improvements hide.

Field studies and contextual inquiry

Field studies move research outside the lab and into the environment where users actually work. Observing how someone uses a product at their desk, under interruption, with three other tools open alongside it reveals constraints that controlled testing cannot simulate. The DHCS engagement showed caseworker workflow friction that lab-based testing missed, and the NIH project applied the same approach across clinical tablet and patient mobile contexts.

Prototype testing

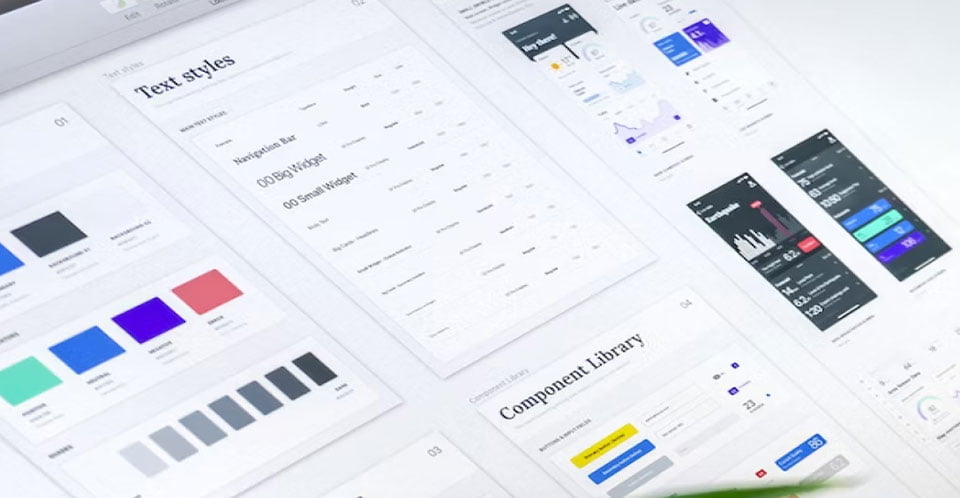

Testing interactive prototypes with real users before development begins catches structural problems when changes are still inexpensive. The critical distinction is testing task flows, not visual design. A prototype that looks rough but lets users complete real tasks produces more useful findings than a polished mockup that only demonstrates appearance. Fuselab builds clickable task-flow prototypes for every major engagement and tests them before any design enters the development pipeline.

Surveys and structured feedback

Surveys produce data at scale, but only when the right questions are asked at the right moment. A post-task micro-survey deployed immediately after a key workflow surfaces specific friction that interviews would miss, because users do not reliably remember small frustrations after the fact. Long, out-of-context surveys produce mostly noise. Timing is the harder problem: the point in the user's experience at which a question is asked largely determines whether the answer is useful.

Card sorting and tree testing

Card sorting reveals how users mentally organize categories and labels. Tree testing confirms whether users can find specific content within a proposed navigation structure. Both methods take days rather than weeks to complete and prevent costly information architecture restructuring after development starts. Fuselab uses both on every project involving navigation design or content reorganization, because architecture errors discovered after launch require a full structural rebuild rather than a simple content edit.

What a UX research engagement looks like

A typical Fuselab UX research engagement runs six to ten weeks from kickoff to final deliverables. The first week focuses on goal alignment and existing data review with the client team. Testing and data collection run in the middle weeks with live client access throughout. Analysis, recommendations, and implementation support close the engagement.

A UX research engagement begins by defining research objectives; reviewing existing analytics, user feedback, and design documentation; and identifying the specific questions the research must answer. Testing should not begin until the team agrees on what a successful outcome looks like.

Research sessions are recorded, so the product team can observe live or review recordings within hours. The methods deployed, whether moderated testing, field observation, interviews, or surveys, depend on the questions agreed at kickoff. Preliminary patterns surface continuously rather than waiting for a final report.

Healthcare, government, and fintech products require a regulatory review in parallel with user testing to identify where compliance frameworks shape interface decisions that general usability testing cannot surface. Accessibility issues that users silently work around are flagged at this stage, not after launch.

Analysis uses affinity mapping and thematic analysis to identify patterns across the data. Deliverables include a prioritized recommendation list, journey maps, personas, and testable prototypes. Each recommendation traces back to the specific user behavior that produced it.

Implementation work extends past the deliverable handoff. Working directly with the development team, the engagement translates research evidence into interface changes, information architecture adjustments, and testing protocols. Engineering review before build prevents mid-development revisions that slow delivery.

Four to eight weeks after implementation, a follow-up evaluation measures whether the design changes achieved what the research predicted. User behavior data, task completion rates, and support ticket volume are compared against the baseline captured at kickoff to confirm which findings still hold.

UX research project case studies

UX research by industry

UX research requirements vary by industry because the regulatory context, user populations, and task complexity differ at a structural level. A healthcare research protocol cannot be applied to a fintech product without adjustment. Fuselab's UX research services concentrate on the industries where domain knowledge most directly shapes methodology: healthcare, data visualization and dashboards, fintech, AI and machine learning, logistics and telematics, and enterprise software.

Clinicians switch user roles mid-task, patients reviewing results have ten to thirty seconds of attention, and administrators process sensitive data while managing interruptions. Commercial UX testing methodology does not transfer to these conditions, which is why healthcare engagements require custom research protocols. HIPAA and Section 508 are the baseline, not the differentiator. The harder question is whether the interface supports clinical decision-making when the user has seven seconds to choose, which is where most healthcare usability testing stops short.

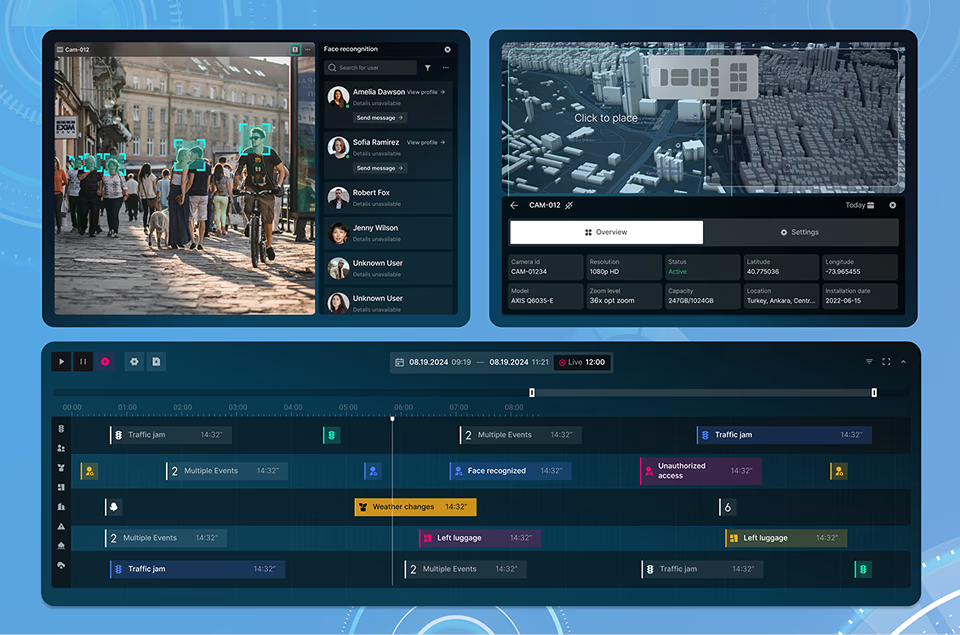

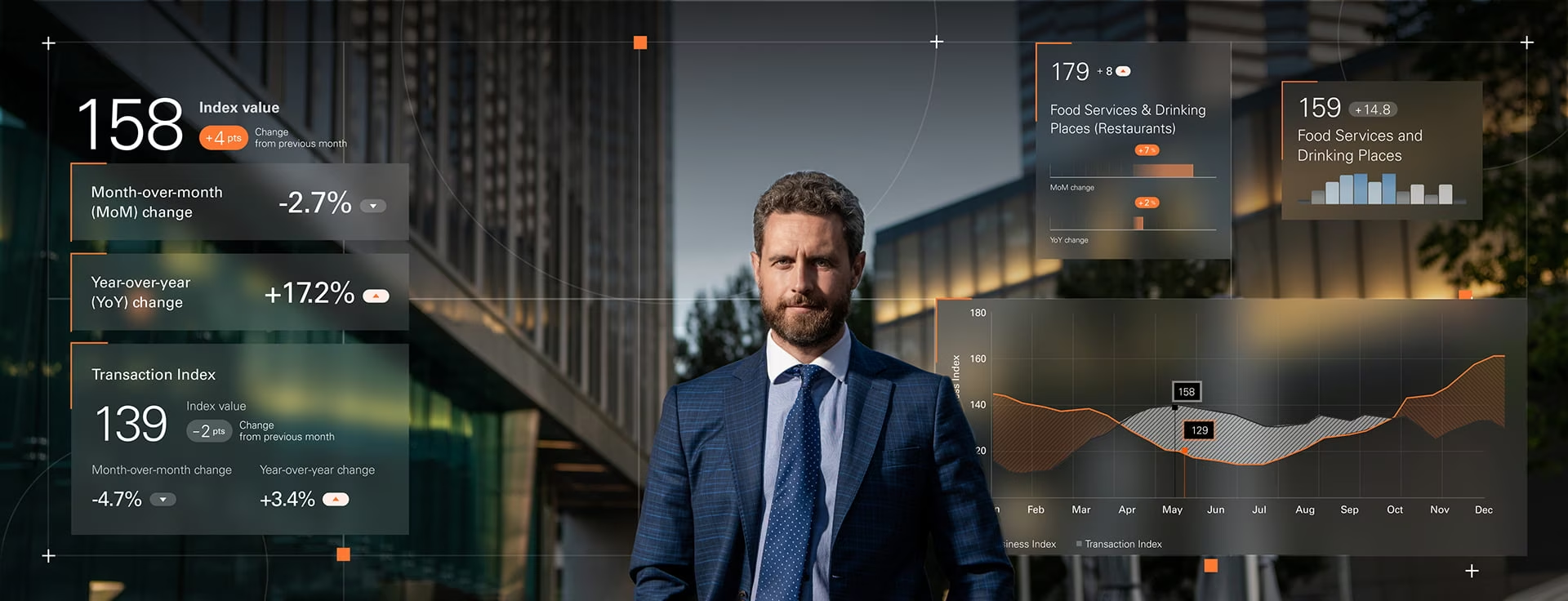

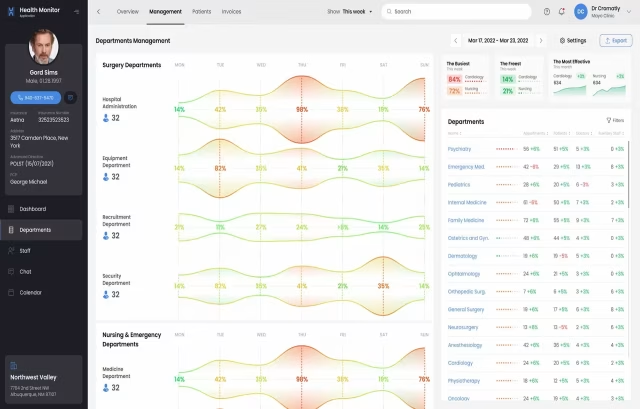

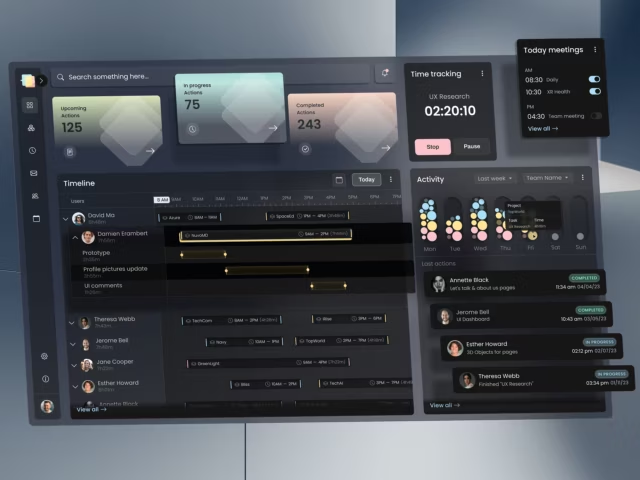

Users reading charts, filtering large datasets, and drilling into anomalies face a different research problem than users completing transactional tasks. The testing is about pattern interpretation rather than task completion, and most UX research frameworks built around transactional flows do not handle information density well. Dashboard research covers chart-type selection logic, information density thresholds, and how the interface handles edge cases in the underlying data.

A user entering bank account details abandons the task at the first sign of interface uncertainty. Financial research has to test trust and transaction confidence alongside standard usability, because the stakes change what users notice and what they tolerate. KYC sequencing, transaction-state communication, and error recovery patterns decide whether users complete onboarding or drop at verification. The hardest fintech UX research problem is not usability. It is how the interface behaves when the transaction fails and the user cannot tell why.

General UX testing does not cover the three things that matter most for AI products: whether users understand what the model is doing, whether they can calibrate their trust in its outputs, and whether they know when to override its recommendations. Research here tests how the interface communicates confidence levels, handles failures gracefully, and lets users provide corrections without needing to understand the underlying system architecture.

Interfaces used under motion, time pressure, and environmental distraction cannot be evaluated in a controlled testing lab. Field research is essential because in-vehicle and warehouse environments introduce variables that prototypes cannot simulate. A telematics dashboard that tests flawlessly on a laptop can fail within minutes when mounted in a moving vehicle under vibration and changing light conditions, which is why Fuselab tested the Automatize Platform in actual fleet environments rather than simulated workloads.

Trained operators, power users, and administrators perform the same tasks hundreds of times per week. They tolerate friction differently than first-time users because friction that feels minor in onboarding compounds into real cost when repeated daily. UX research for enterprise products focuses on keyboard shortcuts, bulk operations, and error recovery patterns rather than discovery flows or visual appeal.

Who leads the research team

Our team

Fuselab's UX research work is led by Marc Caposino, CEO and Founder, who has directed research engagements for the NIH, the California Department of Health Care Services, ClyHealth, Fiserv, and NASA across 20 years in regulated-industry UX. The senior team brings specialized backgrounds in healthcare, government, fintech, and AI interface design.

Don't Listen to Us. Read What Our Clients Are Saying.

We know that trusting an outsider with your vision can be scary. That is why, if you're not satisfied with us after the first two weeks, you can walk away owing us nothing.

"We went from prototype to usable software lightning fast, and our customer reviews have never been better."

"Their creativity and mastery of UX/UI design has made our years of working together enjoyable and incredibly successful!"

"If you need to rethink your product and need some truly unique design talent, Fuselab Creative's design team is your answer."

"We needed a nimble team of UI/UX designers to work with our development team, and they quickly became one of our most vital resources and far exceeded our expectations."

Ready to have a conversation?

Contact our UX Design team by filling out the form below!

Frequently Asked Questions

Fuselab Creative has been creating user-friendly and visually appealing digital interfaces for over a decade, and we still feel like we've only scratched the surface of our potential.

What is a UX research agency?

A UX research agency runs structured studies of how users behave with a digital product, then translates the findings into product decisions that design and engineering teams can act on. The work covers methods such as usability testing, interviews, field studies, prototype testing, and card sorting. Specialist agencies focus on regulated domains such as healthcare, government, or fintech, where domain constraints shape every research decision. A UX research firm that works across all industries without that domain depth will typically produce thinner findings on regulated-industry products than a specialist.

How much does UX research cost?

UX research services from US-based specialist firms typically range from $25,000 to $75,000 for a full engagement, with hourly rates between $100 and $250 depending on scope and regulatory complexity. Healthcare, government, and fintech projects cost more because compliance review adds structural work to every phase. Offshore generalist agencies charge less but rarely have the domain expertise that regulated-industry products require.

How long does a UX research project take?

A full-scope UX research engagement runs 6 to 10 weeks from kickoff to final deliverables, while rapid validation projects with narrow scope complete in 2 to 3 weeks. The variable that most affects timeline is participant recruitment, not analysis. Products with specialized user populations like clinicians, compliance officers, or logistics operators take longer to recruit than products with general consumer users.

What is the difference between UX research and market research?

Market research studies what people say they want through surveys, focus groups, and demographic analysis. UX research studies what people actually do with a product through direct observation, task-based testing, and behavioral data. Market research answers whether demand exists; UX research answers whether the product works for the people using it.

What deliverables do we receive at the end of the engagement?

UX research engagements deliver a prioritized list of design recommendations grounded in specific user observations, along with artifacts the product team uses after the engagement ends. The standard deliverable set includes journey maps, validated personas, user flow documentation, indexed session recordings, and testable prototypes that demonstrate the recommended changes. Every recommendation traces to a specific observation in the data, not to a general best practice the team could have read online.

Can UX research be done on a product that is already live?

UX research on live products is often more valuable than research during redesign, because testing on production systems captures real performance, real data, and real user behavior that prototypes cannot simulate. A dashboard loaded with sample data behaves differently from the same dashboard pulling live records across multiple API sources. Research on live products identifies the problems users actually encounter, not the problems a prototype predicts.

Who will actually lead our UX research engagement?

A principal researcher with experience in the client’s specific industry leads every Fuselab engagement from kickoff and is named in the contract. This protects the client from the common pattern where the senior researcher who pitched the work disappears once the contract is signed.
