How User Interface Design is Changing

To explain the “emerging” field of UI/UX design 10 years ago, early adopters, practitioners, and dabblers liked to use this image:

UI and UX practices draw on many intersectional areas of expertise. In the last 10 to 15 years, the explosion of boot camps and post-secondary programs dedicated to the legitimization of UI has only served to make its boundaries clear. The result?

UI Design and Web Strategy

Today, UI services have become an integral part of web strategy. By traditional standards, that’s fast. By digital standards, this speed of progress is par for the course. Already, we’re asking about (and seeing) the “future” of interface design. Of course, the jury’s still out on this one, but here are 10 trends that indicate exponential leaps and changes in the making.

Future of Interface Design

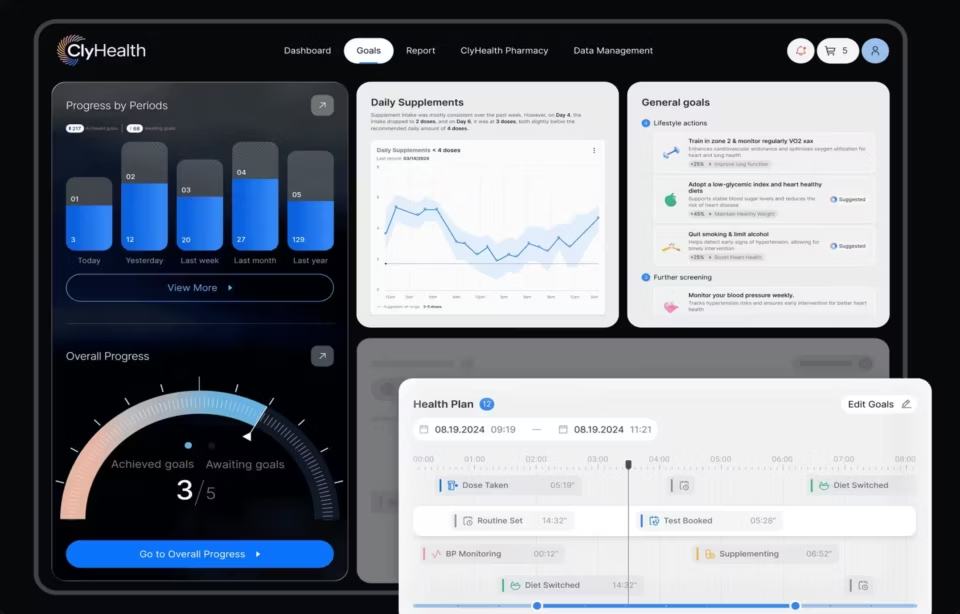

1. Data-Driven

Being “data-driven” is becoming a key standard for digital marketing practices, from copywriting to consumer behavior and even resource management.

The future of user interfaces takes this new standard to the next level, allowing consumers to personalize a visualization and customize their views.

This also means that users will be able to “self-select” the functionality, information, and processes that matter most to them or that will fulfill their end goal.

This asks UI specialists to harness deep learning from analytics and AI platforms, which will provide insight even to smaller businesses rather than remaining confined to the realm of “Big Data”.

2. Screens Will Disappear

Everyone knows about software. It runs our lives. But what about “smartware”?

Software is moving to the cloud and becoming a service. Meanwhile, in the land of user interface, terminals are being eliminated.

Perhaps the most significant shift in user interfaces is that, with “smartware,” screens have the potential to disappear entirely.

Smartware is the name given to devices or computing systems that “require little active user input, integrate the digital and physical worlds, and continually learn on their own” (UX Matters).

From 3D printers and VR headsets to delivery platforms like “Google Glass,” the user interface is going to shift from delivering information, interaction, and moments of engagement via screens to delivering experiences that are integrated into our lives through these smarter devices.

3. Intelligent Assistant Integrations

The key in “UI” is the “I”: that is to say, the ability of humans (or “users”) to interface and interact with their computers. This relies on repetitive and learned behavior by the user, over time, to create a reliable process. With the rise of digital assistants, however, the way we interface with our terminals is undeniably changing.

Intelligent digital assistants are currently our “middlemen”, acting as a go-to first stop for information and for carrying out actions or commands. However, as digital assistants are listening and doing, they are also learning. In the next five years, this collective “learning” will result in higher cognitive and intuitive computing that allows us to interact seamlessly, using highly colloquial and informal language and commands.

4. This is Your Brain on Bots

Speaking of emerging technology, UI’s focus will begin to shift away from visual identity, including color, copy, and placement, and towards integrating a new source of interaction: chatbots.

If you haven’t noticed by now, the larger themes that keep recurring in the evolution of UI are deep learning and data tracking to support deeper insight into customer behavior, all with the aim of arriving at a more intuitive and integrated experience. To that end, NLP, or natural language processing, is:

“A technological process that allows computers to derive meaning from user text inputs. In doing so, it attempts to understand the intent of the input, rather than just the information about the intent itself…In the context of chatbots, integrating NLP means adding a more human touch”— Chatbots Magazine

This “human touch” goes one step further with chatbots. Instead of simply programming preset and default responses, the use of NLP in UI practices will allow chatbots to be “trained” to streamline responses and bring interpretation to the communication mix.
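The difference between preset responses and a “trained” bot can be sketched in a few lines. The following is only a toy illustration, not how production NLP works: it “trains” on a handful of hypothetical example phrases by counting words per intent, then picks the intent whose words overlap most with the user’s input. The intent names, phrases, and function names are all assumptions made for this example.

```python
import re
from collections import Counter

# Hypothetical training phrases per intent (assumed for illustration only).
TRAINING = {
    "track_order": ["where is my order", "track my package", "has my order shipped"],
    "reset_password": ["i forgot my password", "reset my password", "cannot log in"],
}


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def train(examples):
    """Count how often each word appears in the phrases of each intent."""
    return {
        intent: Counter(word for phrase in phrases for word in tokenize(phrase))
        for intent, phrases in examples.items()
    }


def classify(model, text, fallback="unknown"):
    """Return the intent whose training words overlap most with the input."""
    words = tokenize(text)
    scores = {
        intent: sum(counts[word] for word in words)
        for intent, counts in model.items()
    }
    best = max(scores, key=scores.get)
    # If no word matched any intent, admit that the input was not understood.
    return best if scores[best] > 0 else fallback


model = train(TRAINING)
intent = classify(model, "Can you track my package?")
```

Adding more example phrases changes the bot’s behavior without rewriting any rules, which is the sense in which such a bot is “trained.” Real systems replace the word counts with statistical language models.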

5. Moving From Touch to Voice & Facial Recognition

Speech recognition has been under development since 1952 when Bell Labs first designed a prototype known as “Audrey.”

IBM followed up this initial foray 10 years later, in 1962, with “Shoebox,” which moved from Audrey’s parsing of single digits spoken by a single person to a “recognized” vocabulary of 16 English words. Obviously, with Siri on iPhones, we’ve come a long way since then.

The intelligent user interfaces of the future will include voice and speech recognition as part of their standard functionality. VoiceLabs projected that approximately 24.5 million voice-driven devices would become a part of households in 2017.

As this new form of interaction evolves over the next few years, we’ll see a greater level of specialization, considering how to “design” voice as an entirely personal “experience.”

6. A New Kind of “Network”

That’s one small step for man, one giant leap for, well, device-kind. The “Internet of Things” (IoT) is a significant shift towards that inevitable moment of digital integration, a trend that now extends from intangible things like user interaction to physical, tangible computer terminals.

UI practices in the future will have to take into account that devices are linked, inextricably, and increasingly, in a network where they can indeed speak to each other. In this environment, what do users expect? How can this network streamline tasks?

7. Personalized Views

Personalized views in user interaction are aiming to put the control back into the hands of users. Today, this means user-centric dashboards where users can decide what information is the most relevant and “personalize” their top-down views.

The future of UI on this front foresees a more granular level of control that allows us not only to change the way we see things but also to manipulate the experience of, for example, a website’s functionality.

8. Real-Time Responses

This trend borders on a larger ethic of “agile” development.

Agile software development is a way to approach the creation and project management of software and development projects in small, lean teams.

The idea is to ship the bare bones or “minimum viable product” and then iterate to improve, based on how users are interacting with the shipped version.

This means that development is occurring in a “real-time” and ongoing way.

Real-time responses are one of the main benefits of using Big Data to analyze large-scale trends and support decisions. The future of UI will incorporate this “real-time” aspect of data, allowing decisions about the design of interfaces to be made on the fly. Data, similarly, will be tracked in real time, with machine learning used to predict patterns.

9. Designing for Warmer Engagement

At the beginning of 2018, Facebook announced a major change to its News Feed aimed at making its users’ experience of engagement more authentic and personal, rather than focused on advertising and brand presence.

But how do you design for “meaningful social interactions”?

The future of UI could actually include some civic good, optimizing experiences not just for converting customers but actually connecting users, thereby mitigating some of social media’s more “isolating” effects.

These features could include a “cool-off” period after commenting, asking users if they’re sure they want to post, or putting in a “delay” for posting, especially in the case of an influx of negative comments.
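As a rough sketch of how such a feature might be decided in code, the function below combines a per-user “cool-off” period with a longer delay and a confirmation prompt when a thread has received a burst of negative comments. Every name and threshold here (`PostingPolicy`, `decide_posting`, the 30-second and 120-second values) is a hypothetical choice for illustration, not a description of any real platform’s behavior.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PostingPolicy:
    # All thresholds are illustrative assumptions, not real platform values.
    cool_off_seconds: float = 30.0       # minimum gap between a user's comments
    negative_burst_limit: int = 5        # recent negative comments that mark a thread as heated
    burst_delay_seconds: float = 120.0   # delay applied while a thread is heated


def decide_posting(
    now: float,
    last_comment_at: Optional[float],
    recent_negative_comments: int,
    policy: PostingPolicy = PostingPolicy(),
) -> dict:
    """Return how long to hold a comment and whether to ask 'are you sure?'."""
    wait = 0.0
    if last_comment_at is not None:
        # Cool-off: hold the comment until enough time has passed since the last one.
        elapsed = now - last_comment_at
        wait = max(0.0, policy.cool_off_seconds - elapsed)

    # Heated thread: apply the longer delay and ask the user to confirm.
    heated = recent_negative_comments >= policy.negative_burst_limit
    if heated:
        wait = max(wait, policy.burst_delay_seconds)

    return {"wait_seconds": wait, "ask_confirmation": heated}
```

Keeping the thresholds in a single policy object means designers can tune the “warmth” of the experience, for instance from user testing, without touching the decision logic.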

10. Interfaces and Instincts vs. Behavior and Experiences

Besides the larger threads of machine learning, cognitive computing, and intuitive experience, the change in UI practices will focus on designing for a new kind of human behavior.

Instead of being “instinctual,” human behavior is going to become a matter of habit. Users will be learning their new environments and platforms, as the platform itself is learning their behavior and codifying it.

This means that there will be a greater focus than ever before on user testing, so that a user’s response to a new design becomes more predictable. This testing will draw on aspects of NLP, real-time data tracking, and the other UI trends we’ve seen here.