Intelligent User Interface Design: When AI & UI Converge

To understand how intelligent user interface (IUI) design merges artificial intelligence (AI) and user experience (UX), it helps to start with its purpose: improving communication between humans and computers. For developers and designers, the goal of IUI is to make interactions more flexible, more usable, and more relevant to the user.

Intelligent User Interfaces

Demand for better UX exists in almost every kind of interaction between people and screens. Yet IUI is often overlooked, even though it both addresses UX and brings AI into the equation. An IUI often includes an “agent,” an intelligent virtual guide that makes human-machine interaction feel more natural through human-like verbal and nonverbal behavior. Some agents can now also interpret gestures, a key form of human nonverbal communication.

The term IUI combines three words: intelligent, user, and interface. It was first used in 1988 at a workshop on architectures for intelligent interfaces, and that workshop gave rise to a new field of study. By 1991, the workshop's founders had published a book about IUI. That was decades ago, long before smartphones and digital assistants became widespread. To understand how IUI has expanded, remember that its roots predate the era in which UX and AI became a regular part of life.

The Impact of AI on UX

To understand how UX and AI merge, look at AI's influence on UX. UX existed on its own well before it became intertwined with machine intelligence, and it became a buzzword that every designer and developer suddenly felt they had to embrace. Only more recently has AI begun to shape UX.


AI is now an integral part of everyday life and of the technology we interact with. Interaction between humans and computers has come a long way: many things you can do today would have seemed like fantasy only a few years ago. Much of that interaction now happens through intelligent user interfaces, thanks to the collaboration between AI and UX.


So, how has AI influenced UX? Computers can now see, perceive, and make decisions, and autonomous cars are one result. AI in UX also appears in something as simple as social media. Pinterest's visual search, for example, lets you search using a photo of something you are interested in.

Rather than relying only on pre-programmed rules, AI is increasingly embedded in technology so that it can keep learning. As it learns, its decisions can become more personalized.

AI is not a replacement for humans. In the Pinterest example, the user took a picture of something he or she was interested in and let the computer find it. Both sides of the conversation, human and computer, work best when each does what it does best.

AI's most powerful influence on UX is that it helps solve problems. Humans must first identify the problems, and AI then contributes insight and discovery.

It can be argued that only humans have empathy for other users and can truly understand their needs.

However, it can also be said that it’s better to remove subjectivity from the UX equation so the experience is based on data, not assumptions.

The Emergence of the IUI Designer

Under AI's deep influence, UX has become multidisciplinary, and IUI is now one of its disciplines. UI/UX designers can therefore shift toward IUI and take UX to the next level.

IUI designers have a single focus: making the interaction between humans and computers more intelligent. They may work in research areas such as natural language understanding, brain-computer interfaces, or gesture recognition.

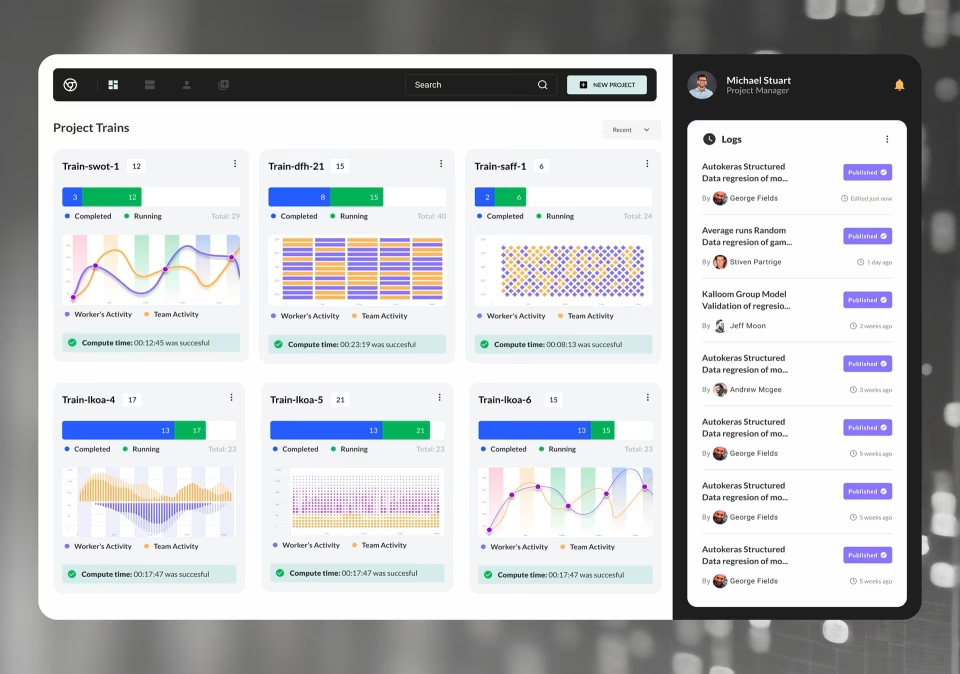

One example of how IUI brings UX and AI together comes from MIRALab at the University of Geneva. It involves real-time data captured while an athlete is running. A wearable device attached to the knee measures the joint's activity during the run.

The system processes this data and can report concerns about the run in real time. Because the wearable is connected to the runner's smartphone, the runner can receive a message telling them to slow down or stop. The AI interprets the physiological data and communicates it in a way that helps the person make smarter choices during exercise.
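The article does not describe how MIRALab's system processes the knee data. The sketch below is purely hypothetical and only illustrates the general loop of reading a sensor stream, smoothing it, and deciding whether to notify the runner's phone. It uses a simple rolling-average threshold check, not a trained AI model. The "knee strain" score, both thresholds, and the `KneeMonitor` class are invented for illustration.

```python
from collections import deque
from statistics import mean
from typing import Deque, Optional

# Hypothetical thresholds for a made-up "knee strain" score from 0 to 100.
SLOW_DOWN_THRESHOLD = 70.0
STOP_THRESHOLD = 85.0


class KneeMonitor:
    """Smooths a stream of knee-strain readings and decides whether the
    runner's phone should show a warning. Rule-based, not a trained model."""

    def __init__(self, window_size: int = 5) -> None:
        # Keep only the most recent readings, so a single noisy sample
        # has a limited effect on the average.
        self.readings: Deque[float] = deque(maxlen=window_size)

    def add_reading(self, strain: float) -> Optional[str]:
        self.readings.append(strain)
        rolling_avg = mean(self.readings)
        if rolling_avg >= STOP_THRESHOLD:
            return "Stop: knee strain is very high."
        if rolling_avg >= SLOW_DOWN_THRESHOLD:
            return "Slow down: knee strain is rising."
        return None  # Nothing worth interrupting the run for.


if __name__ == "__main__":
    monitor = KneeMonitor(window_size=3)
    for value in [60, 65, 80, 90, 95]:
        message = monitor.add_reading(value)
        if message:
            # A real app would send this as a push notification instead.
            print(f"reading {value}: {message}")
```

Averaging over a short window is one way to avoid alarming the runner over a single spike. A real system would presumably use richer physiological signals and a learned model in place of fixed thresholds.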

Another example comes from the Intelligent User Interfaces Lab at Koç University. One of the lab's projects involves a “smart” pen. When a person sketches something, the system recognizes the shape.

It then adds appropriate, relevant objects to the sketch. In other words, the IUI tries to understand what the user is trying to do and then helps him or her do it.
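The lab's actual recognition technique is not described here. As a rough illustration of the "recognize the shape, then offer relevant objects" idea, the hypothetical sketch below classifies a single pen stroke using simple geometry rather than machine learning. The classification rules, the `tol` parameter, and the `SUGGESTIONS` table are all assumptions made for this example.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]

# Hypothetical mapping from a recognized shape to objects the interface
# could offer to add to the sketch.
SUGGESTIONS: Dict[str, List[str]] = {
    "line": ["arrow", "road"],
    "circle": ["wheel", "sun"],
    "closed shape": ["box", "window"],
}


def path_length(points: List[Point]) -> float:
    """Total length of the pen stroke, summed segment by segment."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


def classify_stroke(points: List[Point], tol: float = 0.1) -> str:
    """Very rough geometric classifier for one densely sampled stroke."""
    length = path_length(points)
    if length == 0:
        return "unknown"
    gap = math.dist(points[0], points[-1])
    # Nearly straight: the endpoints are almost as far apart as the path is long.
    if gap >= (1 - tol) * length:
        return "line"
    # Closed: the pen ends close to where it started.
    if gap <= tol * length:
        cx = sum(x for x, _ in points) / len(points)
        cy = sum(y for _, y in points) / len(points)
        radii = [math.dist((cx, cy), p) for p in points]
        mean_r = sum(radii) / len(radii)
        # A circle stays at a roughly constant distance from its center.
        if max(radii) - min(radii) <= 2 * tol * mean_r:
            return "circle"
        return "closed shape"
    return "unknown"


def suggest_objects(points: List[Point]) -> List[str]:
    """Objects the interface might offer for the recognized shape."""
    return SUGGESTIONS.get(classify_stroke(points), [])


if __name__ == "__main__":
    # A hand-drawn circle approximated by 37 points around the unit circle.
    stroke = [(math.cos(2 * math.pi * k / 36), math.sin(2 * math.pi * k / 36))
              for k in range(37)]
    print(classify_stroke(stroke), suggest_objects(stroke))
```

This heuristic needs densely sampled strokes. For example, a square given only by its four corners would look like a circle to it. That limitation is one reason research systems move toward learned recognizers.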

Can IUI Design Be for the Individual?

As we grow more dependent on computers, we expect them to “act” more human, and that is the objective of IUI. However, every user is an individual, and each person's actions can depend on his or her own circumstances.

User-centric design isn't easy when there are so many different types of users to accommodate. For better or worse, most designers therefore group users, and those groups become the foundation of the interface. To be fully user-focused, however, designers would need to treat each user as an individual.

Designing at the individual level, however, creates challenges that traditional UX and interface design cannot solve without AI. Traditional UX methods remain valuable in the product design process and will still drive key decisions. AI takes this further by continuously calibrating the design based on each user's actions.

AI can recognize patterns in a user's behavior and make small changes to the UI to account for them. Over time, these small changes compound, gradually moving the interface toward one tailored to that individual.
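To make the idea of small, compounding UI adjustments concrete, here is a minimal hypothetical sketch: a menu that reorders itself by how often, and how recently, a user picks each item. It is simple frequency-based personalization, not a machine-learning model. The `AdaptiveMenu` class, the item names, and the `decay` value of 0.9 are assumptions made for illustration.

```python
from typing import Dict, List


class AdaptiveMenu:
    """Reorders menu items by a decayed usage score (hypothetical sketch)."""

    def __init__(self, items: List[str], decay: float = 0.9) -> None:
        self.items = list(items)  # Original order, used to break ties.
        self.decay = decay
        self.scores: Dict[str, float] = {item: 0.0 for item in items}

    def record_click(self, item: str) -> None:
        if item not in self.scores:
            raise ValueError(f"unknown menu item: {item}")
        # Fade older behavior slightly, then credit the clicked item.
        # Each click is one small adjustment; many clicks add up.
        for key in self.scores:
            self.scores[key] *= self.decay
        self.scores[item] += 1.0

    def ordered_items(self) -> List[str]:
        # Highest score first. Python's sort is stable, so items with
        # equal scores keep their original menu order.
        return sorted(self.items, key=lambda item: -self.scores[item])


if __name__ == "__main__":
    menu = AdaptiveMenu(["Home", "Search", "Reports", "Settings"])
    for click in ["Reports", "Reports", "Search"]:
        menu.record_click(click)
    print(menu.ordered_items())  # ['Reports', 'Search', 'Home', 'Settings']
```

Because of the decay factor, older habits gradually lose weight. If a user's behavior changes, the menu drifts to match the new pattern without the user doing anything.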

IUI is, of course, still in flux, because technology continues to advance rapidly.

Even so, IUI will continue to be where UX and AI meet, so much so that UX will eventually always be integrated with AI. Our user interface design agency can help with the main activities involved, including user flow mapping, prototyping, wireframing, and interaction design.
