Conversational AI design and the machine learning shift

Conversational AI design is the practice of building interfaces where users interact with a machine learning system through natural-language dialogue, organized around how the underlying model handles uncertainty, recovery, and confidence. The field now spans three architectural eras that coexist in production: rule-based chatbots, LLM-powered assistants, and agentic systems, each demanding different UX patterns even when the surface looks the same.

After nine years of building conversational interfaces for healthcare, government, and enterprise clients, we keep seeing the same pattern: product teams design for the wrong architectural era. The model is fine. The interface is wrong for what the model can do.

What machine learning changes about conversational AI design

Machine learning changes conversational AI design in three concrete ways. Outputs become probabilistic rather than deterministic, meaning the same question can produce different responses across sessions. Capabilities expand without explicit programming, making the surface harder for users to scope. And failure modes shift from “the system did not match my intent” to “the system gave me a confident answer that was wrong.”

The shift from deterministic to probabilistic output is the change most product teams underestimate. In a rule-based system, every input maps to a programmed output, and design validation means walking through scripted flows. In a machine learning system, the same input can produce different outputs across runs, which means the interface must be designed to accommodate variance, not fight it. Designers who skip this shift ship products that pass demo testing and break in the first week of production.

The expansion of the capability surface is the second change. A rule-based bot knew exactly what it could answer because someone wrote each intent by hand. An LLM-powered assistant has a capability surface that no one explicitly programmed, expanding with every model update. Users do not know where the boundary is, and the model frequently does not know either.

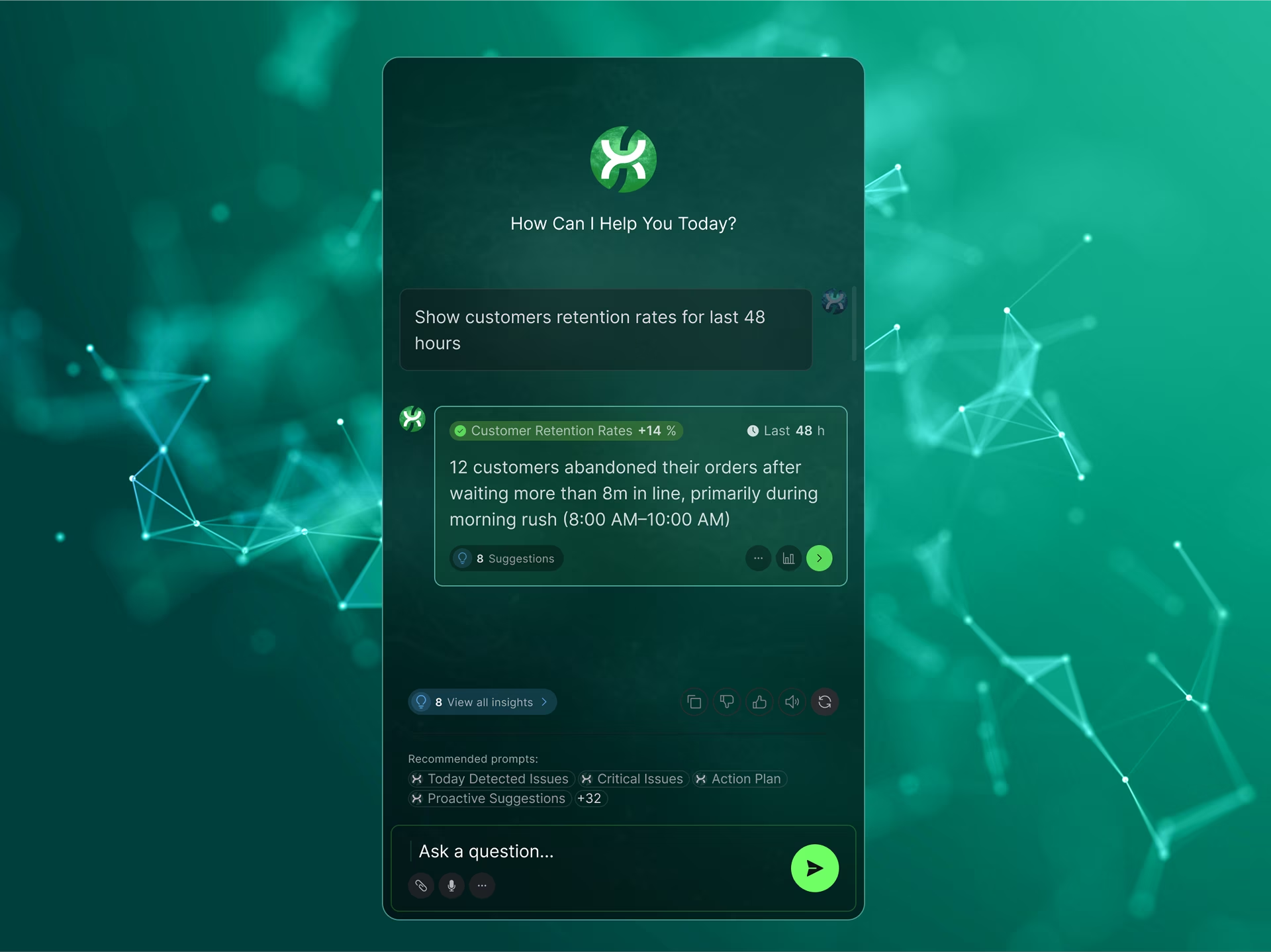

The third change is in how failure presents itself. A scripted bot failed visibly with a fallback message that signaled the limit. A machine learning system can fail invisibly by returning a confident response that happens to be wrong. The design implication is that confidence signaling becomes a first-order feature rather than a polish item. Without it, users either trust the system too much or stop trusting it entirely. Few things frustrate users more than investing time in a bot chat, only to realize the bot cannot get them to their goal and they will have to start over with a live person.

The three architectural eras of conversational AI

Conversational AI design now spans three architectural eras: rule-based bots running scripted intents, LLM-powered assistants generating responses from learned patterns, and agentic systems that plan and execute multi-step tasks across tools. Each era requires distinct design patterns. Treating them as a single design problem is why most enterprise conversational AI deployments stall after the demo.

The rule-based era produced design patterns most product teams still default to: scripted intent flows, quick-reply buttons that constrain user input, and fallback to human handoff when the bot hit its limit. The interface design was about constraint. Users were shown what the bot could answer, and the design’s job was to keep them on the rails. Failure modes were predictable, and the interface signaled capability through structure.

The LLM era changed the substrate underneath the interface. The system can now generate plausible responses to almost any input, including inputs the designer never anticipated. Design now needs to communicate which questions the system can answer reliably versus which ones it might fabricate. The interface must surface confidence calibration, source attribution where the answer is factual, and clear paths back when generation fails. The constraint-based patterns from the previous era over-restrict the model and waste its capability.

The agentic era shifts the design problem again. The system now takes actions, not just produces text, and a correct response means correct execution of a multi-step plan involving tools, APIs, or external systems. Designing for agentic systems means designing for state visibility between turns, reversibility controls on consequential actions, and confirmation prompts calibrated to the cost of getting a step wrong. Stanford HAI’s 2025 AI Index Report found that AI agents outperform human experts four to one on short, two-hour tasks but fall behind by two to one at 32 hours, which is exactly why those specific design properties matter: agentic systems are strong at narrow execution and brittle at long-horizon judgment.

The cost of mismatched design is where most enterprise projects break. A rule-based UI pattern dropped onto an LLM-powered backend over-restricts the model and frustrates users who can sense the capability behind the constraint. An LLM pattern dropped onto an agentic backend leaves the user blind to what the system is doing between turns. The deployments that stall almost always stall at this mismatch, not at the model itself.

Confidence, recovery, and fallback: the design properties that change most

Confidence display, recovery patterns, and fallback behavior change more across machine learning architectures than any other design property. A rule-based bot’s “I don’t understand” message is a fundamentally different problem from an LLM assistant fabricating a confident wrong answer, which is again different from an agent executing three correct steps and a fourth wrong one before any human can intervene.

Confidence display is binary in rule-based systems. The bot either matched an intent or fell to a fallback. In LLM-powered systems, the model can produce a confident-sounding response even when its internal probability is low, and the design problem is to surface that gap to the user. Patterns that work include source citations on factual claims, hedging language calibrated to the model’s actual reliability rather than to a tone of voice, and visible reasoning where the answer required inference. A system that returns every response with equal confidence loses user trust the first time a confident answer turns out to be wrong.
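One way to make that calibration concrete is to pick the presentation of a response from a confidence estimate rather than from tone. The sketch below is a minimal illustration, not a prescribed implementation: the `Draft` type, the `choose_presentation` function, and both thresholds are hypothetical, and it assumes the system can produce a reasonably calibrated confidence estimate in the first place, which many LLM deployments cannot do without extra evaluation work.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Presentation(Enum):
    DIRECT = "direct"  # state the answer plainly, with its citations
    HEDGED = "hedged"  # answer, but with explicit uncertainty wording
    DEFER = "defer"    # do not answer; offer a clarifying question or handoff


@dataclass(frozen=True)
class Draft:
    text: str
    confidence: float         # assumed calibrated estimate in [0, 1]
    sources: Tuple[str, ...]  # citations backing factual claims, possibly empty


def choose_presentation(draft: Draft, high: float = 0.85, low: float = 0.5) -> Presentation:
    """Map a draft response to a presentation mode (thresholds are illustrative)."""
    if not 0.0 <= draft.confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if draft.confidence < low:
        return Presentation.DEFER
    # A high score alone is not enough: an unsourced claim is still hedged.
    if draft.confidence >= high and draft.sources:
        return Presentation.DIRECT
    return Presentation.HEDGED


print(choose_presentation(Draft("Policy covers X.", 0.9, ("policy.pdf",))))
```

The design choice worth noting is that citations and confidence are checked together, so a fluent but unsourced answer never receives the most assertive presentation.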

Recovery patterns determine what happens when the system fails to answer well. In our work on the Stardog Voicebox conversational AI interface, the recovery pattern was redesigned after the first version showed users abandoning queries the system could actually have answered. The change replaced a generic “I didn’t understand” with structured query suggestions scoped to the user’s domain, surfaced inline when query parsing failed. Abandonment dropped substantially. Recovery is design work, not error handling.

Fallback behavior is where architectural era matters most. In a rule-based system, fallback was a handoff to a human. In an LLM-powered system, fallback often means narrowing scope: asking the user to disambiguate, surfacing options, or admitting uncertainty rather than fabricating an answer. In an agentic system, fallback becomes harder because the agent has already taken steps. Designing for agent fallback means deciding which steps are reversible, which require confirmation, and how to surface state to the user mid-flight.
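The agent fallback decisions described above can be sketched as a simple gating rule applied to each planned step. This is an illustrative assumption about how such a policy might look, not a description of any specific product: the `Step` fields, the `gate` and `preview` functions, and the cost threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Step:
    name: str
    reversible: bool
    cost_if_wrong: float  # hypothetical estimate of the damage if the step is wrong


def gate(step: Step, confirm_above: float = 100.0) -> str:
    """Decide how much user involvement a planned step needs before it runs."""
    if not step.reversible or step.cost_if_wrong > confirm_above:
        return "confirm"     # pause and ask the user before executing
    return "auto_with_undo"  # execute, but show the step and an undo control


def preview(plan: List[Step]) -> List[Tuple[str, str]]:
    """Pair each step with its gate so the interface can show the plan up front."""
    return [(step.name, gate(step)) for step in plan]


plan = [
    Step("draft reply", reversible=True, cost_if_wrong=5.0),
    Step("update CRM record", reversible=True, cost_if_wrong=250.0),
    Step("send refund", reversible=False, cost_if_wrong=40.0),
]
for name, decision in preview(plan):
    print(f"{name}: {decision}")
```

Computing the gates before execution, rather than step by step, is what lets the interface surface state to the user before the agent is mid-flight.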

Measuring success in conversational AI design

Measuring conversational AI success in 2026 looks fundamentally different from measuring chatbot success in 2020. Containment rate and deflection were the right metrics for rule-based bots that either matched intents or fell to a fallback. LLM-powered assistants and agentic systems require different measurements because they fail in different ways, and the metrics chosen during the brief shape every downstream design decision.

Measurement is the question product teams ask us most often after a conversational AI pilot, usually after the first metrics review has produced confusing numbers. Rule-based conversational systems are measured on containment rate, the share of queries resolved without escalation, and deflection rate, the share of inbound queries the bot prevents from entering the support queue. These metrics make sense when the bot’s capability is fully defined in advance. They become misleading the moment the underlying system can generate responses to unscoped queries, because containment now silently includes confidently wrong answers users acted on without realizing the limit had been reached.
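A small worked example shows how containment can overstate success once wrong answers are counted as contained. The sketch assumes a sample of sessions has been labeled by a human reviewer; the `Session` type and the `verified_containment_rate` metric are hypothetical names introduced for illustration, not standard industry terms.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Session:
    escalated: bool                 # True if the query was handed to a human
    answer_correct: Optional[bool]  # reviewer label; None if never reviewed


def containment_rate(sessions: Sequence[Session]) -> float:
    """Share of sessions resolved without escalation, right or wrong."""
    if not sessions:
        raise ValueError("need at least one session")
    return sum(not s.escalated for s in sessions) / len(sessions)


def verified_containment_rate(sessions: Sequence[Session]) -> float:
    """Share of sessions that were contained AND reviewed as correct.

    Unreviewed sessions count as unverified, so this is a lower bound.
    """
    if not sessions:
        raise ValueError("need at least one session")
    verified = sum((not s.escalated) and s.answer_correct is True for s in sessions)
    return verified / len(sessions)


sessions = [
    Session(escalated=False, answer_correct=True),
    Session(escalated=False, answer_correct=False),  # contained but wrong
    Session(escalated=True, answer_correct=None),
    Session(escalated=False, answer_correct=True),
]
print(containment_rate(sessions))           # 0.75
print(verified_containment_rate(sessions))  # 0.5
```

The gap between the two numbers is the share of sessions where the user left without escalating but with a wrong answer.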

LLM-powered systems require quality measurements that did not exist in the rule-based era. Factuality captures whether the model’s response is accurate when the answer is verifiable, and calibration captures whether the model’s stated confidence matches its actual reliability across a sample of responses. The harder measurement is user-rated quality, the experiential dimension that quantitative metrics miss entirely. None of these can be measured from logs alone, and each requires a human evaluation loop the design must accommodate from the first sprint.
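Calibration can be quantified once a sample of responses has been graded by humans. The sketch below uses expected calibration error (ECE), a common binned measure, as one possible choice; the function name, the bin count, and the assumption that the system exposes a numeric confidence per response are illustrative rather than anything the approach above prescribes.

```python
from typing import List, Sequence, Tuple


def expected_calibration_error(
    confidences: Sequence[float],
    correct: Sequence[bool],
    n_bins: int = 10,
) -> float:
    """Weighted average gap between stated confidence and observed accuracy."""
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("inputs must be non-empty and the same length")
    if any(not 0.0 <= c <= 1.0 for c in confidences):
        raise ValueError("confidences must be in [0, 1]")

    # Group graded responses into equal-width confidence bins.
    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[index].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(conf for conf, _ in bucket) / len(bucket)
        accuracy = sum(ok for _, ok in bucket) / len(bucket)
        ece += (len(bucket) / total) * abs(avg_conf - accuracy)
    return ece


# Four answers all stated at 90% confidence, but only half were correct.
print(expected_calibration_error([0.9, 0.9, 0.9, 0.9], [True, True, False, False]))
```

A value near zero means stated confidence tracks observed accuracy; in the example the system claims 90% and delivers 50%, so the error is roughly 0.4.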

Agentic systems shift measurement again. The unit of success is no longer a response but a completed task. Multi-step completion rate, recovery rate when a step fails, and time-to-resolution across the full plan are the metrics that matter. A system that completes nine of ten steps correctly is still a failure if the tenth step is irreversible and the user did not have time to intervene. Gartner’s prediction that over 40% of agentic AI projects will be canceled by the end of 2027, discussed in the next section, reflects exactly this measurement reality.
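The difference between step-level and task-level success is easy to see in a small sketch. The `StepResult` type and the three metric functions below are hypothetical and assume each agent run is logged as an ordered list of step outcomes; time-to-resolution is left out for brevity.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class StepResult:
    ok: bool                 # succeeded on the first attempt
    recovered: bool = False  # failed first, then was retried or rolled back successfully


Run = Sequence[StepResult]


def task_completed(run: Run) -> bool:
    """A task counts as complete only if every step succeeded or was recovered."""
    return all(s.ok or s.recovered for s in run)


def completion_rate(runs: Sequence[Run]) -> float:
    if not runs:
        raise ValueError("need at least one run")
    return sum(task_completed(r) for r in runs) / len(runs)


def recovery_rate(runs: Sequence[Run]) -> float:
    failed = [s for r in runs for s in r if not s.ok]
    if not failed:
        return 1.0  # convention used here: nothing failed, so nothing was left unrecovered
    return sum(s.recovered for s in failed) / len(failed)


def step_success_rate(runs: Sequence[Run]) -> float:
    steps = [s for r in runs for s in r]
    if not steps:
        raise ValueError("need at least one step")
    return sum(s.ok or s.recovered for s in steps) / len(steps)


runs: List[Run] = [
    [StepResult(True)] * 3,
    [StepResult(True), StepResult(False, recovered=True), StepResult(True)],
    [StepResult(True)] * 9 + [StepResult(False)],  # nine good steps, one unrecovered failure
]
print(step_success_rate(runs), completion_rate(runs), recovery_rate(runs))
```

In this sample, 15 of 16 steps end well, yet only two of three tasks complete, which is the gap that step-level dashboards hide.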

Why most enterprise conversational AI deployments fail

Most enterprise conversational AI deployments fail not because the model is wrong, but because the interface does not match the architecture. Gartner predicts over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls. The interface is usually where those risks first become visible to users, and where they could have been prevented if the design had been calibrated to the architecture.

The first failure mode is over-trust. A confident-sounding interface combined with an LLM that occasionally hallucinates produces users who accept wrong answers because the design did not signal uncertainty. The damage is highest in regulated contexts: legal research, clinical decision support, and financial advice. A disclaimer at the bottom of every response does not stop a user from acting on a confident, wrong answer. The fix is calibration in the response itself, not warnings around it.

The second failure mode is scope confusion. Users do not know what the system can answer, so they either ask too narrowly and miss capability or too broadly and get fabrications. The interface should surface scope before the first message, not after the user has hit the limit twice and given up. In our experience, three named, domain-scoped example queries drive adoption more than any onboarding tutorial. Scope clarity is what lets users self-select into queries the system handles well, which protects both adoption and brand trust.

The third failure mode is what Gartner calls agent washing: vendors rebranding RPA tools, chatbots, and AI assistants as agentic without the underlying capability. The downstream design problem is that buyers commission UX for an “agent” that is actually a chatbot dressed up in different clothing. The result is a product that looks autonomous in the demo and breaks the first time a user asks for an action that the system cannot actually take. In the pitches we have reviewed, this gap between what a vendor calls an “agent” and what the underlying system actually does is the single most common source of wasted design budget in 2026. Verifying the architecture before commissioning the design avoids this entire category of failure.

Conversational AI in regulated industries

Conversational AI in regulated industries follows different rules from consumer chatbot design. HIPAA constrains what data can appear in conversation logs. Section 508 requires conversational interfaces to work with screen readers and assistive technology. SEC and FDA frameworks limit what an AI system can advise without explicit human oversight. The design implications shift across eras of conversational AI, and the regulatory cost of getting the design wrong scales with the domain’s stakes. This is no walk in the park, even for the most skilled teams.

Healthcare conversational AI faces three constraints simultaneously: HIPAA-protected health information cannot appear in conversation logs without strict controls, HL7 and FHIR data structures shape what the system can reliably retrieve from clinical records, and clinical decision support delivered through dialogue triggers FDA considerations depending on how directive the recommendations are. In the ClyHealth clinical AI work, the interface surfaces a single recommendation per workflow step rather than a ranked list. That design choice was partly regulatory: limiting the system’s apparent authority over clinical decisions reduces friction when operating within FDA guidance for advisory tools.

Government conversational AI must account for Section 508 accessibility from the first conversation flow, not as a remediation layer added after launch. Screen reader compatibility means message structure, semantic markup, and turn-taking signals work without visual cues. Audit trail requirements mean every conversation, including the model’s confidence at each turn, must be reconstructable months after the interaction. These constraints make rule-based and LLM-powered systems easier to deploy in government than agentic systems, where multi-step action sequences create accountability questions that current frameworks were not written to answer.
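The audit trail requirement can be illustrated with an append-only log of per-turn records. This is a sketch under stated assumptions, not a compliance recipe: the `TurnRecord` fields, the JSON-lines format, and the function names are hypothetical, and a real deployment would also need retention, access control, and redaction rules that are out of scope here.

```python
import json
from dataclasses import asdict, dataclass
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class TurnRecord:
    conversation_id: str
    turn_index: int
    role: str                    # "user" or "assistant"
    text: str
    confidence: Optional[float]  # model confidence for assistant turns, None for user turns
    model_version: str
    timestamp_utc: str           # ISO 8601 string


def to_log_line(record: TurnRecord) -> str:
    """Serialize one turn as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


def reconstruct(lines: Iterable[str], conversation_id: str) -> List[TurnRecord]:
    """Rebuild one conversation, in turn order, from unordered log lines."""
    records = [TurnRecord(**json.loads(line)) for line in lines]
    return sorted(
        (r for r in records if r.conversation_id == conversation_id),
        key=lambda r: r.turn_index,
    )


user_turn = TurnRecord("c1", 0, "user", "Where is my permit?", None, "m-1", "2026-01-05T10:00:00Z")
bot_turn = TurnRecord("c1", 1, "assistant", "It is under review.", 0.72, "m-1", "2026-01-05T10:00:02Z")
log = [to_log_line(bot_turn), to_log_line(user_turn)]  # stored out of order
for record in reconstruct(log, "c1"):
    print(record.turn_index, record.role, record.confidence)
```

Storing the model version and confidence with every turn is what makes the conversation reconstructable later, since the model itself may have changed by the time an audit happens.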

Financial conversational AI faces a different set of constraints: SEC disclosure requirements for any system that provides investment recommendations, suitability rules governing personalized financial advice, and SOC 2 controls on the data flowing through the conversation. Citation and source attribution become first-order design features in finance because users must verify the basis for any recommendation before acting on it. The challenge is clutter: too many citations in a single response can create more confusion than they resolve. The Stardog Voicebox interface mentioned earlier illustrates how citation trails work in practice when the underlying data structure supports them, which is the foundation that regulated financial AI design depends on.

How to decide whether your product should use conversational AI at all

Conversational AI fits products where users have ambiguous queries with no fixed schema, where structured UI would require dozens of filters, or where the domain knowledge that resolves the query is too vast to predefine. It does not fit products where users have clear schemas, where consistency across runs matters, or where the cost of a wrong answer is high. The selection comes before any design pattern decision.

Use conversational AI when the task has open-ended exploration of a large or unstructured dataset, when users do not know the right vocabulary to find what they need, or when most queries fall into a long tail no structured navigation could practically cover. Customer support over a deep knowledge base is the canonical case. Internal search across enterprise documents is another. So is data exploration where the user knows what question they want answered but not how to phrase it in a query language.

The same approach fails on tasks with fixed schemas, auditable actions, or consistency requirements that demand the same answer to the same question every time. Compliance work, structured data entry, and most regulated workflows fall in this category. A conversational interface adds friction to these tasks rather than value, and design should match the task structure, not the technology trend driving the procurement cycle.

The combined model is where most enterprise products land. A structured interface handles the predictable majority of the workflow, and a conversational surface handles the ambiguous minority. The combination works when each surface has a clear scope and the user can tell which they are using. A typical engagement at Fuselab begins with a workflow audit that establishes which interactions belong in each bucket before any AI chat interface design work begins. Skipping that step is how products end up with a chatbot bolted onto a workflow that did not need one.

The architecture decision precedes the design decision

The conversational AI problem in 2026 is not whether to use the technology but which architecture to design for. The team that picks rule-based patterns for an LLM-powered product, or LLM patterns for an agent, will ship something that demos well and fails in production. The architecture decision comes first, and the AI design decisions follow from it. In the projects we have audited where adoption stalled, the diagnosis almost always traced back to that one mismatch. The team that gets that order right ships products that work in production. The team that doesn’t ships demos.

What is conversational AI design?

Conversational AI design is the practice of building interfaces where users interact with a machine learning system through natural-language dialogue. The discipline covers confidence display, recovery patterns, fallback behavior, and capability transparency, and it now spans rule-based chatbots, LLM-powered assistants, and agentic systems as distinct architectural categories with distinct design requirements.

What does machine learning add to conversational AI?

Machine learning makes the system’s outputs probabilistic rather than deterministic, which means designers must communicate confidence, handle generation failures gracefully, and surface scope to users who cannot otherwise tell what the system can reliably do. Rule-based conversational interfaces did not face these problems because every response was explicitly programmed, and the interface signaled capability through structure rather than calibration.

How is LLM-powered conversational AI different from a rule-based chatbot?

LLM-powered conversational AI generates responses from learned patterns and can answer queries no one explicitly programmed. Rule-based chatbots only respond to predefined intents and fall to a fallback message outside that scope. The design implications differ sharply: rule-based interfaces communicate capability through structure, while LLM interfaces must communicate confidence and uncertainty in every response.

What is the difference between conversational AI and agentic AI?

Conversational AI focuses on dialogue between a user and a machine learning system. Agentic AI plans and executes multi-step tasks across tools, often without a human in the loop for each step. Most agentic systems include a conversational interface, but the design problem extends beyond dialogue into action surfaces, reversibility controls, and mid-execution state display that no chatbot ever needed.

Do I need a designer who has worked with LLMs specifically?

Yes, if the product runs on an LLM. Designers who have only worked on rule-based chatbots will default to constraint-based patterns that waste the capability of an LLM and frustrate users who can sense more is possible. The reverse mismatch is just as costly. Hiring criteria should be specific: at least one shipped product on the same architecture being deployed, not a generic portfolio of chat UI work.

How long does a conversational AI project take?

Conversational AI projects typically run eight to sixteen weeks from kickoff to design handoff, with most of the time spent on conversation flows, edge-case handling, and confidence display patterns rather than visual surface. Agentic systems require longer engagements because the design surface includes both dialogue and action sequences across multiple tools or integrations, and the testing surface is larger.

What should I look for in a conversational AI design agency?

A conversational AI design agency should be able to point to at least one shipped product where the agency designed the conversation patterns, confidence display, and recovery logic for a machine-learning-powered system, not only the visual surface of a chat window. The portfolio matters more than the deck. Agencies without a named conversational AI project in their work have done chatbot UI, which is a different and narrower discipline.