Heuristic Evaluation in UX: Complete Guide to Expert Usability Review

A heuristic evaluation is essentially an expert review of a product’s usability. Instead of waiting for real users to struggle with an interface, experienced UX practitioners systematically inspect it using established usability principles. The goal is simple: identify design problems early, quickly, and efficiently before they become expensive mistakes.

Let’s take a stab at explaining our process with a real-world example: an ultra-modern luxury hotel!

You walk into a stunning lobby, all marble floors, ambient lighting, and expensively scented candles that welcome you, along with the smiling face of an immaculately dressed doorman. The room is gorgeous, like something straight out of an interior design magazine cover.

But as you start settling down, certain things begin to annoy you. You want to turn off the lights to sleep, but instead of a simple switch, you are left staring at a complex touch panel with fifteen buttons. You step into the shower, and the ultra-sleek faucet has no “Hot” or “Cold” markings, so you spend five minutes alternately freezing and scalding yourself. The toilet is straight out of a futuristic Japanese dream. You get the picture: lovely to look at but not so comfortable to live in!

This is exactly what happens in the digital world every day. Companies invest millions in branding, high-res imagery, and marketing hype. But when the customer actually tries to use the app or website, they find themselves fumbling in the dark.

In the early days of the web, usability studies routinely found that over 70% of interfaces contained serious usability problems that prevented users from completing tasks. What’s surprising is not that these issues existed, but that most of them were obvious once someone with experience looked closely enough. That insight led to one of the most powerful techniques in UX design today: heuristic evaluation.

In this guide, we will explore:

- What is heuristic evaluation?

- Heuristic evaluation vs. usability testing

- 10 usability heuristics for interface design

- When to conduct a heuristic evaluation

- Benefits and advantages of heuristic evaluation

- How to conduct a heuristic evaluation: A step-by-step guide

- Best practices for effective heuristic evaluation

- Common mistakes to avoid

- Tools and templates for heuristic evaluation

- Real-world heuristic evaluation examples

What is Heuristic Evaluation?

At its core, a Heuristic Evaluation is a systematic inspection of a user interface to find usability problems. Notice the word systematic. It is not just experts clicking around and saying, “I don’t like this button.” Instead, they measure the interface against a set of established, time-tested principles – heuristics.

In a typical heuristic evaluation, several UX experts independently examine the product and compare its design to a set of heuristics, guidelines derived from decades of usability research. These heuristics serve as a checklist, helping evaluators systematically assess whether the design supports good usability. When possible, we conduct these evaluations in person to better understand the user’s entire environment, not just their reactions to the platform.

Think of it like a building inspector walking through a house. When you construct a house, you don’t wait for residents to move in before checking whether the wiring is safe or the plumbing works. Inspectors follow a set of standards to identify problems before granting permission for people to enter. Heuristic evaluation works the same way for digital products.

The method was originally introduced by Jakob Nielsen and Rolf Molich in 1990. Their work formalized the idea that experienced evaluators could reliably identify usability problems by inspecting interfaces against a small set of usability heuristics, which Nielsen later refined into the Ten Usability Heuristics.

A well-conducted heuristic evaluation can uncover dozens of usability issues in just a few hours. Because the evaluation relies on expert knowledge rather than recruiting participants, it can be performed quickly and repeatedly during the design process. Evaluators usually look for issues such as:

- Confusing navigation

- Poor feedback from the system

- Inconsistent design patterns

- Error-prone interactions

- Missing guidance for users

Heuristic evaluation remains one of the most widely used methods in UX evaluation. Design teams use it during early prototyping, design reviews, product audits, and even competitor analysis.

Definition and Purpose

A heuristic evaluation is a method in which usability experts examine a product’s interface and assess its compliance with recognized usability principles, known as heuristics.

The word heuristic comes from the idea of a rule of thumb, a practical guideline derived from experience. So when we talk about heuristic evaluation, we are really talking about a systematic expert review guided by these principles.

The primary purpose of heuristic evaluation is to identify usability problems quickly and cost-effectively. Instead of conducting large-scale user testing sessions, teams can rely on expert evaluators who understand how users interact with interfaces and where common problems typically arise.

What’s important to understand is that heuristic evaluation is not a casual critique session. It’s a structured methodology. Evaluators review screens systematically, map each issue to a violated heuristic, and assign severity ratings to prioritize fixes.

The result is a clear list of usability problems, along with recommendations for improving the design.

History and Origins

Heuristic evaluation is rooted in the work of Jakob Nielsen and Rolf Molich, who developed the framework in 1990. At a time when software was becoming increasingly complex and lab-based usability testing demanded significant time and budget, they saw the need for a “discount” approach to usability evaluation that didn’t sacrifice quality: a faster method that still produced meaningful insights.

The method saw a significant refinement in 1994, when Nielsen narrowed the principles down to the famous “10 Usability Heuristics” based on a factor analysis of 249 usability problems. Since then, the Nielsen Norman Group, which continues to publish guidance on usability evaluation methods, has kept these principles relevant as interfaces moved from desktops to mobile and beyond. Today, the method is a widely adopted industry standard, used by startups and Fortune 500 companies alike to ground their digital interfaces in validated research.

Heuristic Evaluation vs Usability Testing

In the design world, people often use the terms “Heuristic Evaluation” and “Usability Testing” interchangeably. Often, clients are unfamiliar with the term heuristic evaluation altogether and mistake it for usability testing! While both are essential tools for a systematic inspection of a product, they serve very different purposes and produce very different types of data.

Think of it like building a bridge. Heuristic Evaluation is an expert review. It is the structural engineer who comes in with a clipboard to check whether the steel is high-grade, whether the bolts are torqued per code, etc. In the UX world, these are experienced evaluators who inspect an interface against usability principles to identify potential design problems.

Usability Testing, on the other hand, is the group of commuters who actually drive their cars across the bridge for the first time. In the UX context, this involves observing real users as they attempt to complete tasks with the product. Instead of experts predicting problems, the team watches users interact with the system directly.

Both approaches uncover different kinds of problems.

A heuristic evaluation tends to identify predictable usability violations, things like inconsistent navigation, missing feedback, unclear labels, or error-prone workflows. Because it relies on expert knowledge, it’s extremely efficient during early design stages.

Usability testing, however, reveals the unexpected. Real users often behave in ways designers never anticipate. They take unusual paths through an interface, or abandon tasks entirely for reasons that aren’t obvious during expert review.

When used together, they form one of the most powerful combinations in UX research and product design.

10 Usability Heuristics for Interface Design

When people talk about heuristic evaluation, they are usually talking about a specific framework: the 10 Usability Heuristics introduced by Jakob Nielsen in 1994.

They are NOT strict rules; rather, they are practical design principles that help identify usability issues in digital interfaces.

Since their introduction, Nielsen’s heuristics have become one of the most widely used frameworks in UX design. Teams across industries, from fintech startups to healthcare platforms, rely on them to guide heuristic evaluation and improve usability.

The reason for the appeal of Nielsen’s heuristics is simple: they reflect recurring patterns in human behavior and are rooted in how the human brain perceives and processes information. When interfaces violate these principles, users are likely to struggle when interacting with them.

In practice, evaluators conducting a heuristic evaluation inspect each screen of an interface and check whether the design aligns with these principles. If a screen violates one of the heuristics, for example, by hiding critical information or requiring users to remember unnecessary information, it is flagged as a usability issue.

Heuristic evaluation covers the entire spectrum of interaction design – system feedback, user control, consistency, error handling, efficiency, and support – for all users.

Before we dive deeper into the method of heuristic evaluation itself, it’s important to understand these principles. They form the backbone of almost every UX evaluation process used today.

The ten heuristics include:

- Visibility of system status

- Match between the system and the real world

- User control and freedom

- Consistency and standards

- Error prevention

- Recognition rather than recall

- Flexibility and efficiency of use

- Aesthetic and minimalist design

- Help users recognize, diagnose, and recover from errors

- Help and documentation

By applying these heuristics, we can systematically identify design flaws that lead to user frustration. Whether ensuring the visibility of system status or maintaining consistency and standards, these principles serve as a safety net. In the following sections, let’s explore them in two groups: the core heuristics that govern interaction clarity, and the advanced heuristics that refine efficiency and usability.

Core Heuristics

The first five heuristics focus on the fundamentals of interaction design. They ensure that systems communicate clearly, behave predictably, and prevent usability problems before they occur.

Visibility of System Status

The system should always keep users informed about what is happening through appropriate feedback within a reasonable time. Example: A progress bar during a file upload tells the user exactly how long they need to wait.

When systems remain silent, users feel uncertain and may assume something is broken. A classic example: an upload button without a progress indicator leads users to click multiple times, resulting in duplicate uploads.

Match Between System and the Real World

Systems should use familiar concepts, phrases, and workflows that mirror how people think in the real world, and avoid technical jargon. For example, an e-commerce site would use a Shopping Cart icon instead of a Database Entry Bin. Similarly, a banking app should use terms like “transfer” and “balance” rather than exposing internal system terminology such as “fund allocation process.”

User Control and Freedom

Users often choose system functions by mistake and need a clearly marked emergency exit to leave and get back on track. Example: Providing a simple Undo or Cancel button after an accidental deletion.

This heuristic emphasizes undo, redo, and cancel options, and easy navigation paths, without which users might feel trapped.

Consistency and Standards

When elements behave differently across screens, for example, if a button changes position or icons mean different things, users get confused and waste time constantly relearning the interface.

Following platform standards to keep designs predictable is a core heuristic. Users expect certain conventions, such as a magnifying glass icon to open search, a trash can icon to delete, or a hamburger menu to open navigation.

Error Prevention

Even better than good error messages is a careful design that prevents a problem from surfacing in the first place. For example, a travel site can gray out past dates in a calendar so a user cannot select an invalid flight day. Other common examples are validating forms before submission and showing password requirements in advance. Rather than allowing mistakes and showing error messages afterward, interfaces should guide users toward correct actions from the start.
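The calendar example can be sketched in a few lines. This is a minimal illustration, not a production date picker: the function names `is_selectable` and `calendar_states`, the state labels, and the sample date are all assumptions made for the example.

```python
from datetime import date, timedelta
from typing import Dict, Iterable


def is_selectable(day: date, today: date) -> bool:
    """A flight day is valid only if it is today or later."""
    return day >= today


def calendar_states(days: Iterable[date], today: date) -> Dict[date, str]:
    """Map each calendar day to the state the UI should render."""
    return {
        d: "enabled" if is_selectable(d, today) else "disabled"
        for d in days
    }


# Usage: render a week that straddles a hypothetical "today".
today = date(2024, 5, 15)
week = [today + timedelta(days=offset) for offset in range(-3, 4)]
for day, state in calendar_states(week, today).items():
    print(day.isoformat(), state)
```

Because invalid days are never offered as a choice, there is no error state to explain afterward, which is the point of the heuristic.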

Advanced Heuristics

The remaining five heuristics address efficiency and how well the system supports users in more complex situations, such as recovering from errors and finding help.

Recognition Rather Than Recall

Interfaces should minimize the amount of information users must remember. The user should not have to remember information from one part of the dialogue to another. For example, showing a list of recently used files is far easier than forcing users to remember filenames.

During heuristic evaluation, recall-heavy systems often appear in complex enterprise tools where users are expected to remember command structures.

Flexibility and Efficiency of Use

Great systems work well for both beginners and experts. This heuristic encourages design patterns that support efficiency for advanced users while remaining accessible to newcomers.

Examples include keyboard shortcuts, saved preferences, custom dashboards, or automation features.

In many SaaS platforms, power users crave shortcuts that reduce repetitive tasks. Adding these features dramatically improves productivity and usability.

Aesthetic and Minimalist Design

Interfaces should present only the information users actually need. When screens are cluttered with unnecessary content, cognitive load increases and decision-making slows. A good example would be a clean, white-space-driven homepage that focuses only on the primary Call to Action.

A good heuristic evaluation often reveals dashboards overloaded with widgets, menus filled with rarely used options, and forms requesting excessive information.

Help Users Recognize, Diagnose, and Recover from Errors

Error messages should be expressed in plain language, precisely indicate the problem, and suggest a solution. For example, instead of a bare error code such as “Error 404,” the message can say, “Your payment failed because the card expired. Please update your payment details.” The second version tells the user what went wrong and how to fix it.

During heuristic evaluation, vague or technical error messages frequently emerge as usability issues.
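One way to keep error messages consistent is to store the problem and the suggested next step together, so a message cannot ship with only half of the pair. The sketch below assumes hypothetical internal error codes (`card_expired`, `file_too_large`) and a hypothetical `explain` helper; only the expired-card wording comes from the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UserMessage:
    problem: str    # what went wrong, in plain language
    next_step: str  # what the user can do about it

    def render(self) -> str:
        return f"{self.problem} {self.next_step}"


# Hypothetical internal codes mapped to user-facing wording.
MESSAGES = {
    "card_expired": UserMessage(
        "Your payment failed because the card expired.",
        "Please update your payment details.",
    ),
    "file_too_large": UserMessage(
        "Your file is larger than the upload limit.",
        "Please choose a smaller file.",
    ),
}

# Used when a code has no tailored message yet.
FALLBACK = UserMessage(
    "We couldn't complete your request.",
    "Please try again, or contact support if the problem continues.",
)


def explain(code: str) -> str:
    """Return a plain-language message for an internal error code."""
    return MESSAGES.get(code, FALLBACK).render()


print(explain("card_expired"))
```

During an evaluation, any code that falls through to the fallback message is a candidate finding under this heuristic.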

Help and Documentation

Ideally, systems should be intuitive enough that users rarely need help. But when assistance is required, it should be easy to access and easy to understand.

Effective help systems include searchable documentation, tooltips, onboarding guides, and interactive walkthroughs. The best documentation answers questions exactly when the user needs them. In modern product design, this often takes the form of contextual help integrated directly into the interface.

When to Conduct Heuristic Evaluation

Think of heuristic evaluation like routine health checkups for a product’s usability. The earlier problems are discovered, the easier they are to fix. You can schedule a heuristic evaluation:

Early in the Design Process: A quick evaluation of early designs can reveal issues like confusing navigation, missing feedback, or poor workflow logic.

Before Usability Testing: Another ideal moment for heuristic evaluation is right before conducting usability testing with real users. Expert reviews can identify and resolve obvious usability issues, allowing real user sessions to focus on deeper behavioral insights.

Before Product Launch: Many teams run a heuristic evaluation shortly before release to catch last-minute usability issues that slipped through development.

After Major Design Changes: Whenever a product undergoes significant updates such as a redesigned dashboard, a new checkout flow, or a major feature release, it’s wise to run another heuristic evaluation. An expert review helps ensure that improvements in one area don’t create confusion elsewhere.

As Part of Regular UX Audits: Many organizations conduct periodic usability reviews as part of product maintenance.

During Competitor Analysis: Heuristic evaluation can also be used to analyze competitor products. It can often reveal not only usability weaknesses but also opportunities where your product can outperform others in design and usability.

When Time or Budget Limits User Testing: Sometimes, recruiting users for formal testing isn’t feasible. In these cases, heuristic evaluation offers a practical alternative for quickly identifying usability problems.

For Legacy System Assessment: Many organizations rely on older software systems, and running a heuristic evaluation on these systems often reveals extensive usability issues that can guide redesign priorities.

Benefits and Advantages of Heuristic Evaluation

Cost-effective: For any business, the primary appeal of a heuristic evaluation is its incredible cost-effectiveness. Because no user recruitment is required, you eliminate the overhead of incentives and the logistics of scheduling.

Fast: You get results extremely quickly, often within hours or days rather than the weeks required for lab-based studies.

Leverage expert insights: UX experts recognize patterns in usability problems that inexperienced teams might miss. They have seen similar design failures across many products and can quickly identify risky interaction patterns. In many cases, the evaluation not only identifies problems but also suggests practical solutions.

Shorter development cycles: Early detection of design flaws means you catch issues before development, saving costly redesigns later in the product lifecycle.

Flexible: Heuristic evaluation can be applied at almost any stage of product development. Teams use it to evaluate wireframes, prototypes, live products, and even competitor interfaces. The flexibility of the evaluation method makes it useful across different contexts and industries.

Systematic approach: While a casual observer might miss subtle friction points, an expert review provides comprehensive coverage of the entire interface. The numbers bear this out: according to Nielsen’s research, a team of three to five evaluators can catch up to roughly 75% of usability problems, a large gain in quality at a fraction of the cost of lab testing.

Of course, like any method, it has limitations. Expert reviews cannot fully replace insights gained from observing real users. But when combined with user testing, heuristic evaluation becomes an extremely powerful tool for improving UX design.

How to Conduct Heuristic Evaluation: Step-by-Step Guide

Knowing the theory behind heuristic evaluation is useful, but the real value comes from applying it systematically. While every product is different, the evaluation process tends to follow the same structure.

A typical heuristic evaluation takes anywhere from a few days to two weeks, depending on the product’s complexity. It isn’t a race; it is a systematic deep dive. The process involves multiple evaluators, independent analysis, structured documentation, and a collaborative synthesis of findings.

Following a step-by-step approach ensures that the evaluation team delivers a consolidated findings report that actually moves the needle on product quality.

What makes the method powerful is that it balances independent expert judgment with structured collaboration. Each evaluator brings their own perspective, and when those insights are combined, the usability issues become much clearer.

Below is a practical step-by-step framework that UX teams can follow to run an effective heuristic evaluation.

Step 1: Prepare and Plan

We start by setting the scope, specifying which interface or features you will evaluate. A narrow scope ensures evaluators stay focused.

Next, we choose the heuristics that will guide the review. Most teams rely on Jakob Nielsen’s ‘10 Usability Heuristics’, but some domains, such as mobile apps or accessibility-related products, may benefit from specialized heuristic sets.

After this, we define typical user tasks or scenarios that the evaluators must test. We prepare the necessary materials (static designs, interactive prototypes, or the live product). Finally, we establish a timeline and deliverables, decide how findings will be documented, and what the final report should include.

It is also important to identify the target user groups whose tasks and scenarios the evaluators will walk through.

Step 2: Assemble Evaluation Team

One of the most important decisions in a heuristic evaluation is selecting the right evaluators.

According to Jakob Nielsen’s research, the optimal team size is 3-5 evaluators. A single evaluator usually catches about 35% of the usability problems, but a group of five can catch roughly 75%.
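To see why adding evaluators yields diminishing returns, here is a minimal sketch of a simplified coverage model. It assumes every evaluator independently finds each problem with the same probability, which is an illustrative assumption and not the source of the figures quoted above. The function name `expected_coverage` and the default rate of 0.35 are chosen for the example.

```python
def expected_coverage(evaluators: int, detection_rate: float = 0.35) -> float:
    """Share of problems found by at least one evaluator.

    Assumes each evaluator finds any given problem independently
    with probability `detection_rate` (a simplification).
    """
    if evaluators < 0:
        raise ValueError("evaluators must be non-negative")
    if not 0.0 <= detection_rate <= 1.0:
        raise ValueError("detection_rate must be between 0 and 1")
    # A problem is missed only if every evaluator misses it.
    return 1.0 - (1.0 - detection_rate) ** evaluators


for n in range(1, 8):
    print(f"{n} evaluator(s): {expected_coverage(n):.0%}")
```

Under this idealized assumption, five evaluators come out higher than the roughly 75% quoted above. Real problems differ in how hard they are to spot, so several evaluators tend to find the same easy ones. The shape of the curve is the useful part: each extra evaluator adds less than the one before.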

The evaluators should ideally have:

- A solid understanding of UX and usability principles

- Familiarity with the selected heuristics

- Some knowledge of the product domain

Diversity among evaluators can also be beneficial. Designers, UX researchers, and product strategists may notice different kinds of problems.

One critical rule: evaluators must review the interface independently at first. Independent inspection prevents groupthink and ensures each evaluator contributes unique insights.

Step 3: Brief the Team

Before the evaluation begins, all evaluators should attend a short briefing session. The goal of the briefing is to make sure everyone is on the same page. During the briefing, the team typically reviews:

- The selected heuristics guiding the evaluation

- The product context and user groups

- The scenarios or tasks that evaluators will explore

- The severity rating system

- The documentation format for recording issues

This step ensures everyone evaluates the interface using the same framework. It is also the moment to answer any questions the evaluators may have and to clarify expectations.

Step 4: Conduct Independent Evaluations

This is the actual evaluation stage! Each evaluator independently inspects the interface while walking through the defined scenarios. They carefully examine screens and identify where the design violates established heuristics or causes usability issues.

Every issue should be documented with clear details:

- What the problem is

- Where it occurs in the interface

- Which heuristic is violated

- An initial severity rating

- The potential impact on the user

- Screenshots or examples, if possible

Typically, an independent evaluation session lasts one to two hours per evaluator, depending on the product’s complexity. Importantly, evaluators should not yet discuss their findings with each other. This independence is crucial for uncovering the widest range of usability issues.
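The documentation fields listed above can be captured in a small record type so that every evaluator logs issues in the same shape. This is a sketch under stated assumptions: the `Finding` class, its field names, and the sample values are hypothetical, and the heuristic names are the ten listed earlier in this guide.

```python
from dataclasses import dataclass, field
from typing import List

HEURISTICS = (
    "Visibility of system status",
    "Match between the system and the real world",
    "User control and freedom",
    "Consistency and standards",
    "Error prevention",
    "Recognition rather than recall",
    "Flexibility and efficiency of use",
    "Aesthetic and minimalist design",
    "Help users recognize, diagnose, and recover from errors",
    "Help and documentation",
)


@dataclass
class Finding:
    evaluator: str    # who logged the issue
    description: str  # what the problem is
    location: str     # where it occurs in the interface
    heuristic: str    # which heuristic is violated
    severity: int     # initial rating on the 0-4 scale
    impact: str       # potential impact on the user
    screenshots: List[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject entries that would be ambiguous during consolidation.
        if self.heuristic not in HEURISTICS:
            raise ValueError(f"unknown heuristic: {self.heuristic!r}")
        if self.severity not in range(5):
            raise ValueError("severity must be an integer from 0 to 4")


example = Finding(
    evaluator="Evaluator A",
    description="No progress indicator during file upload",
    location="Upload dialog",
    heuristic="Visibility of system status",
    severity=3,
    impact="Users click repeatedly and create duplicate uploads",
)
print(example)
```

Validating the heuristic name and severity at entry time keeps later steps from having to reconcile free-text variations.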

Step 5: Rate Severity

Once issues are documented, we assign each a severity rating. The scale helps the business decide which problems need immediate attention and which can wait, so resources go where they matter most. Evaluators first rate issues individually so that the final priority reflects a broad consensus rather than one person’s view.

Severity ratings range from 0 to 4:

- 0 = Not a usability problem

- 1 = Cosmetic issue

- 2 = Minor usability problem

- 3 = Major usability problem

- 4 = Usability catastrophe

When rating severity, evaluators consider several factors:

- Frequency: how often users will encounter the issue

- Impact: how disruptive the problem is to completing tasks

- Persistence: whether the issue will repeatedly affect users
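The 0 to 4 scale maps naturally onto an enumeration. The sketch below also shows one possible way to combine independent ratings into a starting point for discussion, using the median rounded up. That combining rule, and the names `Severity` and `consensus_severity`, are assumptions for illustration; the team's discussion still decides the final rating.

```python
import math
from enum import IntEnum
from statistics import median
from typing import Sequence


class Severity(IntEnum):
    NOT_A_PROBLEM = 0
    COSMETIC = 1
    MINOR = 2
    MAJOR = 3
    CATASTROPHE = 4


def consensus_severity(ratings: Sequence[int]) -> Severity:
    """Combine independent ratings: median, rounded up when it falls between two levels."""
    if not ratings:
        raise ValueError("at least one rating is required")
    return Severity(math.ceil(median(ratings)))


# Three evaluators rated the same issue independently.
ratings = [Severity.MINOR, Severity.MAJOR, Severity.CATASTROPHE]
print(consensus_severity(ratings).name)
```

The median keeps a single outlier from dominating. Rounding up errs on the side of treating an issue as more serious when evaluators are split.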

Step 6: Consolidate Findings

After independent reviews are complete, evaluators come together for a consolidation session. This is the collaborative step that adds the most value.

During the meeting, each evaluator presents their findings, and the group identifies which issues appear across multiple evaluations. Issues flagged independently by several evaluators are often among the most serious usability problems. The team combines duplicate findings, discusses severity ratings, groups issues into clusters, and finally prioritizes usability problems.

This collaborative discussion adds depth to the evaluation, as different evaluators may interpret problems in slightly different ways. By the end of the session, the team produces a master list of validated usability issues.
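The mechanical part of consolidation (merging duplicates and ordering the master list) can be sketched as follows. This is a rough illustration under stated assumptions: two findings count as duplicates when they share a location and a heuristic, and the provisional severity is the highest individual rating. Both rules and all names here are hypothetical. In practice the team decides what counts as the same issue.

```python
from collections import defaultdict
from typing import Dict, Iterable, List


def consolidate(findings: Iterable[Dict]) -> List[Dict]:
    """Merge findings that share a location and heuristic into a master list."""
    groups = defaultdict(list)
    for f in findings:
        key = (f["location"].strip().lower(), f["heuristic"].strip().lower())
        groups[key].append(f)

    master = []
    for items in groups.values():
        master.append({
            "location": items[0]["location"],
            "heuristic": items[0]["heuristic"],
            "descriptions": [i["description"] for i in items],
            "reported_by": sorted({i["evaluator"] for i in items}),
            # Provisional only: the group discussion sets the final rating.
            "severity": max(i["severity"] for i in items),
        })

    # Most severe first; ties broken by how many evaluators reported the issue.
    master.sort(key=lambda m: (-m["severity"], -len(m["reported_by"])))
    return master


findings = [
    {"evaluator": "Ana", "location": "Checkout", "heuristic": "Visibility of system status",
     "description": "No progress indicator", "severity": 3},
    {"evaluator": "Ben", "location": "checkout", "heuristic": "Visibility of system status",
     "description": "Unclear which step I am on", "severity": 4},
    {"evaluator": "Ana", "location": "Settings", "heuristic": "Consistency and standards",
     "description": "Save button moves between tabs", "severity": 2},
]
for issue in consolidate(findings):
    print(issue["severity"], issue["location"], issue["reported_by"])
```

Keeping every original description alongside the merged entry preserves the different interpretations that the discussion is meant to surface.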

Step 7: Report and Recommend

The final step of heuristic evaluation is to translate the findings into a clear and actionable report. A strong usability report typically includes:

- An executive summary spotlighting major findings

- A prioritized list of usability problems

- Detailed descriptions of each issue

- The violated heuristic principle

- Severity ratings

- Screenshots or examples

- Recommended design solutions

The key here is to present clear recommendations and solutions as well – that’s where the evaluators’ expertise really shines through and sets heuristic evaluation apart from tool-based or automated reports.

Once completed, the report should be presented to stakeholders, including product managers, designers, and developers. This ensures the findings influence future design decisions and improvements to overall usability.

Best Practices for Effective Heuristic Evaluation

To get the most value from a heuristic evaluation, one must look beyond the checklist and focus on the quality of execution. The method itself is simple. At Fuselab Creative, we find that the difference between a mediocre audit and a transformative one lies in how rigorously the evaluation is conducted. These are some of the best practices we follow:

Use Multiple Evaluators: Research by Jakob Nielsen shows that 3–5 evaluators strike the right balance between effort and coverage. Multiple perspectives reveal a broader range of usability issues.

Ensure Independent Evaluation First: If evaluators discuss the interface before completing their individual reviews, the process becomes biased. Always conduct independent evaluations before any group discussion.

Choose the Right Heuristics for Your Context: While Nielsen’s heuristics are widely applicable, some products benefit from tailored frameworks. Adapting the heuristic evaluation to your product context improves relevance and depth.

Focus on Real User Tasks: Anchor the evaluation on specific user scenarios, such as onboarding and completing a purchase.

Document Clearly: Ensure that every usability issue is recorded consistently, including a description of the problem, its location, the violated heuristic, a severity rating, and a suggested solution.

Rate Severity Objectively: A disciplined severity framework ensures that design efforts focus on the most impactful usability problems first.

Support Findings with Evidence: Whenever possible, include screenshots, flows, or examples. Visual evidence makes findings easier to understand.

Provide Actionable Recommendations: Every heuristic evaluation should go beyond identifying usability issues and propose practical design improvements.

Combine with Other UX Methods: Don’t treat heuristic evaluation as a standalone method. It works best when paired with other research methods, such as analytics data or usability testing.

Follow Up After Fixes: After implementing changes, run a follow-up evaluation or usability test to confirm the issues have been resolved.

Common Mistakes to Avoid

Even though heuristic evaluation is straightforward in theory, teams often make mistakes that undermine its effectiveness. Here are some that are most common:

- Using only one evaluator is the most common mistake we have seen in this field. A single evaluator cannot identify enough usability issues and has a single perspective.

- Allowing early discussion amongst evaluators can lead to groupthink, where opinions converge prematurely.

- Using the wrong heuristics, for example, evaluating a healthcare platform without considering accessibility or compliance requirements, can overlook critical usability issues.

- Writing vague problem descriptions, such as “this is confusing” or “bad UX,” reduces the value of the exercise. Every usability issue should be clearly defined, with context and explanation.

- Assigning incorrect severity ratings or failing to prioritize usability issues, which leaves teams unsure what to fix first.

- Not following up after the evaluation to ensure changes are implemented and improvements are validated.

- Treating heuristic evaluation as a replacement for user testing rather than as a complementary activity.

- Evaluator bias could also creep in when evaluators are too close to the product, such as when they are internal team members.

- A poorly structured report, which leads to usability issues being ignored or misunderstood.

- Not setting the correct user context/goals or scope. Scenarios or tasks that are either too narrow, too vague, or even too broad can lead to shallow insights.

Tools and Templates for Heuristic Evaluation

To execute a high-quality heuristic evaluation, you can lean on a well-known and tested set of resources to streamline your entire evaluation workflow.

Heuristic Evaluation Templates: Organizations such as the Nielsen Norman Group offer downloadable worksheets and templates to guide evaluators through the process. These templates typically include heuristic checklists, severity rating scales, and documentation formats.

Digital Whiteboard Tools: Tools like Miro and Mural are excellent for collaborative evaluation sessions. They allow teams to cluster findings, visualize issues, and facilitate discussion during consolidation.

Spreadsheet-Based Tracking: Many teams use spreadsheets to document usability findings. These should include columns for the problem description, the specific heuristic violated, and a place for screenshots. Also, as teams mature, they often develop their own templates tailored to their workflows.
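For teams that prefer to generate the tracking sheet rather than build it by hand, here is a minimal sketch using Python's standard `csv` module. The column names and the `findings_to_csv` helper are assumptions. They extend the columns mentioned above with the location, severity, and recommendation fields described earlier in this guide.

```python
import csv
import io
from typing import Dict, Iterable

COLUMNS = [
    "id", "description", "location", "heuristic",
    "severity", "recommendation", "screenshot",
]


def findings_to_csv(rows: Iterable[Dict[str, object]]) -> str:
    """Serialize findings to CSV text that any spreadsheet tool can open."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS)
    writer.writeheader()
    writer.writerows(rows)  # missing columns are left blank
    return buffer.getvalue()


sheet = findings_to_csv([
    {
        "id": 1,
        "description": "Payment form shows a raw error code",
        "location": "Checkout, payment step",
        "heuristic": "Help users recognize, diagnose, and recover from errors",
        "severity": 3,
        "recommendation": "Explain the problem and suggest a fix",
    },
])
print(sheet)
```

A fixed column list keeps every evaluator's sheet compatible, which makes merging them during consolidation much simpler.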

Domain-Specific Heuristic Sets: If your product goes beyond the desktop, consider using heuristic sets developed specifically for mobile, VR, or voice interfaces.

Real-World Heuristic Evaluation Examples

Seeing how heuristic evaluation translates into business success is the best way to understand its power. Here are a few cases we have worked on at Fuselab Creative:

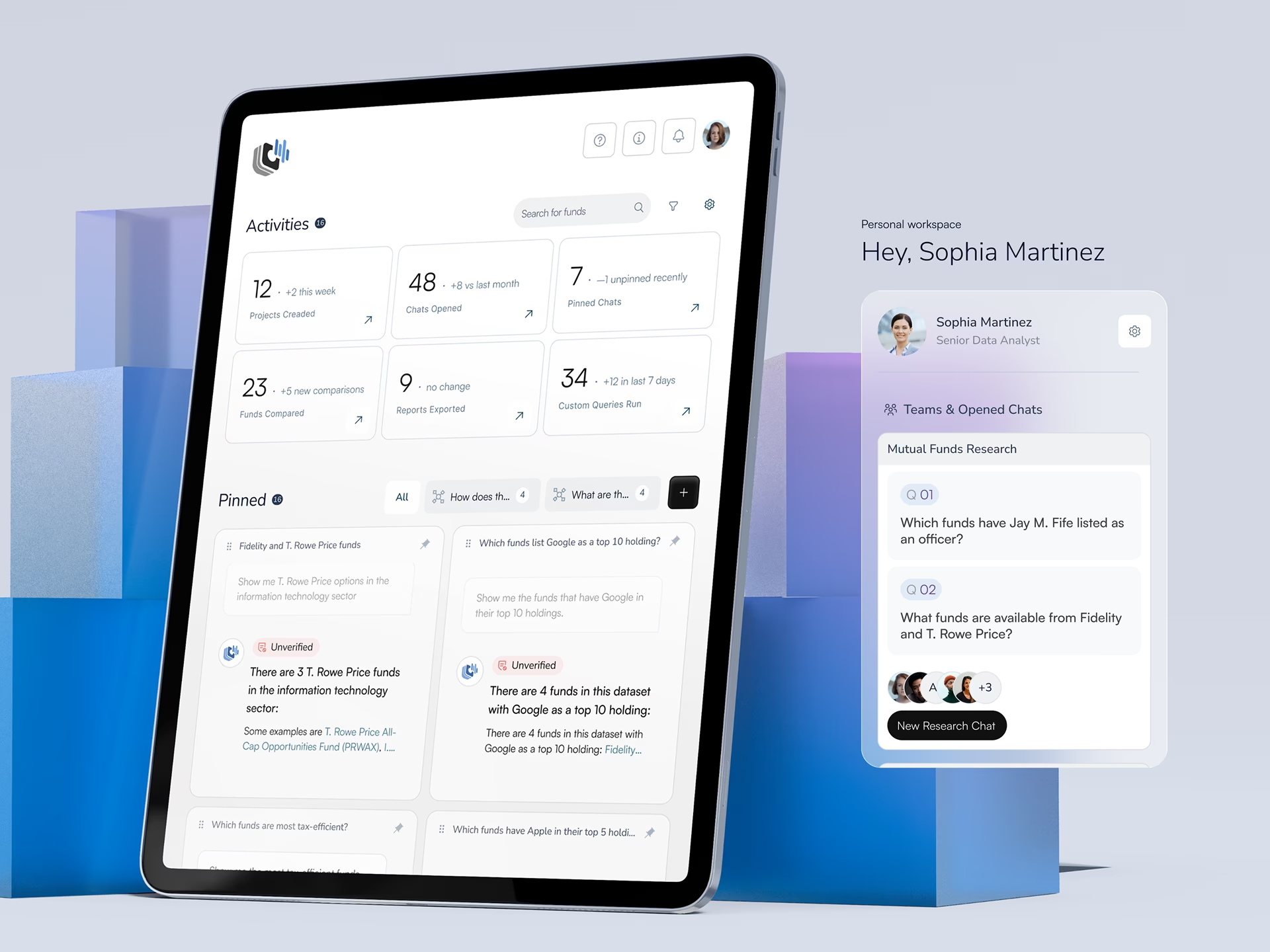

An e-commerce client came to us for an evaluation of their checkout flow. Our expert team identified 12 usability issues. Three of these were major flaws: lack of progress indicators (violating visibility of system status), forced account creation (violating user control and freedom), and confusing error messages in payment forms. All three led to cart abandonment. By fixing these design errors, the company saw a 15% increase in conversion.

In another case, a mobile app onboarding audit found several problems related to consistency and clarity: inconsistent messaging, a lack of guidance, and poor alignment with real-world user expectations. A redesign of the flow improved retention by 25%.

We have also seen heuristic evaluation work for complex B2B products; for a SaaS dashboard review, we discovered critical navigation and visibility issues. Identifying and rectifying the usability issues reduced support tickets by 30%. Additionally, in a healthcare portal audit, we found several accessibility violations. Our changes made the product 100% WCAG-compliant, protected the company from legal risk, and improved the experience for all users.

Conclusion

At its core, heuristic evaluation is about bringing discipline, structure, and expert insight into the process of improving usability.

At Fuselab Creative, we don’t treat it as a nice-to-have design exercise; for us, it is a powerful UX tool for quickly and cost-effectively identifying design problems.

However, remember, while this method is an incredible complement to user testing, it is not a replacement. The most successful teams combine expert evaluation with real user insights to create products that are both intuitive and effective.

If you are building products at scale, heuristic evaluation should not be an occasional exercise; it should be a regular part of your design process. Great user experiences aren’t accidental; they are systematically designed, reviewed, and refined.

And that’s exactly where most teams hit a wall. Identifying usability issues is one thing; knowing which ones are actually hurting your bottom line, and how to fix them without breaking your system, requires a specialist’s touch.

That’s where we come in.

At Fuselab Creative, we conduct deep, expert-led heuristic evaluations that go far beyond surface-level audits. Whether you are refining an existing product, preparing for launch, or scaling a complex platform, we help you see your UX the way your users actually experience it and fix what’s holding it back.

Do get in touch if you want to know more about improving usability!
