Chatbot UI design is the practice of designing the conversational surface, the visual signals for confidence and uncertainty, and the human escalation path through which users interact with an AI-powered assistant. It is distinct from chatbot development, which builds the underlying language model, retrieval logic, and integrations. Most enterprise chatbot projects need both, but the failures users actually notice almost always happen on the design side.

What a chatbot UI design engagement includes

A chatbot UI design engagement covers six concrete deliverables: the conversation flow design with decision trees for ambiguous inputs, the chat surface UI across every channel the bot will live on, the visual vocabulary for confidence and source attribution, the persona and voice guidelines, the human escalation interface, and the engineering handoff documentation. The work stops at the production model and retrieval pipeline. Those belong with the development partner.

Industries where chatbot UI design earns its keep

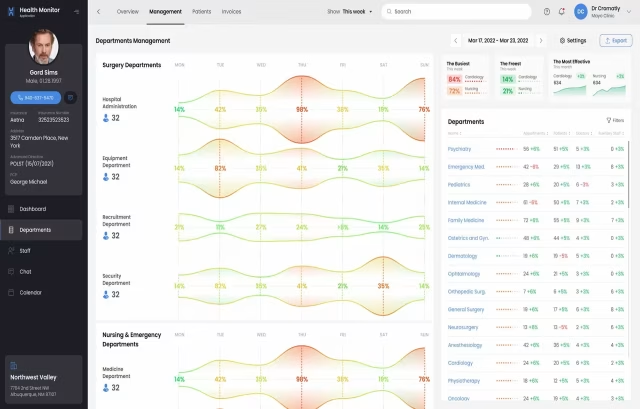

Chatbot UI design problems vary by industry. The visible interface decisions Fuselab makes on a clinical chatbot are not the decisions that show up on a federal records system or a fintech advisor bot. The six industries below are where chatbot UI design carries the highest stakes and the narrowest margin for error.

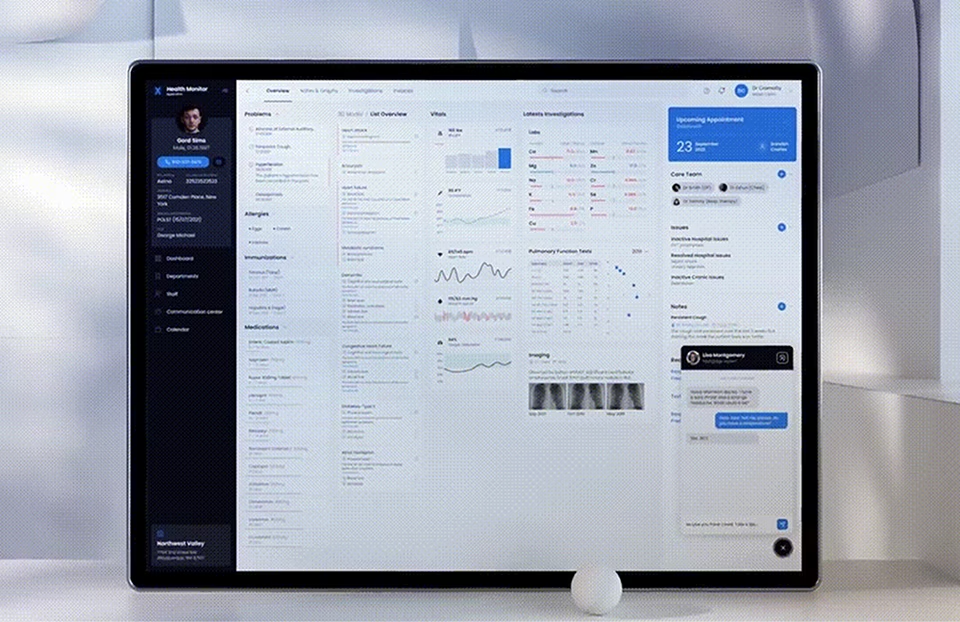

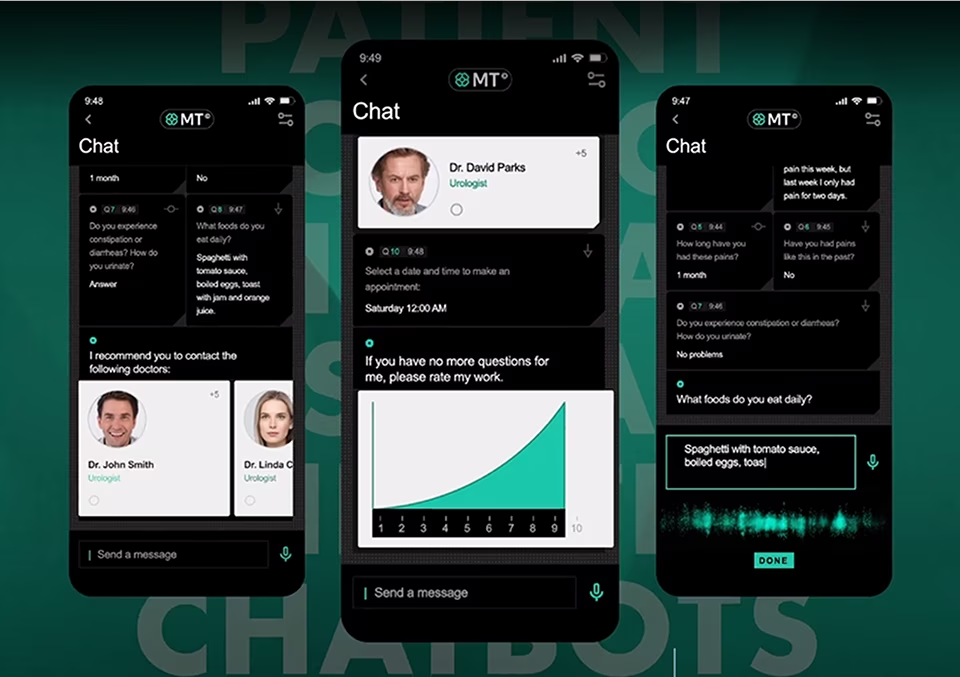

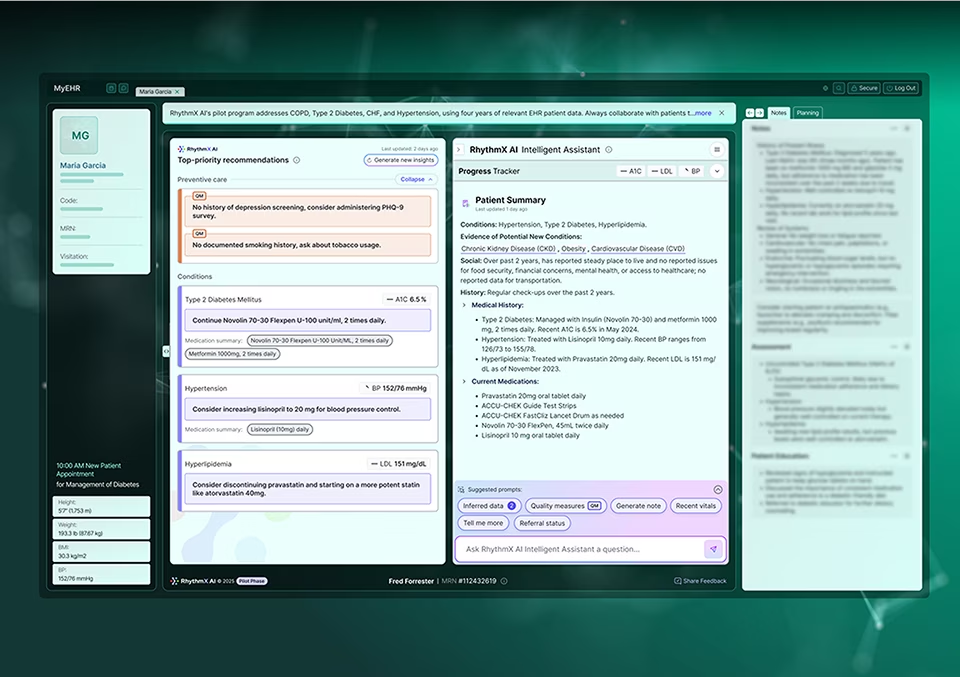

Clinical chatbot UI design carries a HIPAA exposure surface most consumer chatbots avoid entirely. Three interface decisions matter most: a message retention disclosure shown as a persistent element in the chat header rather than buried in a privacy policy; a PHI redaction pattern that is visible to the user when it triggers; and explicit signalling of provider versus patient context, because a chat surface shared by both audiences needs different defaults for what it surfaces.

Federal and state agency chatbots under Section 508 carry stricter accessibility requirements than most agencies design for: keyboard navigation through message history, screen-reader-readable confidence indicators, and sufficient contrast on every visual state, including the typing indicator. The audit visibility requirement means conversation history needs to be exportable in a format that satisfies records-retention rules without exposing more than the user authorized. Fuselab holds a GSA contract for this class of work.
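The contrast requirement above can be checked mechanically. The sketch below computes the WCAG contrast ratio for each visual state of a chat surface and lists the states that fall short. The state names and colour values are hypothetical, and the 4.5:1 default is the WCAG 2.0 Level AA minimum for normal-size text; a real audit would pick the threshold per element type.

```python
def _linear(channel: int) -> float:
    """Convert an 8-bit sRGB channel to its linear value (WCAG definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a colour given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio: (lighter + 0.05) / (darker + 0.05)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def failing_states(states: dict[str, tuple[str, str]], minimum: float = 4.5) -> list[str]:
    """Return the names of visual states whose contrast is below the minimum."""
    return [
        name
        for name, (foreground, background) in states.items()
        if contrast_ratio(foreground, background) < minimum
    ]


# Hypothetical visual states: (text colour, background colour).
chat_states = {
    "bot_message": ("#000000", "#FFFFFF"),
    "typing_indicator": ("#CCCCCC", "#FFFFFF"),
}
print(failing_states(chat_states))
```

Running the check over every state, not only the default message bubble, is what catches low-contrast transient elements such as the typing indicator.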

Banking and fintech chatbot interfaces are designed around regulatory disclosure and customer protection rather than around conversation feel. The interface needs to make clear when a chatbot response constitutes financial advice versus information, when escalation to a licensed advisor is required, and how the audit trail of the conversation is preserved for compliance review. Source attribution on financial data answers is non-negotiable: every number the chatbot returns needs a visible reference back to the underlying record.
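One way to enforce the attribution rule is to refuse to render any figure that arrives without a source reference. The sketch below is illustrative only: the `Figure` type, the `source_ref` field, and the record identifiers are hypothetical names, not part of any particular banking system or chatbot framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Figure:
    label: str
    value: str
    source_ref: Optional[str]  # hypothetical ID of the underlying record


class MissingAttribution(ValueError):
    """Raised when a figure would be shown without a visible source."""


def render_figures(figures: list[Figure]) -> list[str]:
    """Render each figure with its source, or fail if any source is missing."""
    missing = [f.label for f in figures if not f.source_ref]
    if missing:
        raise MissingAttribution(f"No source record for: {', '.join(missing)}")
    return [f"{f.label}: {f.value} (source: {f.source_ref})" for f in figures]


lines = render_figures([Figure("Balance", "$1,204.18", "stmt-2024-03")])
print(lines[0])
# Balance: $1,204.18 (source: stmt-2024-03)
```

Failing loudly at render time means an unattributed number becomes a handled error state in the interface, such as an escalation prompt, rather than an answer the user might act on.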

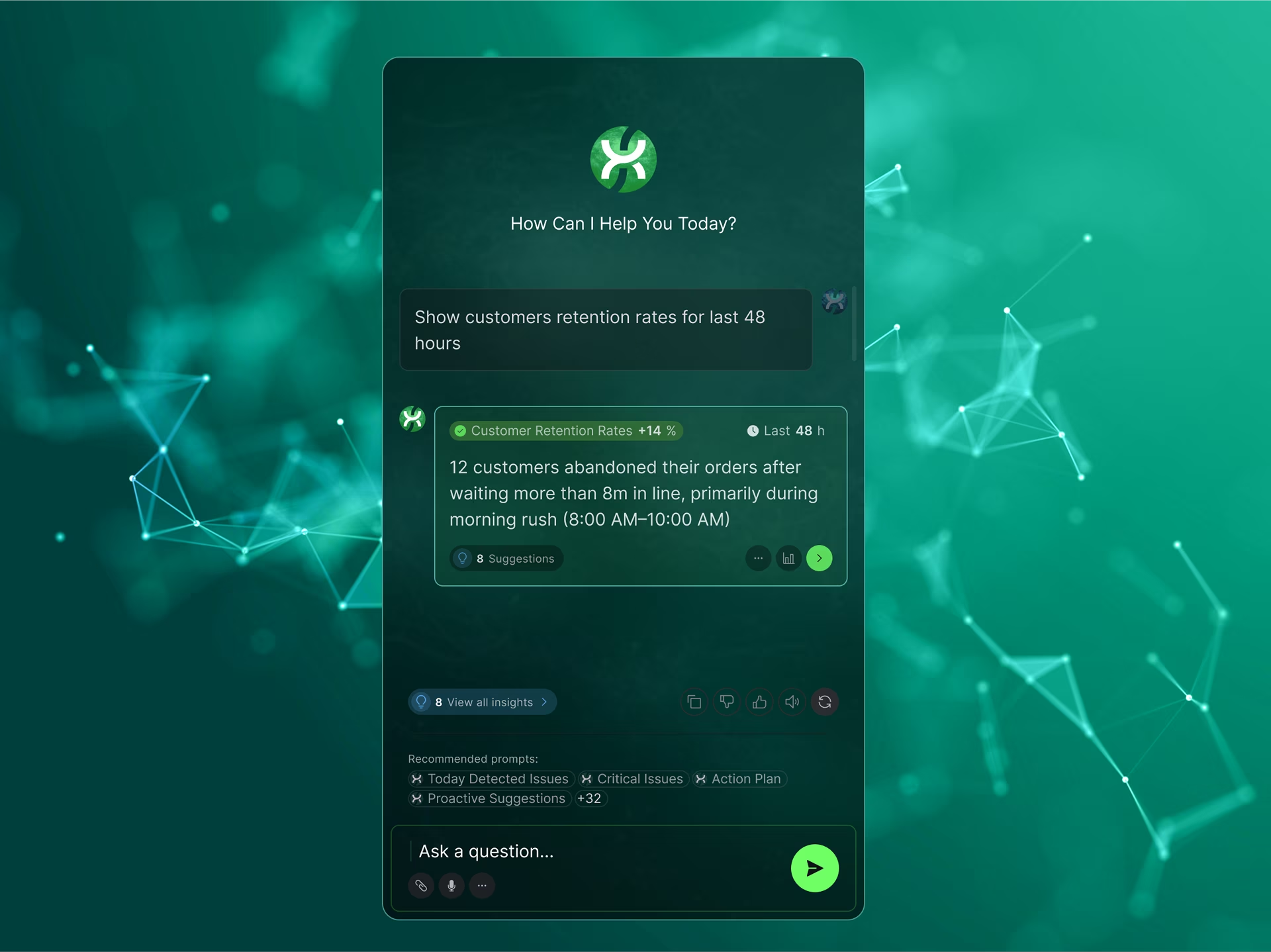

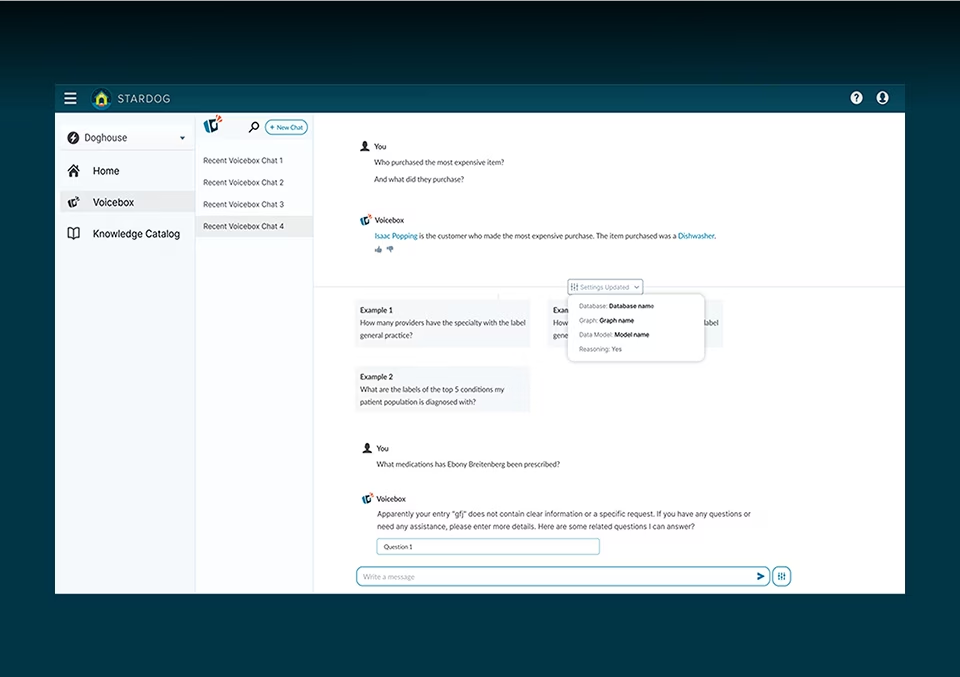

Product teams shipping AI and ML interfaces need chatbot UI design specifically around their model’s behaviour patterns, not generic chat UI templates. The confidence vocabulary, the source attribution treatment, the refusal pattern, the tool-use disclosure surface, and the human escalation path all need to be designed against what the model actually returns. Generic chat surfaces fail on AI products because they hide the uncertainty the user needs to see to trust the output.
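A confidence vocabulary can be written down as an explicit mapping from what the model returns to what the interface shows. The sketch below assumes the model exposes a score between 0 and 1, which not every model does. The band names, thresholds, and treatments are hypothetical and would need calibrating against the model's observed behaviour.

```python
from typing import Optional

# Hypothetical bands: (minimum score, state name, interface treatment).
CONFIDENCE_BANDS = [
    (0.85, "high", "Show the answer with its sources"),
    (0.50, "medium", "Show the answer with sources and a prompt to verify"),
    (0.00, "low", "Withhold the answer and offer escalation to a person"),
]


def confidence_state(score: Optional[float]) -> tuple[str, str]:
    """Map a model confidence score to a (state, treatment) pair."""
    if score is None:
        # The model returned no usable signal; do not imply certainty.
        return ("unknown", "Show the answer labelled as unverified")
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score must be between 0 and 1, got {score}")
    for minimum, state, treatment in CONFIDENCE_BANDS:
        if score >= minimum:
            return (state, treatment)
    raise AssertionError("unreachable: the lowest band starts at 0.0")


state, treatment = confidence_state(0.62)
print(state, "->", treatment)
# medium -> Show the answer with sources and a prompt to verify
```

Treating a missing score as its own state, rather than defaulting it to a band, keeps the interface from presenting an unscored answer as if it were a confident one.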

Dispatcher-facing and customer-facing chatbots in logistics and delivery operate under data accuracy constraints most consumer chatbots do not face. A delivery window misstated by fifteen minutes is worse than no answer at all, because the dispatcher acts on the answer. The interface needs to signal when the chatbot is reading from live tracking data versus cached or estimated data, and the design has to make the freshness of every response visible inline rather than assumed.
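The freshness signal described above can be modelled as a label attached to every answer. The sketch below is a minimal illustration: the `DataSource` categories mirror the live, cached, and estimated distinction in the text, while the type names, field names, and label wording are assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from enum import Enum


class DataSource(Enum):
    LIVE = "live"            # read from the tracking feed at answer time
    CACHED = "cached"        # read from a stored copy of an earlier reading
    ESTIMATED = "estimated"  # calculated rather than observed


@dataclass(frozen=True)
class TrackedAnswer:
    text: str
    source: DataSource
    observed_at: datetime  # when the underlying data was read or calculated


def freshness_label(answer: TrackedAnswer, now: datetime) -> str:
    """Build the inline freshness tag shown next to the answer text."""
    age_seconds = (now - answer.observed_at).total_seconds()
    minutes = max(0, int(age_seconds // 60))
    if answer.source is DataSource.LIVE:
        return f"Live tracking, updated {minutes} min ago"
    if answer.source is DataSource.CACHED:
        return f"Cached, last updated {minutes} min ago"
    return f"Estimate, calculated {minutes} min ago"


def render(answer: TrackedAnswer, now: datetime) -> str:
    """Render the answer with its freshness tag inline."""
    return f"{answer.text} [{freshness_label(answer, now)}]"


now = datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)
answer = TrackedAnswer(
    "Delivery window 14:00 to 14:30",
    DataSource.CACHED,
    now - timedelta(minutes=12),
)
print(render(answer, now))
# Delivery window 14:00 to 14:30 [Cached, last updated 12 min ago]
```

Because the label is built from the answer's own metadata, a response cannot be rendered without stating where its data came from and how old it is.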

Retail and direct-to-consumer chatbots are designed around the conversion path, not the conversation. The interface decisions concentrate on when the chatbot should hand the user back to the structured product page versus continue the conversation, how returns and exchange flows surface inside chat without losing the audit trail, and how the chatbot handles questions where a wrong answer creates a customer-service liability. Brand voice consistency is enforced through the persona design layer, not left to the model.

Frequently asked questions about chatbot UI design

The questions below come up in almost every chatbot UI design conversation Fuselab has with new clients. Each answer is grounded in the actual scope of a chatbot UI design engagement and the design decisions that determine whether a chatbot UI ships well or fails after launch.

What is chatbot UI design?

Chatbot UI design is the practice of designing the conversational surface, the visual signals for confidence and uncertainty, and the human escalation path through which users interact with an AI-powered assistant. It covers the chat surface UI across channels, the persona and voice guidelines, the source attribution and confidence vocabulary, and the handoff interface when the bot escalates to a human. Chatbot UI design is distinct from chatbot development, which builds the model and infrastructure underneath.

What does a chatbot UI design engagement include?

A chatbot UI design engagement at Fuselab includes six concrete deliverables: the conversation flow design with decision trees for ambiguous inputs, the chat surface UI across all channels the bot will live on, the visual vocabulary for confidence and source attribution, the persona and voice guidelines, the human escalation interface, and the engineering handoff documentation. The work stops at the production model and retrieval pipeline, which are the development partner’s scope.

How is chatbot UI design different from chatbot development?

Chatbot UI design covers the user-facing surface and the visible behaviour of the bot. Chatbot development covers the underlying language model, the retrieval pipeline, the integrations, and the production infrastructure. Most enterprise chatbot projects need both, but the failures users actually notice almost always happen on the design side: unclear confidence signals, broken escalation paths, wrong interaction patterns. A design partner and a development partner working in coordination usually produce better outcomes than a single shop attempting both.

How is chatbot UI different from chatbot UX?

Chatbot UI is the visual layer: the chat bubbles, buttons, input fields, attachment handling, and visual confidence indicators. Chatbot UX is the experience layer: how the conversation flows, when the bot asks for clarification, how it handles the user’s frustration, when it escalates. UI and UX are designed together in a chatbot engagement because they are inseparable in practice. The visual treatment of a confidence indicator is itself a UX decision about how to communicate model uncertainty to users.

How long does a chatbot UI design project take?

A project-based chatbot UI design engagement runs six to twelve weeks at Fuselab, depending on the number of chat surfaces, the regulatory context, and whether discovery is bundled. A discovery-led engagement that scopes the project before build runs two to four weeks. Multi-phase design partnerships span the full launch arc and the first 90 days post-launch, typically four to six months end to end.

What should I look for in a chatbot UI design agency for a regulated enterprise product?

Three signals separate a qualified regulated-context chatbot UI agency from a generalist. First, the agency has shipped at least one chatbot or conversational AI product in a comparable regulatory context, such as HIPAA, Section 508, or financial compliance, and can name the project. Second, the agency clearly distinguishes its design scope from chatbot development scope and does not pretend to do both. Third, the agency can describe its approach to source attribution, confidence vocabulary, and human escalation specifically, rather than describing a generic six-step process. For specific patterns and production examples that demonstrate this approach, see our chatbot UI examples and design best practices guide.

Do I need both a chatbot UI design partner and a chatbot development partner?

Most enterprise chatbot projects benefit from engaging both, but not always at the same time. Discovery and design work happens first, with a design partner shaping the conversation flow, the chat surface UI, and the engineering handoff documentation. Development work then executes against the design with a development partner building the model, the retrieval pipeline, and the integrations. A single shop attempting both usually compromises one side. Fuselab can recommend development partners it has worked with successfully if a client does not yet have one.
