An AI design agency specializes in designing interfaces for products where the underlying system is probabilistic rather than deterministic, and the core design problems are different from standard product work. How does the interface communicate model confidence without alarming the user? How does a verification marker work when the audience is a financial analyst acting on the output? How does a clinician override a recommendation they disagree with? Those questions define the discipline, and they show up across conversational AI platforms, ML experiment dashboards, clinical AI products, and enterprise data tools where outputs are generated rather than retrieved.

AI Interface Design Specializations

The design problems in AI interfaces cluster into five areas: communicating model confidence, handling failure states, giving users meaningful override control, visualizing probabilistic outputs, and setting accurate expectations about what the system can and cannot do. Each area requires different interaction patterns and different testing protocols than standard product design, and it is the combination of all five that defines what an AI design agency actually delivers versus a general UX firm applying standard methods to a non-standard product.

AI Design Agency Services: From Strategy to Development

Conversational Interfaces

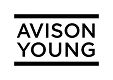

Conversational AI interfaces handle a design problem that button-based interfaces do not: the user can say anything, and the system has to respond to inputs it was not explicitly designed for. The interface must manage multi-turn context, handle ambiguity gracefully, and make it obvious when the system did not understand the request rather than guessing silently. On the Stardog Voicebox platform, this meant a dual-panel workspace where the conversation runs alongside the data output, so financial analysts can see both the query history and the generated analysis without switching views.

AI User Interface Design

AI-augmented interfaces pair machine-generated suggestions with user-produced content in the same workspace. The design challenge is making AI contributions visually distinct from user content without disrupting the workflow. Confidence indicators, inline attribution, and dismissible suggestion states all need to be designed so the user stays in control of the final output rather than passively accepting generated content that may contain errors.

Adaptive/Personalized Interfaces

Adaptive interfaces modify what they show based on accumulated user behavior, which creates a design problem standard interfaces do not have: the product looks different to every user, and testing has to account for personalized states rather than a single fixed layout. The interface also needs to make the adaptation visible and overridable, because a user who does not understand why their view changed will assume the product is broken.

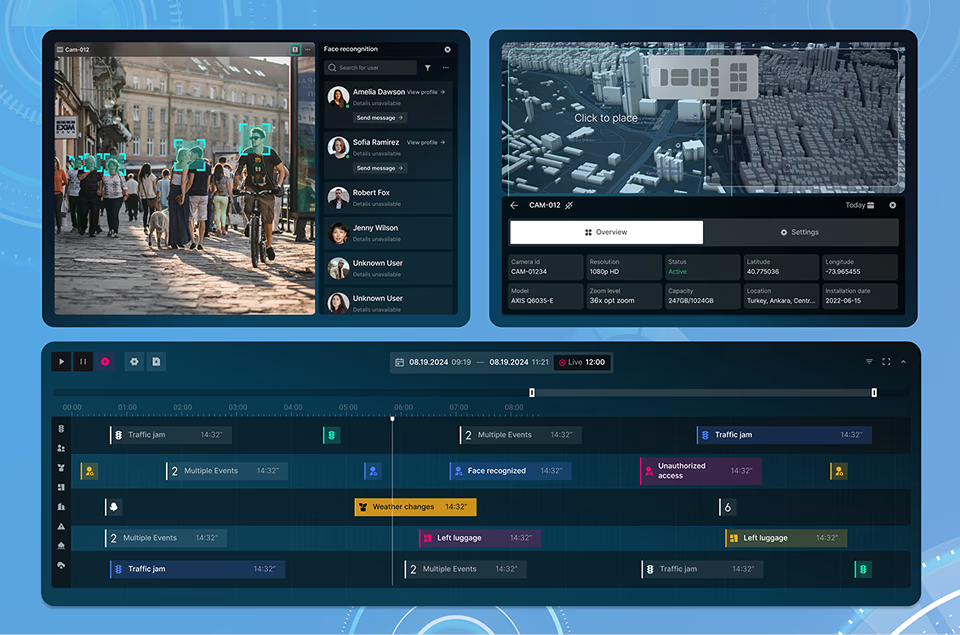

Ambient Intelligence Interfaces

Ambient intelligence interfaces operate in the background and surface information only when a threshold is crossed or an anomaly is detected. The design challenge is deciding what threshold justifies interrupting the user versus what should be logged silently. In monitoring dashboards for fleet management or solar grid operations, getting that threshold wrong means either alert fatigue from too many notifications or missed failures from too few.
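
The interrupt-versus-log decision can be made explicit as a pair of thresholds on an anomaly score. The sketch below is illustrative only: the score range, the default threshold values, and the names `AlertPolicy` and `route_reading` are assumptions, not taken from any product described here.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    IGNORE = "ignore"        # normal reading, nothing recorded
    LOG = "log"              # recorded silently for later review
    INTERRUPT = "interrupt"  # surfaced to the user immediately


@dataclass(frozen=True)
class AlertPolicy:
    # Hypothetical thresholds on an anomaly score in [0, 1].
    log_at: float = 0.5
    interrupt_at: float = 0.9


def route_reading(score: float, policy: AlertPolicy = AlertPolicy()) -> Action:
    """Decide whether an anomaly score interrupts the user or is logged."""
    if score >= policy.interrupt_at:
        return Action.INTERRUPT
    if score >= policy.log_at:
        return Action.LOG
    return Action.IGNORE
```

Keeping both thresholds in one policy object means the alert-fatigue trade-off can be tuned per deployment without touching the routing logic.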

Multimodal Interfaces

Multimodal interfaces accept input through voice, text, gesture, and visual channels within the same interaction. The design problem is not adding more input methods but deciding which input type is primary for each task and how the system responds when inputs from different channels conflict. An AR heads-up display that accepts both voice and gesture needs a clear hierarchy for which input takes priority during a simultaneous command.
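
A priority hierarchy for simultaneous commands might look like the following sketch. The channel order, the 300 ms window, and the `Command` and `resolve` names are hypothetical; a real product would set them per task from user testing.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical priority order for one task; lower number wins.
CHANNEL_PRIORITY = {"voice": 0, "gesture": 1, "text": 2}


@dataclass(frozen=True)
class Command:
    channel: str       # "voice", "gesture", or "text"
    intent: str        # e.g. "zoom_in"
    timestamp_ms: int  # when the input was received


def resolve(commands: list[Command], window_ms: int = 300) -> Optional[Command]:
    """Pick one command from inputs that arrive within the same time window."""
    if not commands:
        return None
    earliest = min(c.timestamp_ms for c in commands)
    simultaneous = [c for c in commands if c.timestamp_ms - earliest <= window_ms]
    # Highest-priority channel wins; ties fall back to the earliest input.
    return min(simultaneous, key=lambda c: (CHANNEL_PRIORITY[c.channel], c.timestamp_ms))
```

Inputs that arrive after the window are left out of the comparison, so they can be handled as a separate command rather than a conflict.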

Transparent AI Interfaces

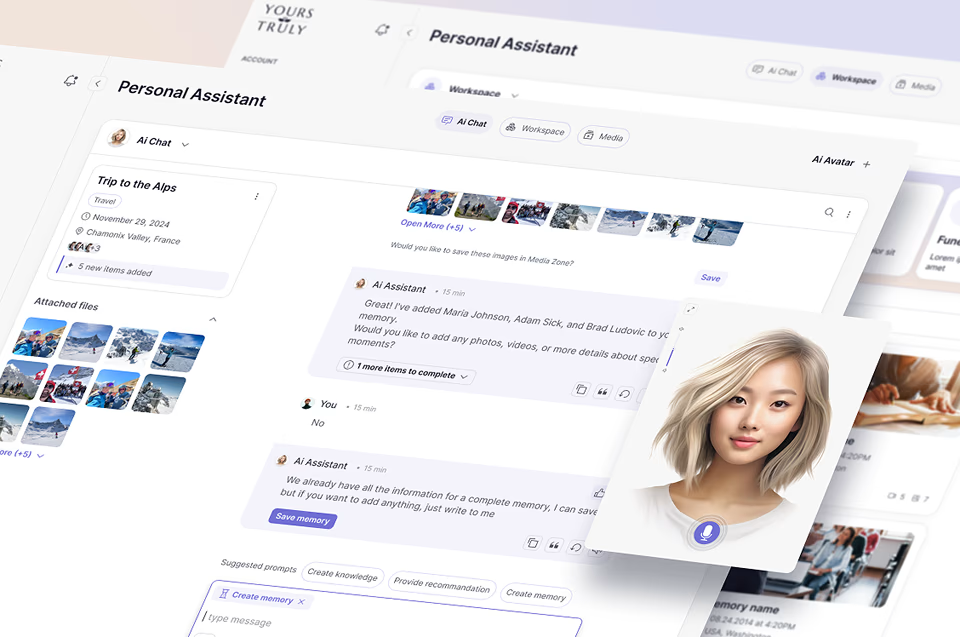

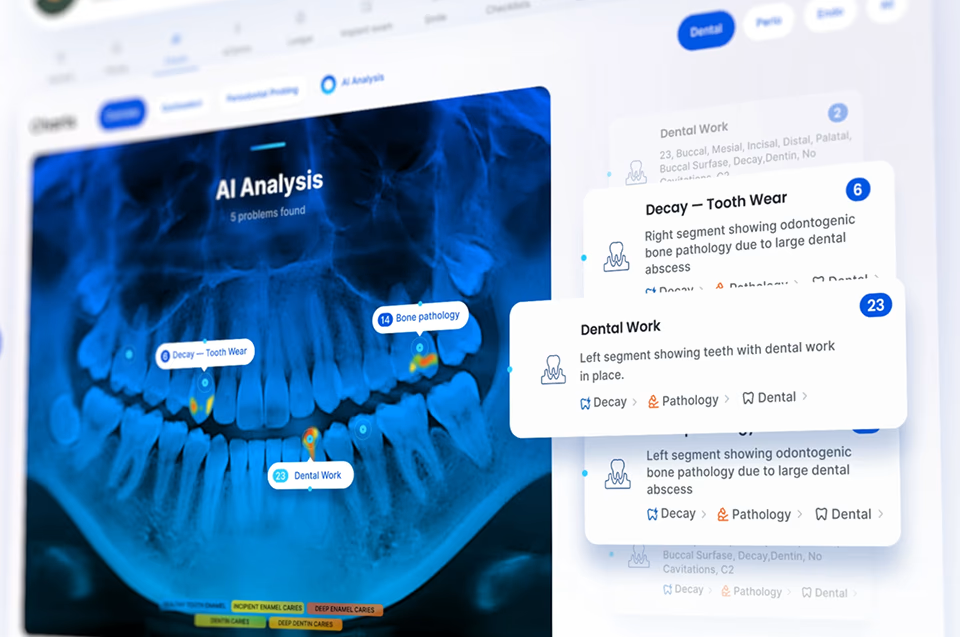

Transparent AI interfaces surface the reasoning path behind every generated output so the user can evaluate the conclusion rather than simply accepting or rejecting it. In healthcare, this means showing which patient data points contributed to a recommendation and what confidence level the model assigned. In financial analysis, it means linking each generated insight back to the specific data sources and calculations that produced it, so an analyst can audit the output before acting on it.

Designing AI Interfaces Users Understand

The core design problem in an AI interface is not the technology. It is trust. A user who cannot tell how the system reached a conclusion will not act on it. A user who cannot tell when the system is uncertain will either over-rely on it or dismiss it entirely. Every design decision on an AI product either builds or erodes the user's confidence in the system. Trust is a design material, not a side effect.

Making an AI system’s behavior visible to the user is a specific design problem, not a philosophy. It requires deciding what level of confidence to surface, how to present the source data alongside the generated response, and how to communicate uncertainty without undermining the value of accurate responses. On Stardog Voicebox, this meant verification markers on each AI response that distinguished high-confidence outputs from those warranting manual review. The calibration required extensive testing: markers too prominent undermined confidence in accurate responses, markers too subtle were ignored. That is the real work of AI transparency design.
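
At its simplest, a verification marker is a mapping from a confidence score to a display state. The sketch below assumes a two-level scheme and a cutoff of 0.8; both are placeholders for illustration, not the values or the logic used on Stardog Voicebox.

```python
from enum import Enum


class Marker(Enum):
    VERIFIED = "verified"           # high confidence, subtle marker
    REVIEW = "needs_manual_review"  # lower confidence, more prominent marker


def marker_for(confidence: float, review_below: float = 0.8) -> Marker:
    """Map a model confidence score in [0, 1] to a verification marker."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return Marker.REVIEW if confidence < review_below else Marker.VERIFIED
```

The mapping is the easy part. How prominent each marker should look is the question that takes the testing.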

Users who do not understand what an AI system cannot do will eventually encounter a failure they were not prepared for, and that single failure destroys the trust built by dozens of accurate responses. Setting accurate expectations is not a disclaimer problem. It is an interface design problem. The solution is not a help document. It is a first-load experience that surfaces real examples of what the system handles well, contextual cues that signal when a query is outside the model’s reliable range, and failure states that are designed as part of the product rather than as afterthoughts.

Automation is only valuable when the user retains the ability to override it without friction. An AI product that automates a decision the user disagrees with but cannot change will be abandoned. The design question is not how much to automate but how to make the automation visible and reversible. This means surfacing AI recommendations as recommendations rather than conclusions, designing override flows that are as fast as accepting the recommendation, and making the logic behind the automation readable enough that the user knows what to override and why.

Conversational AI interfaces fail when they try to simulate human conversation rather than designing for what the interaction actually is: a user querying a system that has limited context and variable reliability. The practical design problems are handling multi-turn context without losing the thread, surfacing when the system misunderstood a query rather than guessing silently, and managing response latency that varies with query complexity. On financial analysis platforms, a two-second delay on a simple lookup and a twelve-second delay on a complex calculation need different loading states, because applying the same spinner to both erodes confidence in the fast response and creates impatience on the slow one.
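
Choosing a loading state from the expected wait can be sketched as a small lookup. The cutoffs of 1 and 5 seconds and the state names are assumptions for illustration; the right values depend on the product and its users.

```python
def loading_state(expected_seconds: float) -> str:
    """Choose a loading treatment from the expected response time."""
    if expected_seconds < 1.0:
        return "none"              # fast enough that no indicator is shown
    if expected_seconds < 5.0:
        return "spinner"           # short wait, e.g. a simple lookup
    return "progress_with_steps"   # long wait, e.g. a complex calculation
```

With these placeholder cutoffs, a two-second lookup gets a plain spinner and a twelve-second calculation gets a stepped progress view.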

AI interfaces require a different iteration cycle than standard products because the system underneath them changes independently of the design. A model retrained on new data may produce different confidence distributions, different error patterns, and different response latencies. Nielsen Norman Group’s research on AI usability patterns reinforces that the interface needs to be tested against each model update, not just each design update. Confidence indicators and uncertainty signals should be designed as adjustable parameters that can be recalibrated when model accuracy shifts, without rebuilding the interface architecture.
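
One simple heuristic for recalibration is to recompute the confidence cutoff from a fresh validation set after each model update. The sketch below assumes labeled validation samples are available and uses a hypothetical `recalibrate` function and a 95% accuracy target; it is one possible approach, not an established calibration method from the sources above.

```python
def recalibrate(samples: list[tuple[float, bool]], target_accuracy: float = 0.95) -> float:
    """Return the lowest confidence cutoff whose outputs meet the target accuracy.

    `samples` pairs each model confidence with whether the output was correct.
    Returns 1.0 if no cutoff reaches the target.
    """
    for cutoff in sorted({conf for conf, _ in samples}):
        # Outputs at or above this cutoff would be shown as high confidence.
        kept = [ok for conf, ok in samples if conf >= cutoff]
        if sum(kept) / len(kept) >= target_accuracy:
            return cutoff
    return 1.0
```

Because the cutoff is data rather than layout, a retrained model changes one number in configuration and leaves the interface architecture alone.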

Ethical AI design is a set of interface decisions, not a policy document. It means testing outputs across demographic groups to catch bias before users encounter it, designing consent flows that explain data usage in context rather than burying it in legal language, and building reporting mechanisms that let users flag outputs they believe are incorrect or unfair. The practical test is whether a user encountering a biased output has a visible, low-friction path to report it and whether the system communicates what happens after the report is submitted.

AI UX/UI Design for Machine Learning Applications Simplified

Designing AI products requires a process that accounts for what standard product design does not encounter: model uncertainty, probabilistic outputs, user trust calibration, and edge cases that only appear when real users interact with a live model. Each stage below addresses a specific category of risk that, if missed, surfaces after launch instead of before it.

AI product design starts with understanding what the system is being asked to do and for whom. Before any interface work begins, the research maps the user’s workflow, identifies where AI adds genuine value, and defines what a correct versus incorrect AI output looks like to that specific user. This is different from standard UX research because the design has to account for model behavior, not just user behavior.

Concept development for AI interfaces focuses on how the product handles uncertainty before it handles success. The most important design decisions are what happens when the model is wrong, when it is uncertain, and when the user disagrees with its output. These scenarios are designed explicitly in the concept phase rather than discovered during testing.

AI prototypes test real model behavior with real users. The critical step is building prototypes that include actual model outputs rather than static placeholder responses, so user testing surfaces how people respond to real uncertainty, real errors, and real latency. The difference between a prototype with fake AI responses and one with real model outputs is the difference between testing the layout and testing the product.

Testing AI interfaces requires designing test scenarios around edge cases and failure states, not just successful interactions. Effective testing protocols include tasks where the model is uncertain, where the model is wrong, and where the user needs to override the AI recommendation. Designs that only work when the model is correct are not production-ready.

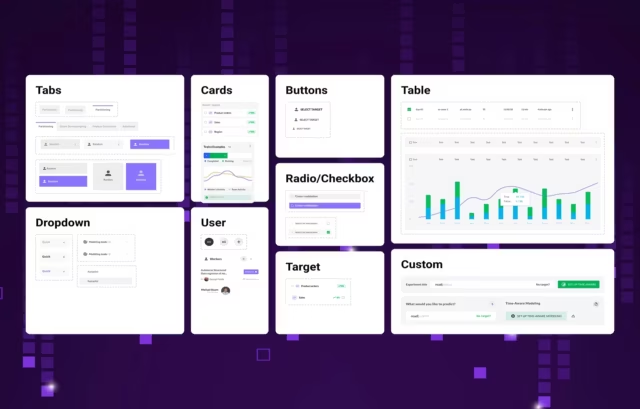

Design handoff for AI products includes specifications for every state the interface enters during a model interaction: loading, response, low confidence, error, empty, and override. Component documentation that covers all of these states allows development teams to build accurately without returning for clarification on edge cases that were not anticipated during the design phase.
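
A handoff checklist for these states can be enforced mechanically. In the sketch below the six state names come from the paragraph above, while the `InteractionState` enum, the `missing_states` helper, and the dictionary-based spec format are assumptions made for illustration.

```python
from enum import Enum


class InteractionState(Enum):
    LOADING = "loading"
    RESPONSE = "response"
    LOW_CONFIDENCE = "low_confidence"
    ERROR = "error"
    EMPTY = "empty"
    OVERRIDE = "override"


def missing_states(spec: dict[str, str]) -> list[str]:
    """List the interaction states a component spec does not yet document."""
    return [s.value for s in InteractionState if s.value not in spec]
```

Running a check like this against each component's documentation before handoff catches undocumented states while they are still cheap to design.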

AI products change as the underlying models improve. An interface designed around a model’s current accuracy level needs to be reconsidered when that accuracy improves significantly. Confidence indicators and uncertainty signals should be built as adjustable parameters that can be recalibrated when the model changes, without requiring the interface architecture to be rebuilt from the ground up.

User Consent

Consent mechanisms in AI products are frequently buried in legal language that no user actually reads, which means consent is technically obtained but not informed. The interface fix is contextual: explain data usage at the moment data is being collected, not in a policy document accessed from a footer link. Opt-in must be explicit rather than pre-checked, revocation must be accessible at any point in the product, and the user must be able to see specifically which AI features use their data and what happens when they withdraw permission.
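
These consent rules translate into a small data model. The sketch below is a minimal illustration with hypothetical names (`ConsentRecord`, `opt_in`, `revoke`); it shows the defaults only and leaves out storage, authentication, and legal review.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRecord:
    # Maps a feature name to whether the user has opted in.
    # A feature with no entry is treated as not consented.
    granted: dict[str, bool] = field(default_factory=dict)

    def opt_in(self, feature: str) -> None:
        self.granted[feature] = True

    def revoke(self, feature: str) -> None:
        self.granted[feature] = False

    def allows(self, feature: str) -> bool:
        # Default is no consent: nothing is pre-checked.
        return self.granted.get(feature, False)

    def features_using_data(self) -> list[str]:
        """Features currently permitted to use the user's data, for display."""
        return sorted(f for f, ok in self.granted.items() if ok)
```

The `features_using_data` list is what a settings screen would render so the user can see, and withdraw, each permission individually.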

Data Protection

Data minimization means collecting only what the AI system needs to function, not everything that might be useful later. The interface should make it visible what data is being collected and why, rather than operating on background data the user never consented to or forgot they consented to. For healthcare AI products, this intersects directly with HIPAA requirements, where the interface must enforce role-based data access and maintain audit trails for every data interaction that touches patient records.
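
Role-based access with an audit trail can be sketched as a single read path that logs every attempt. The roles, field names, and `AuditedRecords` class below are hypothetical, and the example illustrates the pattern only; it does not by itself satisfy HIPAA or any other regulation.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Hypothetical role-to-field permissions; real policies come from compliance review.
ROLE_FIELDS = {
    "clinician": {"diagnosis", "medications", "lab_results"},
    "billing": {"insurance_id"},
}


@dataclass
class AuditedRecords:
    records: dict[str, dict[str, str]]
    audit_log: list[dict] = field(default_factory=list)

    def read(self, user: str, role: str, patient_id: str, field_name: str) -> Optional[str]:
        """Return a field if the role permits it; log every attempt either way."""
        allowed = field_name in ROLE_FIELDS.get(role, set())
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "user": user, "role": role, "patient": patient_id,
            "field": field_name, "allowed": allowed,
        })
        if not allowed:
            return None
        return self.records.get(patient_id, {}).get(field_name)
```

Denied reads are logged alongside permitted ones, so the trail shows what was attempted as well as what was seen.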

Protecting Against Bias

Algorithmic bias in AI interfaces is difficult to address because it is often invisible until a specific user group encounters it. The interface-level response is threefold: test model outputs across demographic segments before launch, design reporting mechanisms that let users flag outputs they believe are biased or incorrect, and surface the data sources behind each recommendation so users can evaluate whether the input data itself is skewed. An AI interface that presents every recommendation as neutral without surfacing the basis for it gives the user no way to catch a bias the testing missed.
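
Pre-launch segment testing can start with a per-segment error-rate comparison. The sketch below assumes labeled test results are available per segment and uses a hypothetical 5-point gap as the flag; an error-rate gap is only one of several fairness checks and is not a complete audit.

```python
from collections import defaultdict


def error_rates(results: list[tuple[str, bool]]) -> dict[str, float]:
    """Compute the share of incorrect outputs per segment.

    `results` pairs a segment label with whether the model output was correct.
    """
    totals: dict[str, int] = defaultdict(int)
    errors: dict[str, int] = defaultdict(int)
    for segment, correct in results:
        totals[segment] += 1
        if not correct:
            errors[segment] += 1
    return {seg: errors[seg] / totals[seg] for seg in totals}


def flag_disparity(rates: dict[str, float], max_gap: float = 0.05) -> bool:
    """Flag when the gap between best and worst segment exceeds `max_gap`."""
    return bool(rates) and max(rates.values()) - min(rates.values()) > max_gap
```

A flagged gap is a prompt to look at the underlying data before launch. It says nothing yet about where the bias comes from.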

Accountability

Accountability in AI interfaces means three things at the design level: the interface clearly indicates which content or decisions were generated by AI versus entered by a human, every AI-generated output shows a confidence level or source attribution the user can evaluate, and there is a visible, low-friction path for users to dispute or override any automated decision. If a user encounters an output they disagree with and cannot find a way to challenge it within two clicks, the accountability design has failed.

AI Interface Design: Common Questions

How do you design interfaces for ML-powered products?

As an AI design agency, we specialize in AI interface design that makes ML algorithms transparent and understandable to end users. Our approach begins with understanding your ML model’s capabilities and limitations, then translating complex outputs into clear visual hierarchies that communicate confidence levels, predictions, and decision-making logic. We conduct user research to identify what information users need to trust ML-powered systems, then create interface patterns that balance transparency with simplicity.

Can you design conversational interfaces for LLM-based chatbots?

Yes, conversational AI interfaces are a core part of Fuselab’s AI UX design work. We map conversation flows based on user intent research, design progressive disclosure patterns that reveal AI capabilities gradually, and build clear feedback mechanisms that help users understand what the LLM can and cannot do. Our designs include thoughtful error handling, context preservation, and escape routes when conversations go off track. Specific UI patterns and production examples are documented in our chatbot UI examples and best practices guide.

What makes designing for AI products different from traditional UX?

AI design requires addressing unique challenges like explaining ML model confidence, managing user expectations around AI-powered features, and designing for probabilistic rather than deterministic outcomes. We approach this by creating transparency layers that expose how AI systems make decisions, designing feedback loops that help AI systems learn from user corrections, and establishing clear mental models that help users understand AI capabilities and limitations. Our methodology includes testing with real users to validate that AI behaviors align with expectations.

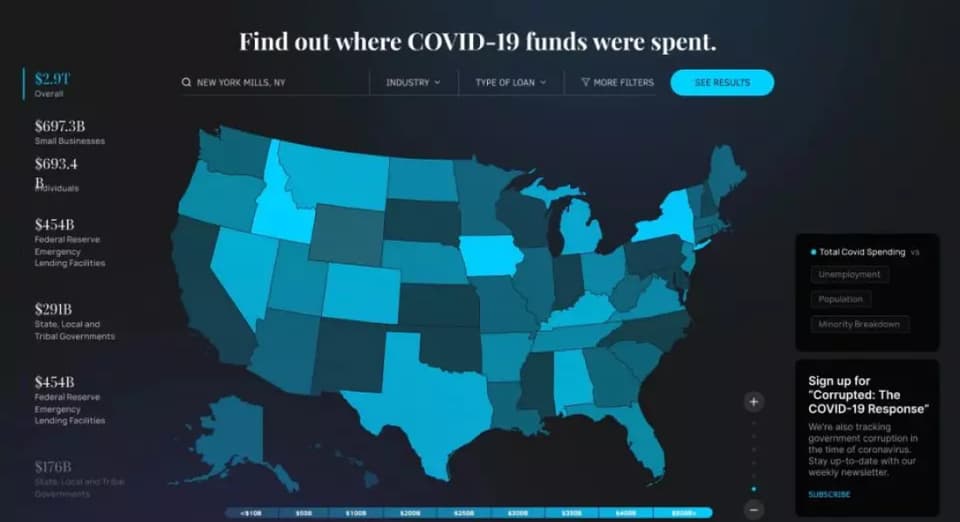

Do you design dashboards for AI analytics and predictions?

Yes. Data visualization for AI interfaces is one of our core strengths, especially for ML-driven analytics and predictions. We design dashboards that layer information progressively, starting with high-level insights and allowing users to drill into model details, confidence intervals, and contributing factors. Our approach includes creating visual indicators for prediction accuracy, temporal patterns, and anomaly detection that help users make informed decisions based on AI outputs.

How do you handle designing for NLP and natural language interfaces?

Our AI interface design approach for NLP and conversational AI focuses on setting clear expectations, providing helpful error handling, and designing fallback experiences when natural language understanding fails. We create onboarding flows that teach users effective query patterns, design suggestion systems that guide users toward successful interactions, and build progressive enhancement strategies that improve as the NLP system learns. We also establish visual affordances that clarify when users are interacting with AI versus traditional interface elements.

Can you redesign an existing AI product to improve user adoption?

Yes, as an AI design agency we frequently improve the UX of existing AI-powered products by identifying adoption barriers through user research and analytics review, then redesigning interfaces to build user trust and understanding. Our process includes auditing current AI interactions, mapping user pain points, and creating incremental improvements that increase transparency without overwhelming users. We focus on making AI capabilities more discoverable through contextual education and designing confidence-building experiences that demonstrate value early.

How long does it take to design an AI product interface?

AI design timelines typically range from 6 to 12 weeks depending on complexity. That span covers AI strategy development, ML model integration planning, and iterative design refinement through our Lean UX methodology. We work in sprints that allow for continuous validation with your team and users, adjusting our approach based on technical constraints and user feedback. Our collaborative process includes regular check-ins with data science teams to ensure designs remain feasible while pushing for optimal user experiences.

Do you work with our data science team during the design process?

Yes. As an AI design agency, we collaborate closely with data science teams through embedded design sprints to understand ML model capabilities, LLM behaviors, and technical constraints that inform our design decisions. We facilitate workshops that bridge the gap between technical possibilities and user needs, create shared documentation that aligns design and development, and iterate designs based on model performance and accuracy considerations. This partnership ensures our designs are both user-friendly and technically feasible while optimizing for the best possible AI outcomes.

Can you help design AI features for our existing product?

Yes, we specialize in AI UX design and AI strategy for integrating AI-powered features into existing products without disrupting current user experiences. Our approach includes conducting user research to identify where AI can add the most value, creating design patterns that feel native to your existing interface, and establishing gradual rollout strategies that build user confidence. We help you prioritize which features to enhance with AI based on user impact and technical feasibility, ensuring each addition strengthens rather than complicates your product.

What deliverables do we get from your AI design process?

Our AI interface design deliverables include wireframes, interactive prototypes, comprehensive design systems, and ML-specific UI patterns that address unique challenges like model confidence visualization, loading states for AI processing, and intelligent error handling. You’ll receive detailed specifications for edge cases, design documentation that guides your development team through AI-specific interactions, and reusable component libraries that maintain consistency across your AI features. We also provide design principles and guidelines specific to your AI implementation to ensure quality as your product evolves.
