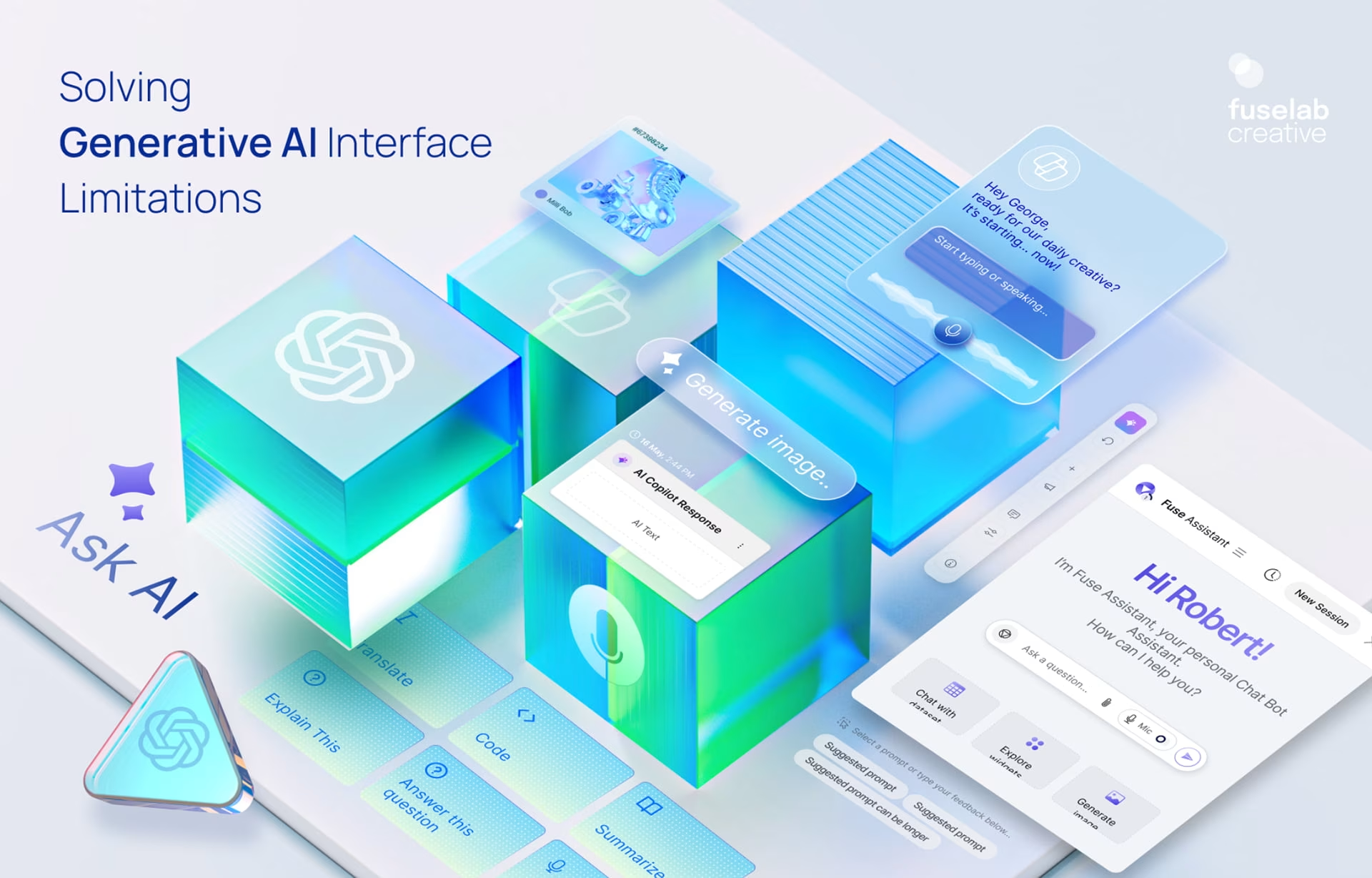

Solving Generative AI Interface Limitations: A Quick Guide

A subfield of artificial intelligence, generative AI has positioned itself as a powerful technology across industries and verticals. The generative AI interface helps users create new content—like text, images, and code—quickly changing how we work, learn, and use technology.

As its applications continue to multiply, developers are working to address its limitations so that it can be adopted more widely. What are the common challenges in interacting with generative AI models? Are there practical ways to improve the user interface experience and the accuracy and relevance of generated outputs? How do we unlock the full potential of generative AI to solve complex real-world problems?

This brief guide covers it all.

What Are Generative AI Interfaces?

Generative AI is a kind of artificial intelligence that can create new content like text, images, audio, and video. Unlike traditional AI systems that focus on specific tasks, generative AI models first look at large sets of data to learn patterns and then produce original content similar to what they learned.

So, what are generative AI interfaces?

Interfaces act as the bridge between users and generative AI models. Put simply, they are the means for users to input prompts or requests and receive the output content that the AI generates.

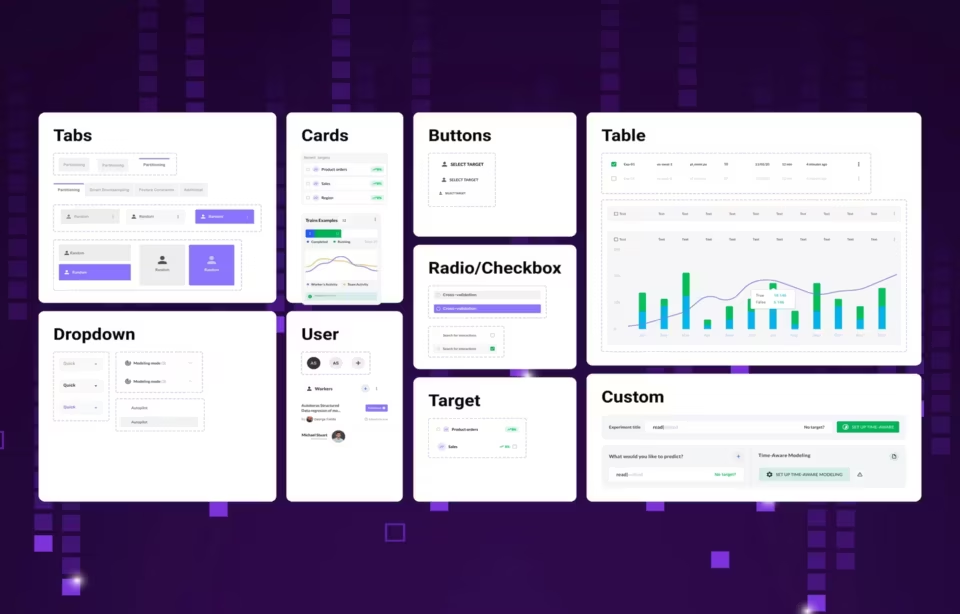

Interfaces can be as simple as a text box for entering prompts or as complex as a visual editor for creating and modifying images. Either way, their quality and usability largely determine how effectively generative AI technologies are adopted and used.

It is no secret that generative AI has immense potential. Experts predict the global market for this technology will hit $126.5 billion by 2031. This growth highlights the need for easy-to-use interfaces that can make the most of generative AI models.

Common Limitations of Generative AI Interfaces

While generative AI has made considerable strides with its vast capabilities, it is not without limitations. Interactions with generative AI interfaces present several hurdles.

#1 Lack of Contextual Understanding

One significant drawback of generative AI interfaces is that they struggle to keep track of broader context. Simply put, these models can handle and create text easily, but following the details of a conversation or the bigger picture of a topic is often challenging.

This often results in confusing or unrelated answers because the AI struggles to link different pieces of information. To put this into perspective, a large language model can only process a fixed number of tokens at a time, known as its context window. Early models were commonly limited to around 4,096 tokens. Newer models accept far more, but the window is always finite.

Because of this, these models have a hard time keeping track of long conversations and giving clear answers.
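To make the context window limit concrete, here is a minimal sketch of how an interface might drop older messages once a token budget is exceeded. The function names and the word-based token count are assumptions for illustration only; real tokenizers split text differently.

```python
from typing import List


def count_tokens(text: str) -> int:
    """Very rough token estimate: one token per whitespace-separated word.

    Assumption: this stands in for whatever tokenizer your model uses.
    """
    return len(text.split())


def trim_history(messages: List[str], max_tokens: int) -> List[str]:
    """Keep the most recent messages whose combined size fits the budget."""
    kept: List[str] = []
    used = 0
    # Walk backwards so the newest messages are considered first.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    "My name is Dana and I manage a bakery.",
    "I need a slogan for our new sourdough line.",
    "Make it playful.",
]

# With a budget of 12 "tokens", the first message no longer fits,
# so the model would never see the user's name or business.
print(trim_history(history, max_tokens=12))
```

In this toy run, the oldest message falls outside the budget, which is exactly how details from early in a long conversation get lost.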

#2 Bias and Ethical Issues

Another major concern with generative AI interfaces is the potential for bias and ethical issues. Keep in mind that people provide the training data for these digital products, and this data may have biases that show up in the results.

For example, if a model trains on data that mostly represents one group of people, it might create content that reinforces those biases.

The result can be inaccurate outputs that may even spread harmful stereotypes and discrimination. One survey reports that 43% of AI developers see bias as a big problem in AI development, highlighting how serious this challenge is.

#3 Limited User Control Over Output

There is no denying that generative AI models can produce impressive results. However, users typically have limited control over the output. This can be frustrating for users who want to customize the content to their specific needs or preferences.

For example, a user might want the AI to create text in a specific style or tone, but the model doesn’t give the right result. This lack of control makes the AI interface less useful and slows down its use in different applications.

#4 Privacy and Security Concerns

Finally, privacy and security concerns are significant limitations that generative AI interfaces present. These models handle and keep a lot of data, so there’s always a risk of data breaches and privacy issues.

Also, because the AI might use sensitive information to train or create personalized content, we should ask ethical questions about how to manage user data. Addressing these concerns becomes important to ensure the responsible development and deployment of generative AI technologies.

Solutions to Overcome Limitations

You can unlock the true potential of generative AI models only after addressing such limitations. Developers need to implement effective solutions that enhance contextual understanding, mitigate bias and ethical concerns, give users more control over the generated content, and protect user data.

Let’s see how.

#1 Enhancing Contextual Understanding

Improving the ability of the AI interface to maintain context calls for a strategic approach. Begin by fine-tuning the models on datasets or tasks specific to your domain, so they can learn patterns and connections that fit the intended context.

Next, you can add memory mechanisms that help models retain information from past interactions and use it in future responses. Architectures such as recurrent neural networks carry state from one step to the next, and transformers attend over everything that fits in the context window, so storing earlier exchanges and feeding them back in is a common way to extend that memory. Adding external knowledge sources, like encyclopedias or databases, also gives AI models access to a lot of facts, making it easier for them to create relevant content.
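As a rough illustration of adding an external knowledge source, the sketch below looks up matching facts and places them in the prompt before the question. The knowledge base contents, the function names, and the keyword-overlap scoring are all hypothetical; a real system would more likely query a database or a search index.

```python
import re
from typing import Dict, List, Set, Tuple

# Hypothetical in-memory knowledge base used only for this example.
KNOWLEDGE_BASE: Dict[str, str] = {
    "returns": "Customers can return items within 30 days of delivery.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "warranty": "All devices include a one year limited warranty.",
}


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, top_k: int = 1) -> List[str]:
    """Return up to top_k passages that share the most words with the question."""
    question_words = tokenize(question)
    scored: List[Tuple[int, str]] = []
    for topic, passage in KNOWLEDGE_BASE.items():
        overlap = len(question_words & (tokenize(passage) | {topic}))
        if overlap > 0:
            scored.append((overlap, passage))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [passage for _, passage in scored[:top_k]]


def build_prompt(question: str) -> str:
    """Prepend retrieved facts so the model can ground its answer in them."""
    facts = retrieve(question)
    context = "\n".join(f"- {fact}" for fact in facts) or "- (no matching facts found)"
    return f"Use these facts when answering:\n{context}\n\nQuestion: {question}"


print(build_prompt("How long does shipping take?"))
```

The resulting prompt would then be sent to whichever model the interface uses, which is outside the scope of this sketch.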

Let’s not forget that lengthy interactions only make maintaining context even more challenging. This is where you can use techniques like summarizing and topic modeling to find the main points of a conversation and focus on important information.

Summarizing past conversations reduces the mental effort for users and keeps the AI focused on the current topic. Using dialogue management systems is another useful way to guide the conversation and make sure the model stays on topic without going off on irrelevant tangents.
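One way to picture the summarizing technique is a rolling summary: older messages are collapsed into a single line while the most recent ones are kept verbatim. The sketch below is an assumption-laden illustration; `first_sentence_summary` is a placeholder that simply keeps the first sentence of each message, where a real interface would call a summarization model.

```python
from typing import Callable, List


def first_sentence_summary(messages: List[str]) -> str:
    """Placeholder summarizer: keep only the first sentence of each message."""
    firsts = [m.split(". ")[0].rstrip(".") + "." for m in messages if m.strip()]
    return " ".join(firsts)


def compact_history(
    messages: List[str],
    keep_recent: int = 2,
    summarize: Callable[[List[str]], str] = first_sentence_summary,
) -> List[str]:
    """Replace all but the newest keep_recent messages with one summary line."""
    if len(messages) <= keep_recent:
        return list(messages)
    split = len(messages) - keep_recent
    older, recent = messages[:split], messages[split:]
    return ["Summary of earlier conversation: " + summarize(older)] + recent


history = [
    "I run a bakery in Leeds. We opened in 2019.",
    "I want a slogan for sourdough. It should be short.",
    "Make it playful.",
    "Avoid puns about dough.",
]

for line in compact_history(history):
    print(line)
```

Because the summarizer is passed in as a parameter, the placeholder can be swapped for a model-backed one without changing the rest of the logic.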

#2 Addressing Bias and Ethical Concerns

Mitigating bias and ensuring ethical AI development calls for using diverse datasets that represent a wide range of perspectives and experiences. Regular audits and evaluations of AI models can also help identify and address biases during development or deployment.
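A regular audit can start with something as simple as comparing how often each group receives a favorable outcome. The sketch below computes that gap on a tiny, made-up sample; the group labels, the data, and the 0.2 threshold are hypothetical, and this is only one of many possible fairness checks.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def positive_rate_by_group(records: List[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of favorable outcomes for each group in the audit sample."""
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for group, favorable in records:
        totals[group] += 1
        if favorable:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(rates: Dict[str, float]) -> float:
    """Difference between the best and worst treated groups (0.0 means equal)."""
    if not rates:
        return 0.0
    return max(rates.values()) - min(rates.values())


# Made-up audit sample: (group label, whether the model output was favorable).
audit_sample = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

rates = positive_rate_by_group(audit_sample)
gap = parity_gap(rates)

GAP_THRESHOLD = 0.2  # hypothetical tolerance; choose one that suits your use case
if gap > GAP_THRESHOLD:
    print(f"Review needed: favorable-outcome gap between groups is {gap:.2f}")
```

A large gap does not prove the model is biased on its own, but it is a useful signal that a closer human review is warranted.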

AI developers should also establish clear ethical guidelines and follow them to ensure responsible use of the technology. Monitoring AI outputs is another crucial step in identifying and addressing ethical issues.

Developers who regularly review AI-generated content against transparent guidelines can better identify any biases or harmful stereotypes and handle issues promptly and effectively. One study reports that regular bias checks can cut AI errors by up to 35%, helping to ensure ethical AI development.

#3 Improving User Control and Customization

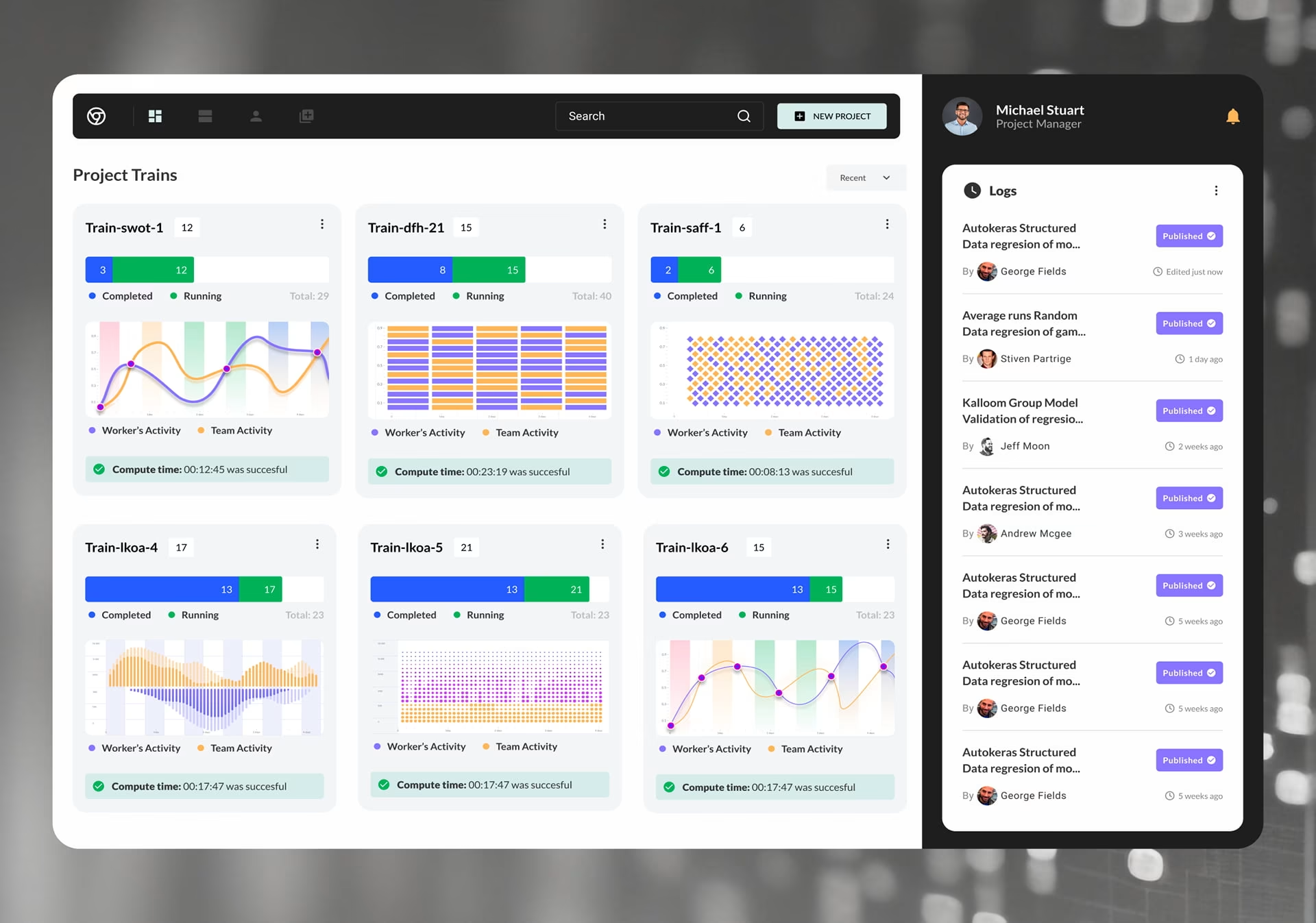

Building interactive features into the generative AI UI/UX design can give users more control over the generated output.

Consider adding features that let users give instant feedback, allowing the AI model to change its output based on their preferences. For example, users could rate the quality of the content or suggest ways to improve it. This feedback can then help refine the AI model’s algorithms for more tailored results.

A reinforcement learning approach can also work wonders in improving user control and customization. The AI model can learn from user corrections over time, adjusting its behavior to produce more accurate and relevant results. For instance, if a user repeatedly corrects the AI model’s responses, the model should learn to adjust its approach, so the generated content aligns more closely with the user’s expectations.
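As a much simpler stand-in for full reinforcement learning, the sketch below uses an epsilon-greedy bandit to learn which output style a user prefers from their ratings. The class name, the style presets, and the 1 to 5 rating scale are assumptions made for illustration; it does not retrain the underlying model, it only learns which preset to pick.

```python
import random
from typing import Dict, List


class StylePreferenceLearner:
    """Epsilon-greedy bandit that learns a user's preferred output style."""

    def __init__(self, styles: List[str], epsilon: float = 0.1, seed: int = 0) -> None:
        self.styles = list(styles)
        self.epsilon = epsilon  # chance of trying a random style to keep exploring
        self.rng = random.Random(seed)
        self.counts: Dict[str, int] = {style: 0 for style in self.styles}
        self.avg_rating: Dict[str, float] = {style: 0.0 for style in self.styles}

    def best(self) -> str:
        """Style with the highest average rating so far."""
        return max(self.styles, key=lambda style: self.avg_rating[style])

    def choose(self) -> str:
        """Mostly pick the best-rated style, occasionally explore another one."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.styles)
        return self.best()

    def record(self, style: str, rating: float) -> None:
        """Update the running average rating for a style after user feedback."""
        self.counts[style] += 1
        n = self.counts[style]
        self.avg_rating[style] += (rating - self.avg_rating[style]) / n


learner = StylePreferenceLearner(["formal", "casual", "playful"])

# Hypothetical feedback: (style shown to the user, rating from 1 to 5).
feedback = [("formal", 2), ("casual", 4), ("playful", 5), ("playful", 4), ("formal", 1)]
for style, rating in feedback:
    learner.record(style, rating)

print(learner.best())
```

After a handful of ratings, the interface would default to the style this user rates most highly while still occasionally trying the others.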

#4 Ensuring Privacy and Data Security

The need to protect user privacy and data security in generative AI development and deployment is a no-brainer. Secure data handling practices, including encryption and anonymization, are a must to prevent unauthorized access to sensitive information.
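To show what anonymization can look like in practice, the sketch below redacts email addresses and phone numbers from a prompt and replaces a user ID with a keyed hash before anything is logged. The regular expressions and function names are illustrative assumptions and will not catch every form of personal data; encryption of stored data is a separate step that is not shown here.

```python
import hashlib
import hmac
import re

# Illustrative patterns only: real personal data takes many more forms.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone numbers with neutral placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Derive a stable pseudonym from a user ID using a keyed hash (HMAC-SHA256)."""
    digest = hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


prompt = "Email dana@example.com or call +1 (555) 010-9999 today."
print(redact(prompt))

# The key below is a placeholder; keep a real key in a secrets manager.
print(pseudonymize("user-42", secret_key=b"replace-with-a-real-secret"))
```

The same user ID always maps to the same pseudonym, so usage can still be analyzed per user without storing the original identifier.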

Complying with the privacy laws that apply in your jurisdiction, such as the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA), is crucial. Meeting data protection standards and conducting regular security checks can help find and fix weaknesses in the AI interface, lowering the risk of data breaches.

Compliance appears to pay off: one study reports that organizations can see up to a 70% drop in data breaches by following privacy laws. Focusing on privacy and security helps developers gain users’ trust and ensures the ethical use of generative AI technologies.

Future Trends in Generative AI Interfaces

Like other new technologies, generative AI is changing quickly, and there are many exciting trends to watch in the coming years. One promising area is multi-modal AI, which combines different types of data—like text, images, and audio—to create advanced and useful results for everything from content creation to medical diagnosis.

Self-improving models are another forthcoming development. What makes them so exceptional is that these models can learn from their mistakes and constantly improve their performance over time. The result? More accurate and reliable AI systems that adapt to changing circumstances and user preferences.

More intuitive interfaces will enhance user experience and make generative AI technologies more accessible to a wider audience.

Generative AI technology is estimated to grow at a rate of 35.6% over the next ten years. We can expect major improvements in how AI interacts with people, along with better and easier-to-use interfaces, leading to new and creative applications in various industries.

Conclusion and Next Steps

Generative AI interfaces are changing how we work, live, and play, but we need to overcome their limitations to fully unlock their potential. Key areas to focus on include improving understanding of context, addressing bias and ethical issues, enhancing user control and customization, and ensuring privacy and data security for better and easier-to-use AI systems.

Continuous improvement is the key here. Developers and organizations must stay up-to-date with the latest advancements in the field. By combining that with the solutions this guide suggests, organizations can greatly improve their experience with generative AI and leverage its capabilities for innovation more effectively.

If you need an experienced UX design agency to help you use generative AI technology effectively, Fuselab Creative is the right choice. Our time-tested principles and expert technicians can help you successfully navigate this emerging technology with ease. Connect with our tech team today to discuss your needs.