AI UX and UI design in regulated industries: a 2026 guide for healthcare, fintech, and government teams

AI design for regulated industries is the practice of building AI-powered interfaces in sectors such as healthcare, finance, and government. These sectors are governed by frameworks and regulators such as HIPAA, the FDA, FFIEC, Section 508, and FedRAMP, which require compliance-aligned features in the interface itself. As AI adoption grows, regulation is growing with it: according to the Stanford HAI 2025 AI Index Report, FDA-cleared AI-enabled device software functions grew from 6 in 2015 to 223 in 2023, and 59 federal AI regulations were issued in 2024 alone, more than double the year before.

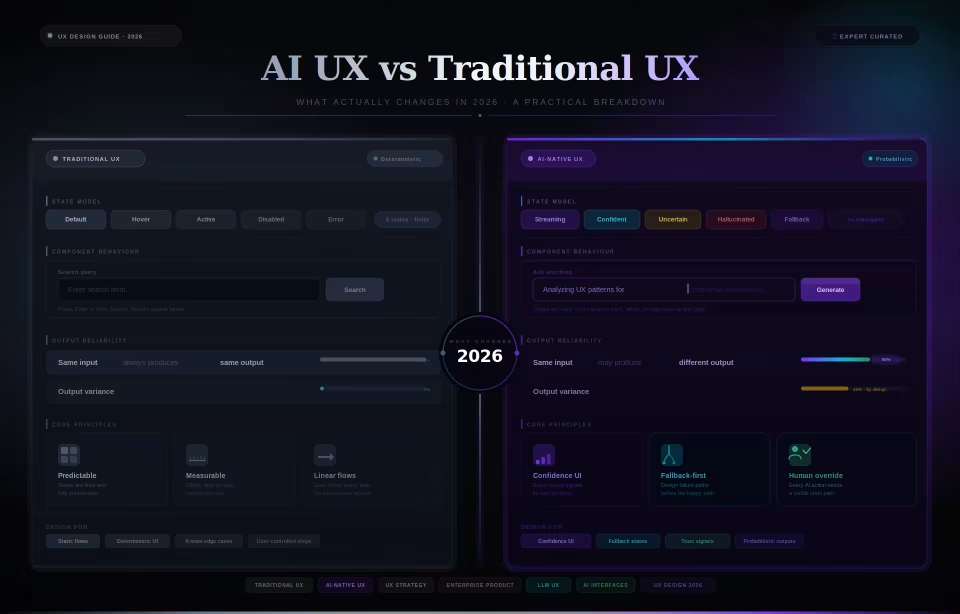

What AI design for regulated industries actually requires in 2026

AI design for regulated industries is the practice of building AI-powered interfaces in healthcare, finance, and government, where regulatory frameworks shape the interface itself rather than only the back-end. The discipline became distinct in 2024 and 2025 as the FDA, OMB, and state legislatures issued AI-specific requirements that changed what designers must surface in the workflow. Product owners, CDOs, and design leads building AI tools in regulated sectors are the audience.

Here’s what healthcare, fintech, and government have in common that most other industries don’t: in all three, a regulatory body has authority over what the interface must show, what it must store, and how. The decisions made by software are subject to formal review, audit, and, occasionally, legal consequences. Think about what that actually means. A general AI product can treat the audit log as a backend concern, tuck it away in a database, and move on. A clinical decision support tool or a credit underwriting assistant can’t. The regulator reviews what the user saw, what the user did, and what was recorded. That’s a design problem.

The regulatory environment shifted significantly in 2024 and 2025, and most design teams haven’t fully caught up with what that means in practice. In December 2024, the FDA published its final guidance titled “Marketing Submission Recommendations for a Predetermined Change Control Plan for Artificial Intelligence-Enabled Device Software Functions”. The document defines how AI medical device manufacturers must plan and document model changes and impact throughout their products’ lifecycles. A month later, in January 2025, the agency issued a draft guidance, “Artificial Intelligence-Enabled Device Software Functions: Lifecycle Management and Marketing Submission Recommendations”, which lays out a full approach to lifecycle management, including guidance on addressing bias, transparency, and inclusivity.

While federal oversight matures, state-level legislation has become the immediate hurdle. Designers must track the specific status of these bills to avoid building for ghost regulations, meaning bills that were proposed but never became law. For instance, Utah’s Artificial Intelligence Policy Act (2024) requires proactive disclosure. In California, the high-profile SB 1047 was vetoed in late September 2024, the same week Governor Newsom signed AB 3030, marking a state-level shift away from broad model-level liability and toward sector-specific transparency rules. Other states have AI-specific laws as well: Illinois’s HB 3773 prohibits discriminatory AI use in employment decisions such as hiring, promotion, or discharge, and Nevada’s AB 406 (2025) restricts the use of AI in mental and behavioral healthcare delivery.

Put all of that together, and you realize that what’s at stake is not abstract. Compliance is now a design issue, not a backend concern. The cost of not designing for this environment shows up either as a compliance review failure (the interface gets flagged) or, worse, as users abandoning the tool altogether. Both ultimately lead to an expensive, time-consuming rebuild of core workflows.

The three constraints that change the design playbook

Designing AI for regulated industries adds three constraints that don’t exist in this form in general AI product design: every AI decision must be auditable through a visible interface element (not a silent backend log); every output must follow patterns the relevant regulator can actually recognize and examine; and every workflow must include explicit accountability mechanisms for documenting who decided what.

Auditable decision-making is the constraint most teams discover too late. The assumption is that a backend audit log satisfies the requirement. It doesn’t. Regulators in healthcare, finance, and government inspect what the user saw when they made a decision; what the AI showed them; how confident the system was; what data fed into that output; and what the user did next. They inspect that through the interface, in the context of a workflow. The FDA’s January 2025 draft guidance makes this concrete: submissions for AI-enabled device software functions (AI medical devices) must document the human-AI workflow, meaning the interface must capture and reflect, in a structured way, how a human and the AI system interact at each decision point. That’s a design requirement, not a backend logging requirement.
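One way to make this concrete is to treat each decision point as a structured record that the interface both displays and stores. The sketch below is a minimal illustration, not a regulatory schema: the `DecisionRecord` name, its fields, and the sample values are all hypothetical, chosen only to mirror the things a reviewer is described as inspecting above.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical snapshot of one human-AI decision point."""
    shown_output: str        # what the AI showed the user
    confidence: float        # how confident the system was (0.0 to 1.0)
    input_sources: tuple     # what data fed into that output
    user_action: str         # what the user did next
    user_id: str             # who made the decision
    recorded_at: datetime    # when the decision was captured (UTC)

    def summary(self) -> str:
        """Render the one-line view a reviewer would see in the workflow."""
        sources = ", ".join(self.input_sources)
        return (
            f"{self.recorded_at:%Y-%m-%d %H:%M} UTC | {self.user_id} "
            f"{self.user_action} '{self.shown_output}' "
            f"(confidence {self.confidence:.0%}; inputs: {sources})"
        )


# Illustrative values only; not taken from any real system.
record = DecisionRecord(
    shown_output="Order HbA1c test",
    confidence=0.87,
    input_sources=("lab history", "medication list"),
    user_action="accepted",
    user_id="clinician-042",
    recorded_at=datetime(2026, 1, 15, 9, 30, tzinfo=timezone.utc),
)
print(record.summary())
```

The point of the design is that the stored record and the on-screen summary come from the same object, so what the reviewer reads is what the user saw.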

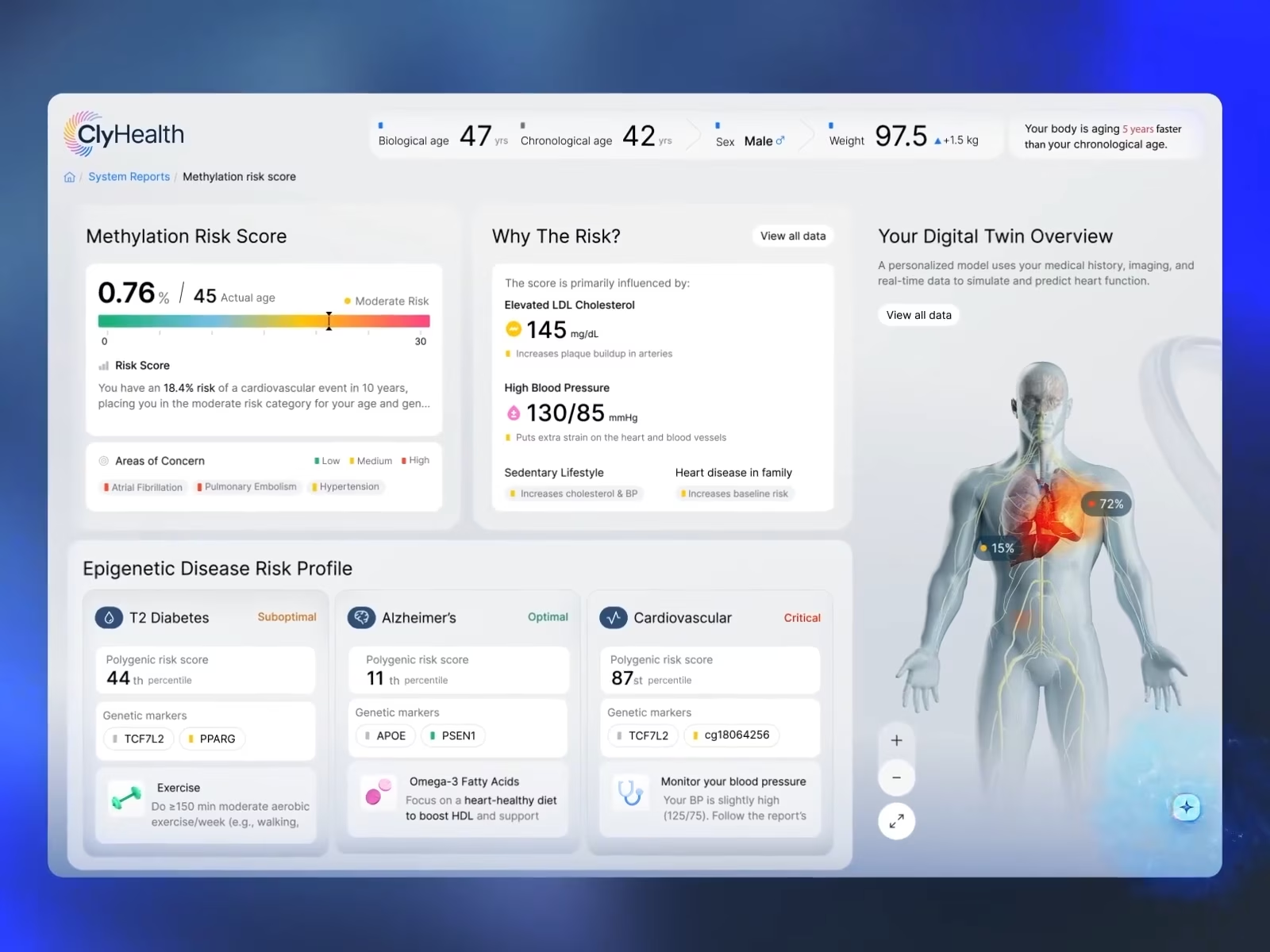

In our work on the ClyHealth clinical AI interface, the design pattern that produced an auditable workflow was the inverse of what most AI products do: instead of presenting a ranked list of probabilistic options, the interface surfaced a single recommendation with visible confidence and a one-tap override. The structural simplicity is what made the audit trail clean enough to hold up in chart documentation. The detailed treatment of that pattern is in the healthcare section below.

The second constraint is regulator-aligned UX patterns. The biggest mistake design teams make is to borrow interaction patterns from consumer AI, such as conversational interfaces, vague confidence indicators, or expandable reasoning panels. These work when the stakes of a wrong answer are low, and the user is the only reviewer. In regulated contexts, the regulator also serves as a reviewer. A healthcare professional doesn’t need a chatbot to explain probability ranges in conversation. They need confidence mapped to clinical documentation formats. A financial reviewer needs a structured explanation that satisfies ECOA’s specific-principal-reason standard. A government auditor needs a decision record, not a chat history. The question isn’t whether you have to show AI confidence ratings, override paths, or rationale surfacing; it is whether the workflow itself follows a pattern that supports regulatory reviews and audits.

The third constraint is explicit accountability: every AI-influenced decision in a regulated context needs a clear record of which human authorized, accepted, or overrode it. That record cannot live only in a database; it has to be structurally visible in the interface, as part of the workflow. In practice, the override path is not a power-user feature or an edge-case flow. It is the credibility mechanism. It’s what lets a clinician, a financial reviewer, or a government case worker trust the AI’s output enough to act on it. Most teams design it last, after the primary flow is built and tested. That sequencing produces an override path that doesn’t hold up under compliance review, because it wasn’t designed as a primary path. Design it first.

AI in healthcare UX: HIPAA, FDA, audit-trail

AI healthcare UX in 2026 sits at the intersection of HIPAA, FDA guidance for AI-enabled device software, and Section 508 accessibility requirements. HIPAA and FDA push the interface toward visible audit trails and documented overrides; Section 508 layers accessibility requirements on top of every AI-generated output. The core challenge is no longer simply generating accurate AI outputs. It is about designing workflows in which every recommendation can be reviewed, overridden, documented, defended, and made accessible.

Healthcare AI teams often assume the difficult part is the model. On the contrary, the core challenge lies in designing an interface that marries trust with multiple regulatory requirements while remaining usable by clinicians under time pressure. HIPAA governs how patient data is stored, transmitted, and recorded. Section 508 requires that federal healthcare (and government-linked) tools meet WCAG 2.0 Level AA accessibility standards. And then there’s the FDA, which in the last 18 months has published two documents that changed what “compliant” means for clinical AI interfaces, and most design teams are still catching up. Unlike in traditional enterprise software, all three frameworks now appear directly in the interface layer in regulated sectors.

The FDA’s 2024–2025 guidance cycle changed the conversation significantly here. The December 2024 final guidance on Predetermined Change Control Plans (PCCPs) formalized the documentation of planned post-deployment model updates. That alone has major UX implications, as interface behavior can no longer be treated as static when the underlying model evolves over time.

Under the guidance, a PCCP has three components, and each carries a design implication. The Description of Modifications requires manufacturers to document every planned change to the model, which, for designers, implies that the interface should expose the current model version in each AI output so reviewers can map each output to the corresponding modification record. The Modification Protocol requires evidence that performance has been validated across the populations the model serves, which the interface translates into confidence displays grounded in measured subgroup performance rather than a single generic percentage. The Impact Assessment requires documentation of how each change affects safety and effectiveness, which makes the override path a primary interaction whose every use produces a documented record.

For designers, this means the model card is a designed interface object, not a legal footnote. It tells the clinician what the model was trained on, where it performs well, and where it doesn’t, and it needs to be accessible within the clinical workflow, not buried in a help section.
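The idea of a confidence display grounded in measured subgroup performance, tagged with the model version, can be sketched as follows. This is an assumption-heavy illustration: `SubgroupPerformance`, `confidence_label`, the choice of sensitivity as the metric, and every number are hypothetical and do not come from the FDA guidance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SubgroupPerformance:
    """Hypothetical validated performance for one patient subgroup."""
    subgroup: str
    sensitivity: float   # measured during validation, 0.0 to 1.0
    sample_size: int     # number of validation cases in the subgroup


def confidence_label(model_version: str, performance: dict, subgroup: str) -> str:
    """Build the label shown next to an AI output.

    When no validation data exists for the subgroup, the label says so
    explicitly instead of falling back to a generic percentage.
    """
    measured = performance.get(subgroup)
    if measured is None:
        return f"Model {model_version} | not validated for: {subgroup}"
    return (
        f"Model {model_version} | sensitivity {measured.sensitivity:.0%} "
        f"for {measured.subgroup} (n={measured.sample_size})"
    )


# Illustrative numbers only.
performance = {"adults 65+": SubgroupPerformance("adults 65+", 0.91, 1200)}
print(confidence_label("2.3.1", performance, "adults 65+"))
print(confidence_label("2.3.1", performance, "pediatric"))
```

Showing "not validated" for an unmeasured subgroup is a deliberate choice in this sketch: a missing number is more honest than a borrowed one.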

The January 2025 draft guidance on lifecycle management widened the frame further by introducing human-AI workflows and the Total Product Life Cycle, with direct implications for design teams. It recommends that FDA submissions include wireframes showing what information is presented to users, when, and how; that submissions show how AI outputs fit into the clinical workflow; and that transparency be incorporated from the earliest stage of device design through decommissioning. It also reemphasizes that model updates and bias monitoring across patient subgroups should remain visible throughout the product lifecycle.

In our work on the ClyHealth clinical AI interface, we observed that clinicians don’t want a list of probabilistic options to deliberate over. They want a single recommendation they can verify or reject. We implemented the one-recommendation-per-step pattern with confidence always visible and one-tap override. No ranked option lists. No multi-step confirmation flows. The clinician sees the suggestion and confidence level, accepts or overrides it, and every interaction produces a structured record that maps directly to what the FDA means by human-AI workflow documentation. That simplicity wasn’t for aesthetics; it was a design decision that made the audit trail clean enough to withstand compliance reviews. Clinicians weren’t asking for options or flexibility. They wanted faster verification with a record they could stand behind. The interface gives them that, and the regulators get the documentation they need, too.
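A rough sketch of the one-recommendation-per-step pattern: each step has exactly two exits, accept or override, and both return the same structured outcome. This is not the ClyHealth implementation; the class names, function names, and sample values are hypothetical, and requiring a reason on override is an assumption made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Recommendation:
    step: str
    suggestion: str
    confidence: float   # always shown alongside the suggestion


@dataclass(frozen=True)
class StepOutcome:
    step: str
    suggestion: str
    confidence: float
    action: str                      # "accepted" or "overridden"
    final_value: str                 # what was actually entered
    override_reason: Optional[str]   # None when the suggestion was accepted
    clinician_id: str


def accept(rec: Recommendation, clinician_id: str) -> StepOutcome:
    """One tap: the suggestion becomes the final value."""
    return StepOutcome(rec.step, rec.suggestion, rec.confidence,
                       "accepted", rec.suggestion, None, clinician_id)


def override(rec: Recommendation, clinician_id: str,
             replacement: str, reason: str) -> StepOutcome:
    """One tap plus a reason: the clinician's value replaces the suggestion."""
    if not reason.strip():
        raise ValueError("An override must carry a documented reason")
    return StepOutcome(rec.step, rec.suggestion, rec.confidence,
                       "overridden", replacement, reason.strip(), clinician_id)


# Illustrative values only.
rec = Recommendation("dosage", "500 mg twice daily", 0.82)
accepted = accept(rec, "clinician-042")
changed = override(rec, "clinician-042", "250 mg twice daily", "Reduced renal function")
print(accepted.action, "|", changed.action, "|", changed.override_reason)
```

Because there is no third path, every step ends in a record that names the clinician, the suggestion, and the outcome.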

The failure we see most often in healthcare AI isn’t a misunderstanding of HIPAA; teams know HIPAA. It’s discovering too late that backend logs don’t suffice for regulatory review and that the interface doesn’t support the review-friendly workflow needed to pass compliance audits. Another consistent mistake is borrowing confidence indicators from consumer AI products that don’t map cleanly to clinical documentation or charts. Teams also often underestimate how strongly Section 508 requirements shape clinician-facing systems in state and federally connected healthcare contexts.

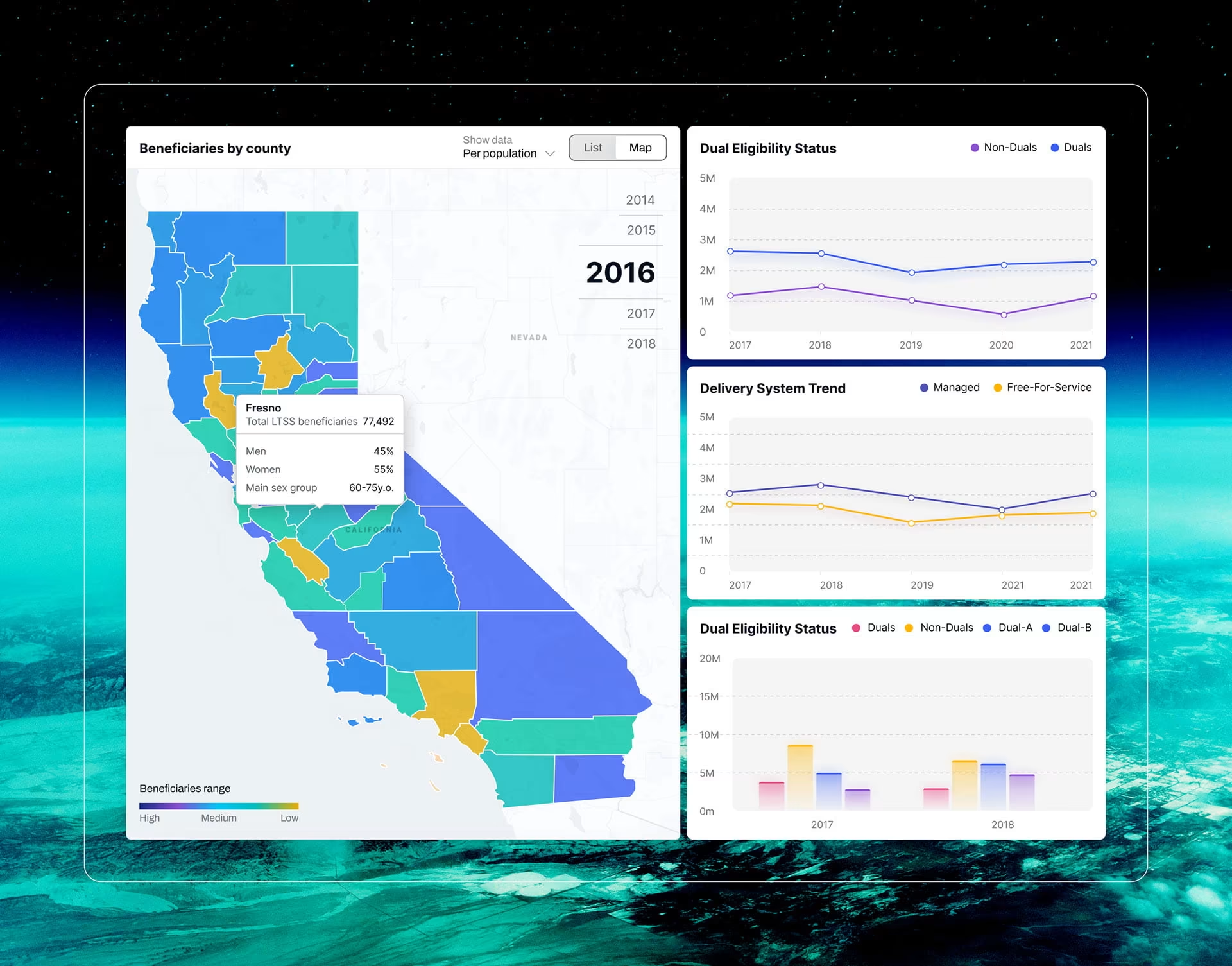

In our work on healthcare UX projects such as DHCS Medi-Cal, we found that Section 508 requirements consistently surfaced later than they should have, mostly because they were categorized as an accessibility workstream rather than a core UX architecture decision. Streaming AI output, dynamic content rendering, and AI-generated alt text all require architectural choices. By the time the accessibility review happened, those choices were already locked. Treating 508 as a cleanup pass is how you end up rebuilding core interaction patterns under a compliance deadline.

AI in fintech UX: FFIEC, audit logs, explainability

AI fintech UX means designing interfaces where every AI-influenced credit decision, fraud flag, or loan recommendation is explainable in terms a financial examiner can inspect. Regulatory frameworks such as ECOA, Regulation B, and CFPB guidance set very specific requirements for what the interface must display and document when AI is involved in a credit decision. Additionally, FFIEC has laid out detailed training modules for its examiners and published the IT Examination Handbook to govern how compliance is reviewed, which is why explainability cannot be a UX afterthought.

The regulatory framework for fintech AI spans FFIEC (Federal Financial Institutions Examination Council) examination guidance, ECOA (Equal Credit Opportunity Act), and CFPB (Consumer Financial Protection Bureau) circulars. These aren’t parallel frameworks; they stack. FFIEC guidance shapes how financial institutions are examined operationally. ECOA governs what must be communicated to applicants when credit is denied or conditions are changed. And the CFPB has made explicit, in two circulars, that those ECOA obligations don’t soften just because an AI model made the decision. SEC and FINRA obligations shape additional documentation expectations depending on the product category. Then state-level AI legislation starts stacking on top of federal requirements.

ECOA’s requirement that creditors provide “specific principal reasons” for adverse actions becomes especially important here. A system cannot simply say, “The model flagged this application.” The workflow has to show the “why” in a way a reviewer can understand. And that changes the interface significantly. A vague confidence score or conversational explanation might feel great in a product demo, but it doesn’t stand up to the rigor of an audit. CFPB made this explicit for AI models specifically. In its 2022 and 2023 circulars, CFPB stated that creditors using complex algorithms, including AI and ML models, in any aspect of a credit decision must disclose the specific principal reasons for an adverse action. It went further, prohibiting creditors from using complex algorithms if doing so would prevent them from providing specific, accurate reasons. What it boils down to is that a black-box AI model is, by definition, non-compliant if it can’t display specific reasons in its interface. For the UX design team, this means that when AI influences a credit decision, the reason displayed isn’t a hover state, a tooltip, or a help article. It’s a documented part of the workflow and a structured output that captures the specific reasons for this decision.
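As a sketch of what "a structured output that captures the specific reasons" might look like, the function below ranks applicant-specific reasons and refuses to produce a result when none exist. Everything here is hypothetical: the `ReasonCandidate` type, the `contribution` score (assumed to come from whatever attribution method the model team uses), the default limit of four, and the sample reasons.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReasonCandidate:
    description: str      # applicant-specific reason text
    contribution: float   # assumed score: how strongly it pushed toward denial


def principal_reasons(candidates, limit=4):
    """Return the most influential specific reasons, strongest first.

    Raises instead of returning a vague placeholder, so the workflow
    cannot show an adverse action without specific reasons attached.
    """
    specific = [c for c in candidates
                if c.contribution > 0 and c.description.strip()]
    if not specific:
        raise ValueError("No specific reasons available for this decision")
    ranked = sorted(specific, key=lambda c: c.contribution, reverse=True)
    return [c.description for c in ranked[:limit]]


# Illustrative candidates only.
candidates = [
    ReasonCandidate("Debt-to-income ratio above lender threshold", 0.41),
    ReasonCandidate("Two payments over 60 days late in past 12 months", 0.33),
    ReasonCandidate("Length of credit history", 0.08),
    ReasonCandidate("On-time rent payments", -0.12),  # helped the applicant
]
print(principal_reasons(candidates, limit=2))
```

The reviewer-facing screen would then render this list as primary content in the decision step rather than as a tooltip.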

In our work on regulated financial data interfaces with comparable compliance constraints, such as Fiserv’s financial data tools, we observed that audit trail and explainability surfaces are solvable design problems: the underlying data and signals exist, but entrenched interface UX practices often bury them or render them vague. The same pattern shows up in ECOA explainability. The model can usually provide specific principal reasons, but most fintech UX patterns surface them as fine print or post-decision letter content rather than as primary workflow content that the reviewer interacts with directly.

The built-in explainability also changes how audit logs need to behave in financial products. Backend logging alone is not enough because reviewers need to see what the AI flagged, why it flagged it, the specific principal reasons, and the reviewer’s decision. That record is what an FFIEC examiner or CFPB investigator will ask to see. It lives in the interface, not in a database query. The audit trail becomes part of the interface architecture.

AI in government UX: Section 508, FedRAMP, decision documentation

Government AI interfaces carry a regulatory stack that most design teams underestimate: Section 508 for accessibility, FedRAMP for cloud-deployed systems, and OMB memoranda requiring agencies to document how AI influences decisions affecting the public. The central design challenge is to build interfaces in which AI-assisted decisions remain accessible, reviewable, and accountable at every step of the workflow.

The regulatory framework for government AI spans three requirements. Section 508 of the Rehabilitation Act requires that content, including AI-generated content, meet WCAG 2.0 Level AA at a minimum. FedRAMP authorization is required for cloud-delivered AI services to federal agencies, with authorization levels tied to the sensitivity of the data the system processes. And OMB Memorandum M-24-10, “Advancing Governance, Innovation, and Risk Management for Agency Use of Artificial Intelligence”, required each agency to publish its AI use case inventory annually and identify which AI use cases are safety- or rights-impacting. OMB replaced M-24-10 with M-25-21 in April 2025; the newer memo keeps the inventory requirement and applies minimum risk management practices to what it calls high-impact AI, so teams should confirm which memo governs their engagement.

In the AI context, Section 508 is where the genuinely hard design problems live. AI-generated content, such as text and image outputs and dynamic interface elements, must meet the same accessibility standards as any other federal content. That’s clear enough in principle. In practice, it raises design challenges that don’t yet have settled answers.

Take streaming text output. Most AI interfaces stream responses token-by-token rather than waiting for a complete response before rendering. For users who rely on screen readers, that creates a chaotic and confusing experience. Teams building government AI tools currently face a choice between two bad options: announce text after each token (individual words and fragments get announced extremely quickly, producing unusable screen reader output) or announce only after the complete output has arrived (which delays the response, leaving users wondering if something is wrong). There is no established WCAG standard for streaming-text announce-when-ready logic in AI interfaces. This is an open design problem that regulated-industry teams have to solve, not borrow from a best-practices document.

AI-generated alt text has a parallel problem: alt text generated by the same model that produces the primary content cannot provide accurate accessibility validation, because the model’s errors and biases will appear in both the output and its description, which means someone else needs to be in the validation loop.
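For the streaming problem, one possible middle ground between per-token and whole-response announcements is to buffer tokens and announce one sentence at a time. The sketch below is only one approach under stated assumptions, not a WCAG-endorsed technique: the `announce_chunks` function is hypothetical, and it assumes a sentence ends at `.`, `!`, or `?` followed by whitespace, which will not hold for every language or for abbreviations.

```python
import re
from typing import Iterable, Iterator

# A sentence boundary is terminal punctuation followed by whitespace.
# Requiring the whitespace avoids splitting inside decimals such as "2.5".
SENTENCE_END = re.compile(r"[.!?]\s+")


def announce_chunks(tokens: Iterable[str]) -> Iterator[str]:
    """Group streamed tokens into sentence-sized announcements."""
    buffer = ""
    for token in tokens:
        buffer += token
        # Emit every complete sentence currently sitting in the buffer.
        while True:
            match = SENTENCE_END.search(buffer)
            if match is None:
                break
            yield buffer[:match.end()].strip()
            buffer = buffer[match.end():]
    # Flush whatever remains once the stream ends.
    if buffer.strip():
        yield buffer.strip()


stream = ["The ", "claim ", "was ", "approved", ". ",
          "Next ", "step", ": ", "review", "."]
for sentence in announce_chunks(stream):
    print(sentence)
```

In a web interface, each yielded sentence would typically be appended to a polite ARIA live region, so the screen reader announces complete sentences while the visual text continues to stream.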

Decision documentation under OMB M-24-10 introduces the second major workflow shift. It requires agencies to identify which AI use cases are safety- or rights-impacting and to apply minimum risk management practices to those use cases. This includes training staff to oversee AI and step in when AI impacts rights or safety. In practice, this means that every AI-influenced workflow needs clear records showing which agency staff member reviewed the output, what decision they made, and why. The audit trail becomes part of the operational workflow itself.

As a GSA contract holder, we have navigated these requirements during procurement. In our work on government and government-adjacent data interfaces with similar compliance constraints, such as DHCS Medi-Cal and POGO data visualization, we observed that reviewers trusted systems more when workflow accountability was visible rather than hidden within administrative tooling, and users moved faster when they understood how decisions were documented.

Common mistakes when teams move from general AI design to regulated-industry AI

Most AI products in regulated industries fail in predictable ways. The failure isn’t usually technical. It’s the design assumption that compliance is someone else’s problem, handled somewhere else in the stack, and that the interface just needs to be usable.

The most expensive and most common mistake is treating compliance as a backend concern. Here’s what happens: you have a database with audit logs that record decisions, data encryption and a legal sign-off. You are sure that compliance is handled until the reviewer or auditor comes calling. That’s when you realize that regulators don’t want backend logs; they want to see exactly what the user saw when they made a decision. But the interface is not designed to show this, and a quick fix will not solve the problem. This leaves you and your design team rebuilding core workflows while users are already in the system, and the compliance clock is ticking. This high-cost failure pattern is entirely preventable if the audit trail is treated as a primary design surface from the first sprint.

The second mistake is transplanting general AI patterns directly into regulated environments. ChatGPT-style confidence phrasing, conversational personas, and generic confidence indicators all work well in consumer contexts where user expectations are minimal and the stakes of a wrong answer are low. In a healthcare setting, however, a doctor needs exact confidence scores, not “the model is fairly confident.” Think about it: would you agree to a medical procedure from a surgeon who described themselves as fairly confident in their skills?

The third mistake is designing for accessibility last. Section 508, WCAG 2.1 AA, and ADA requirements don’t retrofit cleanly onto AI interfaces. Streaming text output, dynamic content rendering, and AI-generated alt text all require interaction design that touches the interface’s core architecture, not just the visual layer. In our view, too many designers and developers still skip testing with screen readers themselves.

The fourth mistake is treating the model as the product. Some teams spend enormous energy on model selection, fine-tuning, and performance benchmarking, treating the interface as a thin presentation layer built quickly at the end. In regulated contexts, it’s exactly the opposite. Regulators don’t inspect only the model; they also inspect the interface and the workflow to check how AI was presented to the user and how the interaction went. Compliance review does not happen only in the backend. It happens in the workflow.

How regulated-industry teams should approach AI product design

Regulated-industry AI teams in 2026 need to treat compliance as a core UX design practice rather than a post-launch review process. The strongest products are designed with features such as audit trails, override paths, decision documentation, accessibility requirements, and regulator-aligned workflows integrated from the first sketch, rather than retrofitted later.

Start with user research informed by regulatory context, not the model. Before sketching a single screen, document the specific requirements that apply to your context: HIPAA, FDA, and Section 508 for healthcare; FFIEC, ECOA, and applicable state laws for fintech; Section 508, FedRAMP, and OMB requirements for government. Treat them like a brief for your design process.
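The brief can be as simple as a lookup that turns a sector into kickoff questions. The mapping below restates the frameworks named above; the `REGULATORY_BRIEF` and `kickoff_checklist` names and the checklist wording are hypothetical, and a real brief would need review by compliance counsel.

```python
# Frameworks per sector, as listed in the paragraph above.
REGULATORY_BRIEF = {
    "healthcare": ["HIPAA", "FDA guidance", "Section 508"],
    "fintech": ["FFIEC", "ECOA", "applicable state laws"],
    "government": ["Section 508", "FedRAMP", "OMB requirements"],
}


def kickoff_checklist(sector: str) -> list:
    """Turn a sector name into one kickoff question per framework."""
    frameworks = REGULATORY_BRIEF.get(sector.lower())
    if frameworks is None:
        raise KeyError(f"No regulatory brief defined for sector: {sector}")
    return [
        f"Document what {name} requires the interface to show, store, or record"
        for name in frameworks
    ]


for item in kickoff_checklist("Fintech"):
    print("-", item)
```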

Then design the audit trail, the override path, and the decision documentation as core workflows. Do not treat them as edge cases or the last screens in the deck. The override path is the credibility mechanism that lets a clinician, a financial reviewer, or a government caseworker trust the AI’s output enough to act on it. An AI tool is asking them to stake their professional judgment on a recommendation, and without visibility into and control over it, they won’t use the product.

Build regulator-lens validation into the design process, not after it. Standard usability testing validates whether a user can complete the task. Regulator-lens validation asks whether a reviewer inspecting this workflow could understand exactly what the AI showed, what the user did, and what was documented. This validation runs alongside design iterations, not as a response to a compliance finding.
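Regulator-lens validation can be partly automated by checking each recorded workflow against the three reviewer questions above. The sketch below assumes a decision record stored as a plain dictionary; the field names and the `regulator_lens_gaps` function are hypothetical, and a passing check is no substitute for an actual compliance review.

```python
# Each reviewer question maps to the record fields needed to answer it.
REVIEWER_QUESTIONS = {
    "what the AI showed": ("shown_output", "confidence"),
    "what the user did": ("user_action", "user_id"),
    "what was documented": ("rationale", "recorded_at"),
}


def regulator_lens_gaps(record: dict) -> list:
    """List the reviewer questions this record cannot answer."""
    gaps = []
    for question, fields in REVIEWER_QUESTIONS.items():
        missing = [f for f in fields if record.get(f) in (None, "")]
        if missing:
            gaps.append(f"{question}: missing {', '.join(missing)}")
    return gaps


# Illustrative record with no rationale captured.
incomplete = {
    "shown_output": "Flag application for manual review",
    "confidence": 0.64,
    "user_action": "overridden",
    "user_id": "reviewer-7",
    "rationale": "",
    "recorded_at": "2026-02-03T14:05:00Z",
}
print(regulator_lens_gaps(incomplete))
```

Running a check like this on prototype data during each design iteration surfaces missing fields before a reviewer does.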

Conclusion

The regulatory ground for AI in healthcare, finance, and government has shifted faster in the last eighteen months than in the previous five years combined. Teams that design the audit trail, override paths, and decision documentation from the start ship products that pass compliance review and earn user trust. Teams that bolt these on after launch are heading towards a costly and time-consuming rebuild.

Teams scoping their next regulated-industry AI product can review how Fuselab approaches enterprise AI interface design.

Frequently asked questions

What is AI design for regulated industries?

AI design for regulated industries is the practice of building AI-powered interfaces for healthcare, financial, and government products where regulatory frameworks require the interface itself to surface auditability, compliance-aligned patterns, and explicit accountability mechanisms. HIPAA, FDA, FFIEC, ECOA, Section 508, FedRAMP, and OMB requirements govern what the interface must show and document. Teams designing for these contexts treat those requirements as part of the workflow design itself.

How is regulated-industry AI design different from general AI product design?

Regulated-industry AI design adds three things that general AI design mostly ignores. Every AI decision needs an audit trail the user can actually see in the interface, not just a database log. Every output needs to follow patterns the regulator can inspect, because you are not designing for the user alone. And every workflow needs a clear record of who saw the AI’s recommendation, what they decided, and why.

How does AI healthcare UX differ from AI fintech UX?

AI healthcare UX must satisfy HIPAA, FDA, and Section 508 simultaneously, with audit trails that integrate into clinical chart documentation and override paths captured as structured clinical notes. AI fintech UX must satisfy FFIEC examinations, ECOA’s specific-principal-reasons requirement, and CFPB guidance, with audit logs surfaced to financial reviewers and decision documentation mapped to regulatory expectations. Both share the audit-trail and accountability pattern but differ in regulatory framework, user role, and required documentation format.

Is FedRAMP authorization required for all government AI products?

FedRAMP authorization is required when an AI product is delivered as a cloud service to federal agencies and handles federal information at the relevant impact level. The authorization tier (Low, Moderate, or High) depends on the sensitivity of the data the system processes. Because FedRAMP is also increasingly used as a baseline outside the federal context, for state and local government AI products, it is best to check the exact requirements with the contracting agency before making any major architecture decisions.
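As a rough illustration of how the tier follows from data sensitivity, federal systems are commonly categorized by rating confidentiality, integrity, and availability separately and taking the highest of the three (the FIPS 199 high-water mark). The `fedramp_baseline` function below is a hypothetical sketch of that rule only; the actual categorization is determined with the authorizing agency.

```python
LEVELS = ("Low", "Moderate", "High")  # ordered from least to most sensitive


def fedramp_baseline(confidentiality: str, integrity: str, availability: str) -> str:
    """Return the highest of the three impact ratings (high-water mark)."""
    ratings = (confidentiality, integrity, availability)
    for rating in ratings:
        if rating not in LEVELS:
            raise ValueError(f"Unknown impact level: {rating}")
    return max(ratings, key=LEVELS.index)


print(fedramp_baseline("Low", "Moderate", "Low"))
```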

How long does an AI design project for a regulated industry typically take?

In our experience, regulated-industry AI design projects take 5 to 9 months from research to ship-ready handoff, around 50% longer than a general AI design project. The additional time goes into regulatory mapping, audit trail and override path design, and compliance testing.

What should a regulated-industry AI design portfolio demonstrate?

A regulated-industry portfolio should show shipped products with visible audit trails, designed override paths, and structured decision documentation. This is evidence that the team has actually solved the compliance-as-design problem. Look for named projects in at least one regulated context, evidence of engagement with the relevant regulatory framework, and references to work that has undergone compliance review.

How do you choose an AI design agency for a regulated-industry product?

Choosing an AI design agency for a regulated-industry product means looking for documented experience with the specific regulatory framework that applies, named projects in the same or comparable industries, and a process that includes regulatory mapping at project kickoff rather than at compliance review. Agencies without a portfolio entry in the relevant regulated industry are general AI design agencies regardless of how strong their general AI work is. The cost of switching agencies mid-project after a compliance failure typically exceeds the cost difference of selecting the right specialist agency at the start.