Agent UX: designing UI for AI agents in 2026

UI design for AI agents, also called agent UX, is the practice of building interfaces where autonomous software systems take actions on behalf of users. It requires design patterns for transparency, status communication, override controls, and error recovery that traditional UI does not address. Gartner projects that 40% of enterprise applications will integrate task-specific AI agents by end of 2026, up from under 5% in 2025, and most of these implementations will need an interface layer that did not exist a year ago.

What AI agent interface design requires

Agent UX means building a visual and interactive layer for a system that acts autonomously rather than waiting for user commands. The interface must show what the agent is doing, explain why it chose a specific action, let the user override at any point, and recover gracefully when something goes wrong. Traditional form-and-button interfaces do none of this.

Most product teams approach agent projects with the same design process they use for conventional applications. They wire up screens, connect APIs, and add a chat component. What they skip is the transparency layer, the part that makes the agent’s reasoning visible and gives users a clear path to intervene. That missing layer is where most AI agent projects lose user trust within the first two weeks of production use.

We have built agent-adjacent interfaces across regulated industries where opaque system behavior creates compliance exposure. Our work with DHCS on the Medi-Cal enrollment system required every automated decision to be traceable by caseworkers who carried legal responsibility for outcomes. That constraint shaped the entire design approach, and it now applies to every enterprise agent handling consequential actions.

The shift is larger than most design teams acknowledge. Standard enterprise applications are built for human users clicking through interfaces. Agent-enabled applications need to serve two audiences simultaneously: the human who sets goals and monitors outcomes, and the software agent that interprets data, executes multi-step workflows, and interacts with external systems. Designing for one audience while ignoring the other produces interfaces that frustrate both.

Most competing guides on this topic stop at the conceptual shift. They describe principles and list considerations. What they skip is what happens when you sit down to design actual screens: how the transparency layer is structured, how override controls work at the step level, how confidence indicators are tested, and why most first attempts built on chatbot templates fail within weeks. That is what this guide covers.

Why traditional UI patterns fail for autonomous systems

Traditional UI assumes the user initiates every action and the system responds. AI agents reverse that relationship by initiating actions, making decisions, and changing state without being asked. An interface built on the traditional model has no pattern for showing unsolicited actions, no way to explain why they happened, and no path for the user to undo them without starting over.

The most common failure is what we call the “black box launch.” A team ships an agent with a clean interface showing inputs and outputs but nothing in between. The user asks the agent to reschedule a meeting but cannot see which conflicts it evaluated or why it chose Thursday over Wednesday. When the choice is wrong, the user has no information to correct it efficiently.

NNGroup’s research on explainable AI in chat interfaces found that users rarely verify the sources AI systems cite, despite claiming those citations increase their confidence. One participant said they trusted the chatbot because they could check its sources, then never clicked a single citation. The implication for agent design is direct: surface-level explanations do not build real trust. The interface must show reasoning at the decision level.

This is why the first design decision for an agent interface is not layout or navigation. It is how the system communicates what it is about to do, what it just did, and what the user can do about it. Every other design choice depends on getting that communication architecture right before touching a single screen.

When agent interfaces fail, the damage compounds differently than bugs in traditional software. A conventional app bug gets reported and patched. An agent that books the wrong flight or sends the wrong message causes the user to stop granting the system autonomy. Recovery requires not a fix but a redesigned interaction model that rebuilds the permission the user retracted. That is a harder problem than most teams anticipate.

Core design principles for AI agent interfaces

Four design principles separate functional AI agent interfaces from failed ones: transparency, user control, proactive status communication, and structured error recovery. Every agent interface that sustains adoption past the first month implements all four. Skip any one and users disengage within weeks.

Transparency means showing the agent’s reasoning at every decision point, not just the output. When an agent books a flight, the interface surfaces which options it evaluated, what criteria it ranked, and what tradeoffs it made. A confirmation screen showing only the booked flight is not transparency. It is a fait accompli. The difference between these two experiences determines whether users grant the agent more autonomy over time or less.

User control means override, pause, and redirect capabilities at every stage of a multi-step workflow. In our NASA mission dashboard work, operators needed to redirect automated monitoring sequences without restarting from the beginning. We built a step-level intervention model where any step could be paused or modified without affecting completed ones. That override architecture became the most valued part of the entire interface.

Proactive status means the agent communicates what it is doing before the user asks. Indicators like “searching three databases” or “comparing 14 flight options” maintain confidence during processing. Silence while the agent works is the fastest path to user anxiety. Even a two-line status update changes the experience of a thirty-second wait from “is it broken?” to “it is working on my request.”

Error recovery must explain what went wrong, provide context, and suggest a next step. Generic error messages with retry buttons are not sufficient for agent interfaces. The user needs to know whether the failure was in understanding the request, accessing the data, or executing the action, because the fix differs for each. We design error states as three-part messages: what happened, why, and what to try next.
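
The three-part error message described above can be sketched as a small data shape. This is a minimal illustration, not the structure used on any project named in this guide: the type names, the stage labels, and the `renderErrorState` function are all hypothetical.

```typescript
// Hypothetical names for illustration only; not taken from any real library.
// The three stages mirror the failure locations named in the text.
type FailureStage = "understanding" | "data_access" | "execution";

interface AgentErrorState {
  stage: FailureStage;
  whatHappened: string;
  why: string;
  nextStep: string;
}

// Assumed wording for each stage; a real product would write its own copy.
const STAGE_LABEL: Record<FailureStage, string> = {
  understanding: "request not understood",
  data_access: "data unavailable",
  execution: "action not completed",
};

// Produces the three lines of the message: what happened, why, what to try next.
function renderErrorState(e: AgentErrorState): string[] {
  return [
    `What happened (${STAGE_LABEL[e.stage]}): ${e.whatHappened}`,
    `Why: ${e.why}`,
    `Try next: ${e.nextStep}`,
  ];
}
```

Making `stage` a required field means the interface cannot fall back to a generic error without someone deciding which of the three failure locations applies.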

On our DHCS project, CMS traceability requirements meant every automated enrollment decision needed a logged rationale reachable without leaving the caseworker’s primary screen. That felt restrictive during the design phase. In testing, it turned out to be the feature caseworkers valued most, because it protected them during compliance audits. Regulated industries force design discipline that every agent interface benefits from, whether compliance demands it or not.

How agent design differs from chatbot and assistant design

Agents take consequential actions autonomously. Chatbots answer questions. Assistants execute commands with explicit approval. The interface requirements for each are different, and conflating them is how most teams ship the wrong design. A product built on a chatbot template will not function as an agent interface without architectural changes that touch every screen.

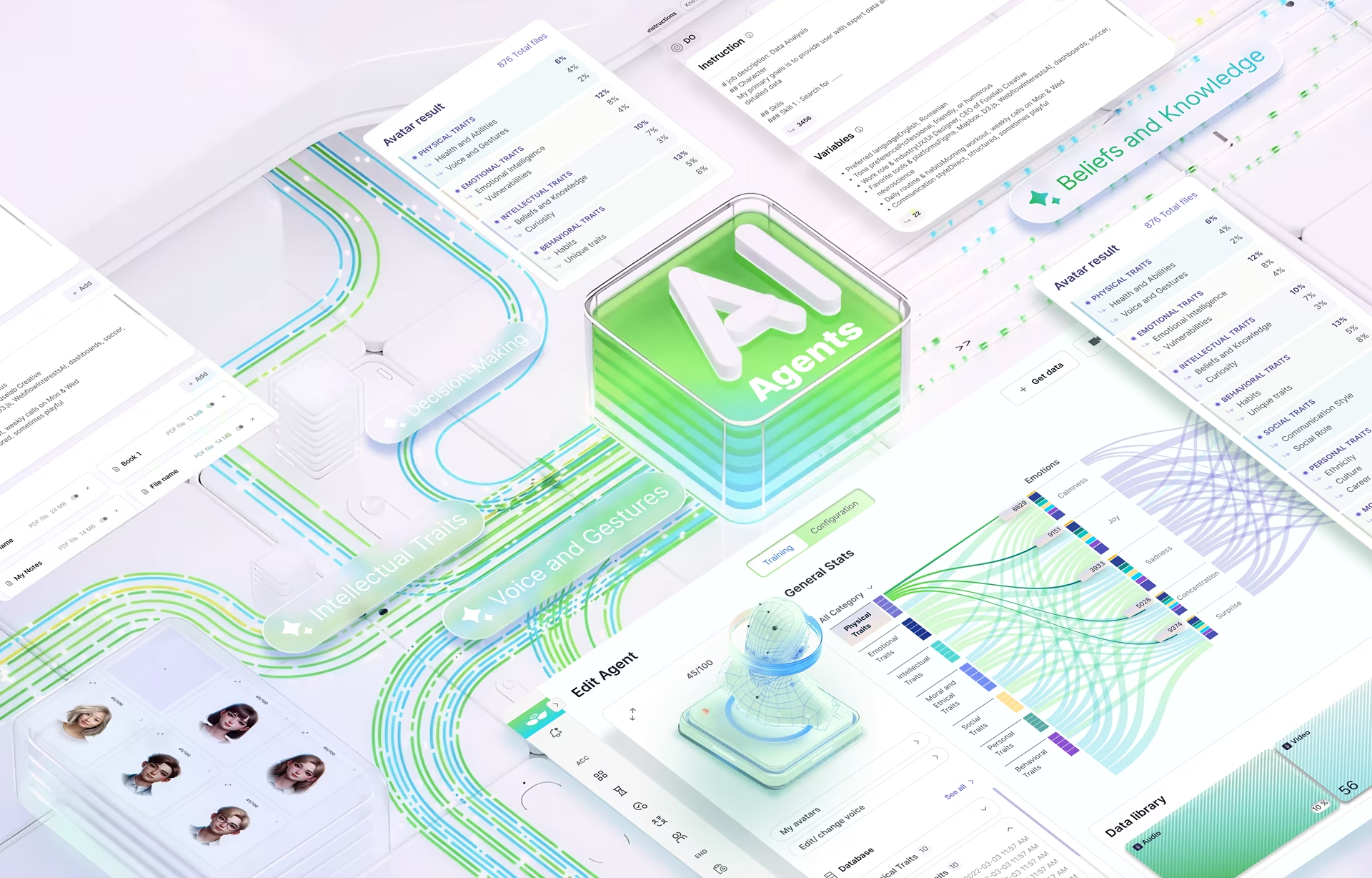

A chatbot needs a conversation thread, input controls, and response formatting. An assistant adds confirmation dialogs. An agent interface requires all of that plus a plan visibility layer, real-time progress tracking across multi-step workflows, intervention points for mid-process redirection, and an audit trail recording what the agent did and why. That is a fundamentally different information architecture.

Consider the practical difference. A chatbot responding to “find me a flight to Chicago” returns a list of options in a single message. An agent receiving the same request searches flights, checks calendar availability, compares loyalty programs, and holds a seat. Four autonomous actions happen between the user’s message and the next response. A chat template has no pattern for making those steps visible.

We have seen teams build their first agent interface on a chatbot template and add features incrementally. The approach fails because the template assumes a request-response cadence that agents violate constantly. When the agent takes four actions between user messages, the chat thread becomes a confusing log of unsolicited activity rather than a readable record of collaborative work.

The architectural fix is separating the conversation from the activity stream. The conversation thread is where the user sets goals and provides input. A dedicated activity panel shows the agent’s autonomous work in progress. Combining both into one stream produces an interface that fails as both a conversation and an activity tracker. That separation is the decision most teams miss on their first agent project.
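
One way to picture the separation is a router that sends each event to exactly one of two panels. The sketch below is an assumption about how such a split could be modeled; the event shapes and the `routeEvents` function are hypothetical and not drawn from a specific framework.

```typescript
// Hypothetical event shapes for illustration only.
type AgentEvent =
  | { kind: "user_message"; text: string }
  | { kind: "agent_reply"; text: string }
  | { kind: "agent_action"; description: string; status: "running" | "done" | "failed" };

interface Panels {
  conversation: string[]; // goals and input from the user, replies from the agent
  activity: string[];     // autonomous work the agent performs between messages
}

// Sends dialogue to the conversation thread and autonomous actions
// to the activity panel, so neither stream pollutes the other.
function routeEvents(events: AgentEvent[]): Panels {
  const panels: Panels = { conversation: [], activity: [] };
  for (const ev of events) {
    switch (ev.kind) {
      case "user_message":
        panels.conversation.push(`You: ${ev.text}`);
        break;
      case "agent_reply":
        panels.conversation.push(`Agent: ${ev.text}`);
        break;
      case "agent_action":
        panels.activity.push(`[${ev.status}] ${ev.description}`);
        break;
    }
  }
  return panels;
}
```

With the flight example from earlier, the four autonomous actions would land in `activity` while the conversation thread holds only the user's request and the agent's eventual reply.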

Our Stardog Voicebox work, a conversational AI interface for enterprise knowledge graph queries, sits in the gray zone between chatbot and agent. Voicebox executes multi-step retrieval and synthesis on the user’s behalf, which pushes the design past chat patterns even though the surface looks conversational. The activity panel separation we described above came directly from that project, before it generalized into our agent design approach.

For teams whose project is actually a chatbot rather than an agent, our chatbot UI examples and best practices guide covers production patterns and common UX mistakes specific to that surface.

Where AI agent UI projects fail

Agent interface projects fail in predictable patterns, and the most common failure has nothing to do with visual design. It is shipping a capable agent with a frontend that gives users no visibility into what the system is doing. The interface treats the AI as a black box. Users respond by withholding autonomy, which defeats the purpose of building an agent.

The second failure pattern is designing around a demo workflow. In demos, the agent gets requests right immediately. In production, users phrase requests ambiguously, change direction mid-process, ask for things the agent cannot do, and need to understand partial results. Every one of those scenarios requires a designed response. Demo-optimized interfaces have none of them, and the gap shows within the first week of real use.

Gartner’s October 2025 strategic predictions forecast that “death by AI” legal claims will exceed 2,000 by end of 2026 due to insufficient risk guardrails. The interface is part of that risk surface. An agent taking consequential actions without user visibility creates legal exposure for the deploying organization. The interface is the governance layer that makes autonomous action auditable and defensible.

Our work with ClyHealth on a clinical AI interface showed this directly. Clinicians refused to use an AI recommendation system that surfaced suggestions without explaining its reasoning. The redesign showed one recommendation at a time with a supporting evidence panel on the right and a single-click override at the bottom. The AI accuracy did not change. The interface visibility did. That was the entire difference between rejection and adoption.

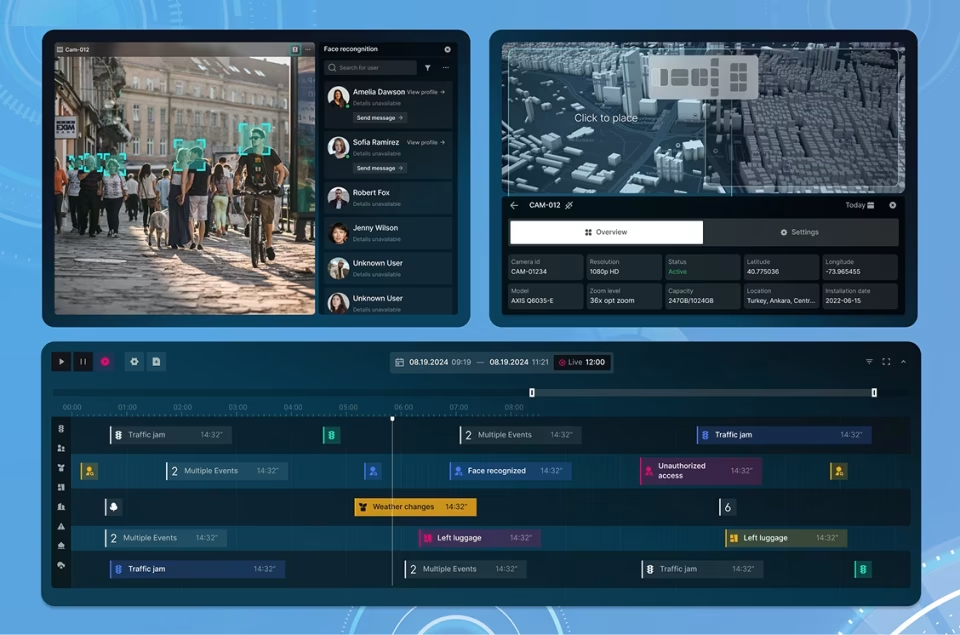

Building for a single agent when the roadmap calls for multi-agent coordination is the third failure. Gartner predicts one-third of agentic implementations will combine agents with different skills by 2027. NIH research projects we have worked on taught us this early: when multiple analysis processes ran simultaneously, researchers needed to see which process produced which result. Designing for concurrent workflows from the start is cheaper than retrofitting later.

A fourth pattern shows up in security and operations interfaces: shipping an agent that flags issues without showing the supporting signals. Our CyberDefend work was an enterprise threat detection interface where the first design surfaced flags as a feed that analysts ignored. The redesign showed each flag with the signals that triggered it and a one-click path to escalate or dismiss. Trust rose because the reasoning became visible at the same speed as the alert.

Design patterns that work in production

Three design patterns consistently survive user testing and deployment in enterprise AI agent interfaces: plan-and-execute, confidence signaling, and progressive delegation. Each solves a specific problem that unstructured agent interfaces fail to address. Each has a distinct implementation path we have tested across projects.

Plan-and-execute shows the user a proposed action plan before the agent begins working. In our implementation, this takes the form of a vertical step list on the left side of the screen. Each step shows a one-line description of the intended action. The user approves the full plan, modifies individual steps, or removes steps before execution begins.

During execution, completed steps show a checkmark while the active step pulses with a progress indicator. Any upcoming step can still be edited or removed even after execution has started. This pattern gives the user a mental model of the agent’s full intent before actions begin. Without that preview, every autonomous action feels like a surprise the user did not consent to.
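
The step list behavior described above can be modeled as a small state machine. The following is a sketch under stated assumptions rather than our production implementation: the `PlanStep` shape and the `editStep`, `removeStep`, and `advance` functions are hypothetical names, and the rule that only not-yet-started steps are editable is our reading of the pattern.

```typescript
// Hypothetical model of a plan-and-execute step list.
type StepStatus = "pending" | "active" | "done";

interface PlanStep {
  id: number;
  description: string;
  status: StepStatus;
}

// Edits are applied only to steps that have not started yet.
function editStep(plan: PlanStep[], id: number, description: string): PlanStep[] {
  return plan.map((s): PlanStep =>
    s.id === id && s.status === "pending" ? { ...s, description } : s
  );
}

// Removal is likewise limited to pending steps; completed work is untouched.
function removeStep(plan: PlanStep[], id: number): PlanStep[] {
  return plan.filter((s) => !(s.id === id && s.status === "pending"));
}

// Marks the active step (if any) as done and promotes the first pending step.
// Calling it on an all-pending plan starts execution.
function advance(plan: PlanStep[]): PlanStep[] {
  let promoted = false;
  return plan.map((s): PlanStep => {
    if (s.status === "active") return { ...s, status: "done" };
    if (s.status === "pending" && !promoted) {
      promoted = true;
      return { ...s, status: "active" };
    }
    return s;
  });
}
```

Each function returns a new array instead of mutating the plan, which keeps the approved plan available for comparison if the interface later needs to show what the user changed.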

Confidence signaling attaches a visible indicator to every agent output. High-confidence outputs display a solid indicator and proceed without interruption. Low-confidence outputs display a hatched indicator and pause for user verification. We tested numeric percentages, color scales, and binary high-low indicators. The binary version outperformed the others because users decided faster with “I am confident” versus “I am not sure” than with “I am 73% confident.”
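
A binary indicator of this kind could be derived as follows. The sketch assumes the agent exposes a numeric score between 0 and 1 and that 0.8 is a sensible cutoff. Both are placeholders we chose for illustration, and the `signal` function is a hypothetical name.

```typescript
// Hypothetical mapping from a raw score to the binary indicator.
type ConfidenceLevel = "high" | "low";

interface SignaledOutput {
  text: string;
  level: ConfidenceLevel;
  indicator: "solid" | "hatched";
  pauseForVerification: boolean;
}

// The 0.8 default threshold is an assumption, not a tested value.
function signal(text: string, score: number, threshold: number = 0.8): SignaledOutput {
  const high = score >= threshold;
  return {
    text,
    level: high ? "high" : "low",
    indicator: high ? "solid" : "hatched",
    // Low-confidence outputs stop and wait for the user.
    pauseForVerification: !high,
  };
}
```

The raw score never reaches the user in this sketch. Only the two-state result does, which matches the finding that users decide faster with a binary signal than with a percentage.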

Progressive delegation starts the agent with limited autonomy and expands it as the user builds trust. In our Grid AI analytics interface, the agent initially required manual approval for every pipeline reconfiguration it suggested. After the operations team approved 40 consecutive suggestions, we introduced auto-execution for routine changes with a notification instead of an approval gate. Adoption was significantly higher than in versions where full autonomy was available from day one.
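
The approval-streak logic can be sketched in a few lines. The 40-approval threshold comes from the example above. Everything else is an assumption made for illustration, in particular the rule that a single rejection resets the streak and revokes auto-execution, and the names `DelegationState`, `recordDecision`, and `requiresApproval`.

```typescript
// Hypothetical state for progressive delegation.
interface DelegationState {
  consecutiveApprovals: number;
  autoExecuteRoutine: boolean;
}

// Threshold taken from the Grid AI example in the text.
const APPROVALS_TO_UNLOCK = 40;

// Assumption: a rejection resets the streak and turns auto-execution off.
function recordDecision(state: DelegationState, approved: boolean): DelegationState {
  const streak = approved ? state.consecutiveApprovals + 1 : 0;
  return {
    consecutiveApprovals: streak,
    autoExecuteRoutine:
      approved && (state.autoExecuteRoutine || streak >= APPROVALS_TO_UNLOCK),
  };
}

// Non-routine changes always keep the approval gate in this sketch.
function requiresApproval(state: DelegationState, isRoutine: boolean): boolean {
  return !(state.autoExecuteRoutine && isRoutine);
}
```

Whether a rejection should revoke autonomy entirely or only pause it is a product decision. The sketch takes the stricter option so the fallback behavior is explicit.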

NNGroup’s State of UX 2026 report identifies trust as a major design challenge for AI experiences, noting that users burned by premature AI features resist adopting new ones. Progressive delegation directly addresses this by letting the user’s own approval history set the pace of autonomy expansion. The system earns permission through demonstrated reliability rather than demanding it at launch.

Agent UX patterns: planning, tool use, memory, and recovery

Agent UX patterns are the interface decisions that turn raw agent architecture into something a user can supervise. Five patterns apply to every enterprise agent regardless of model or framework: planning visibility, tool-use disclosure, memory surfacing, multi-step workflow tracking, and recovery routing. Each one is a distinct interface decision the design team owns, and each one fails in a specific way when the agent is treated as a backend detail.

Planning visibility means the user sees the agent’s intended action sequence before execution begins. This is the interface manifestation of what engineers call plan-and-execute. The design decision is where the plan lives on screen, which steps are editable, and whether the user approves the full sequence or each step individually. Interfaces that skip plan preview force the user to reconstruct the agent’s logic from its outputs, which is slower and more error-prone than showing intent up front.

Tool-use disclosure surfaces which external system the agent called, what it returned, and whether the result can be inspected. Hiding tool calls produces outputs that feel authoritative but cannot be verified. We saw this directly in NIH research workflows where analysts needed to know which database returned which result. Showing tool names and return payloads at the decision moment, not in a collapsed log, is the difference between auditable output and a black box.
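
As a rough illustration, disclosure at the decision moment means each result carries its tool calls with it instead of pointing to a separate log. The record shapes and the `discloseInline` function below are hypothetical and make no claim about how any particular agent framework reports tool calls.

```typescript
// Hypothetical record of one external call made by the agent.
interface ToolCallRecord {
  tool: string;     // name of the external system that was called
  query: string;    // what the agent asked it
  payload: unknown; // raw return value, kept so the user can inspect it
}

interface DisclosedResult {
  claim: string;
  sources: ToolCallRecord[];
}

// Renders the claim together with the tools that produced it,
// and says so explicitly when no tool call backs the claim.
function discloseInline(result: DisclosedResult): string {
  const sources = result.sources.map((s) => `${s.tool} (${s.query})`).join(", ");
  return sources.length > 0
    ? `${result.claim} [via ${sources}]`
    : `${result.claim} [no tool call recorded]`;
}
```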

Memory surfacing is how persistent context appears to the user without becoming noise. The design question is when the agent should remember, when it should ask, and how the user corrects a remembered fact that is now wrong. Memory surfacing connects directly to generative UI work, where interface elements are generated from context the system holds about the user. Treating memory as invisible infrastructure is how products drift into feeling invasive without the team understanding why.

Multi-step workflow tracking needs a timeline view that survives interruption. A chat thread cannot serve as a workflow tracker because conversational cadence and workflow cadence run on different clocks. The fix is the same activity-panel separation introduced earlier in this guide, applied to the specific problem of long-horizon goals: show what was done, what is running, what is blocked, and what is next, in a single glance, without forcing the user to scroll through conversation history.
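
The single-glance view can be thought of as a grouping of work items by state. The sketch below assumes the four states named above are the only ones the interface needs. `WorkItem` and `summarize` are hypothetical names.

```typescript
// The four buckets named in the text: done, running, blocked, next.
type WorkState = "done" | "running" | "blocked" | "next";

interface WorkItem {
  label: string;
  state: WorkState;
}

// Groups items by state so the panel can render all four buckets at once,
// independent of where they appear in the conversation history.
function summarize(items: WorkItem[]): Record<WorkState, string[]> {
  const summary: Record<WorkState, string[]> = {
    done: [],
    running: [],
    blocked: [],
    next: [],
  };
  for (const item of items) {
    summary[item.state].push(item.label);
  }
  return summary;
}
```

Because the summary is computed from the work items and not from the chat thread, it survives interruption: reopening the session rebuilds the same view without replaying the conversation.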

Recovery routing sends the user to the right fix path based on what actually failed. A misunderstood request needs the original prompt surface. A tool-call failure needs the tool boundary. A partial success needs a different recovery path than total failure. Generic retry buttons treat all errors identically, which is how agents lose users on the first real edge case. When recovery routing requires architectural changes on the agent side, design and AI agent development need to move together from the first discovery meeting.
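
Recovery routing amounts to a mapping from failure type to fix path. The sketch below is illustrative only: the failure kinds follow the cases named above, while the surface names and the `routeRecovery` function are hypothetical.

```typescript
// Hypothetical failure kinds, following the cases named in the text.
type Failure =
  | { kind: "misunderstood_request" }
  | { kind: "tool_call_failed"; tool: string }
  | { kind: "partial_success"; completed: string[]; remaining: string[] }
  | { kind: "total_failure" };

// Hypothetical names for the screens or panels a user could be sent to.
type RecoverySurface =
  | "prompt_editor"    // reopen the original request for rewording
  | "tool_boundary"    // show the failed tool call and its error
  | "resume_remaining" // keep completed work, retry only what is left
  | "restart_plan";    // nothing usable completed, start over

// Each failure kind gets its own fix path; no shared generic retry.
function routeRecovery(failure: Failure): RecoverySurface {
  switch (failure.kind) {
    case "misunderstood_request":
      return "prompt_editor";
    case "tool_call_failed":
      return "tool_boundary";
    case "partial_success":
      return "resume_remaining";
    case "total_failure":
      return "restart_plan";
  }
}
```

Modeling failures as a discriminated union means the compiler flags any new failure kind that lacks a recovery path, which is one way to keep design and agent development aligned as the agent gains capabilities.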

What to get right when hiring for AI agent interface work

The most common hiring mistake in AI agent interface projects is evaluating agencies based on chatbot portfolios. A chatbot case study demonstrates conversational design. It does not demonstrate plan visibility, step-level override controls, multi-agent workflow tracking, or the error recovery architecture that agent interfaces require. These are different design problems requiring different skills and different testing methodologies.

Skipping UX research that specifically tests failure scenarios is the second mistake. Traditional usability testing checks whether users can complete tasks. Agent interface testing must also check what happens when the agent misunderstands a request, takes an unexpected action, or fails mid-workflow. Every one of those scenarios occurs in production. An agency that only tests the happy path ships an interface that breaks on the first real edge case.

Treating the interface as a frontend skin over an API rather than the governance layer is the third mistake. In regulated industries, the agent interface is the audit trail. Every agent action needs to be traceable, explainable, and overridable through the interface itself. An agency that does not ask about compliance requirements in the first discovery meeting does not understand what enterprise agent deployments demand.

Ask any agency you evaluate to show specific examples of transparency layers, confidence indicators, and override controls in shipped products with named clients. Ask what happened when their agent interface launched and users encountered the first unexpected action. The answer to that question reveals more about their AI design capability than any portfolio walkthrough.

Conclusion

AI agent interfaces are not chatbot skins or dashboard features with an AI label attached. Agent UX is a distinct design discipline requiring specific patterns for transparency, control, status communication, and recovery. The teams shipping successful agent products in 2026 treat the interface as the accountability layer between user intent and autonomous action, not an afterthought applied after the model works.

Frequently asked questions

What is UI design for AI agents?

UI design for AI agents is the practice of building interfaces for autonomous software systems that take actions on behalf of users. Unlike traditional UI, agent interfaces must communicate what the system is doing, explain its reasoning, provide override controls at every step, and recover gracefully from errors. The design challenge is maintaining user trust while the system operates independently.

How does AI agent UI design differ from chatbot design?

AI agent interfaces require transparency into multi-step autonomous workflows, real-time progress tracking, and intervention points where users can redirect mid-process. Chatbot interfaces handle request-response conversations with simpler patterns. An agent takes consequential actions without being asked, which demands visibility and control that chatbot templates do not provide.

What are the core principles of agent UX?

Agent UX rests on four principles: transparency into reasoning at every decision point, user control to override or redirect at any stage, proactive status communication during processing, and structured error recovery that explains failures and suggests next steps.

Why do most AI agent interfaces fail?

Most AI agent interfaces fail because teams treat the agent as a black box, showing inputs and outputs without revealing reasoning or process. Users cannot tell when the agent is confident versus guessing, cannot intervene mid-process, and receive generic error messages when things go wrong. Distrust builds faster than accuracy can overcome it.

How much does AI agent interface design cost?

AI agent interface design with a US-based specialist agency typically costs $50,000 to $200,000 depending on the number of agent workflows, transparency layer complexity, and multi-agent coordination requirements. Hourly rates range from $100 to $300. Projects that skip UX research at the front end consistently cost more in post-launch rework.

How long does an AI agent UI design project take?

An AI agent interface design project typically takes 12 to 20 weeks from discovery through handoff, longer than conventional UI projects because agent-specific patterns require dedicated design and testing cycles. Projects including error scenario testing and multi-agent workflow design add 4 to 6 weeks but prevent the most common post-launch failures.

What should I look for in an AI agent UI design portfolio?

A strong AI agent design portfolio shows shipped interfaces where autonomous systems take real actions. Look for transparency layers showing agent reasoning, override controls for multi-step workflows, confidence indicators on outputs, and error recovery design, ideally demonstrated in work for named enterprise clients in regulated industries.