AI app builders vs design agency in 2026

AI app builders generate full-stack applications from text prompts, while custom AI UX design agencies produce the interfaces that real users depend on every day for operational work. The 2026 procurement question is not whether to use AI tools but where to use them in the build.

What AI app builders actually do in 2026

AI app builders are a fast-growing category of tools that generate working software from text prompts, but they include three distinct kinds of products that solve different problems at different points in the build process. UI-focused generators like Vercel’s v0 produce frontend components. AI-assisted coding environments like Anysphere’s Cursor accelerate development inside an existing codebase. Full-stack application generators like Lovable, StackBlitz Bolt, and Replit Agent generate database, backend, and frontend together from a single prompt. They get grouped together because they all generate code, but they are not the same tool for the same job.

The category has matured quickly, and within the broader role of generative AI in design work, app builders occupy a niche distinct from image or content generators. v0 outputs production-quality React and Next.js code and hands off cleanly to developers already inside the Vercel ecosystem; it is not designed to ship a whole application. Cursor is an AI-assisted IDE that helps developers write code in real time. It is not a tool for generating an application from scratch.

Lovable, Bolt, and Replit Agent are the actual full-stack app builders: each can generate database, backend, and frontend together from a single prompt. Lovable integrates Supabase, handles authentication, and syncs to GitHub with two-way code access. Bolt positions itself as a prompt-to-full-stack builder that runs in the browser, with strong initial speed. Replit Agent runs inside a full browser-based development environment with built-in hosting, more than 100 integrations, and an autonomous generation model. The three are converging on the same product from different anchors: Lovable from a Supabase-first stack, Bolt from raw browser speed, Replit Agent from its existing developer environment. For procurement, the relevant difference is which one your team can hand off cleanly when the work moves to custom design.

What the category shares is the absence of any domain knowledge. None of these tools knows your users, your data, your regulatory environment, your brand, or the specific logic that differentiates your product from a competitor’s. That gap is where the procurement decision actually lives. Whether the app builder output is the right starting point for your product depends on how much of that gap your team can absorb.

Where AI app builders genuinely excel

AI app builders are exceptionally good at rapid prototyping, internal tooling, quick SaaS scaffolding, and founder-led MVPs where speed matters more than long-term product durability. For those four categories, choosing an app builder is the technically, financially, and operationally correct decision.

The strongest use case is rapid prototyping: producing a clickable, credible-looking application from a text prompt. Something that would have taken a designer two to three days to mock up can come out of Lovable or Bolt in under an hour. For founders testing an idea, that speed changes the math entirely. It gives them a prototype they can validate with real users with very little investment. A team testing a workflow hypothesis can generate five competing interface directions in one afternoon and discard four immediately. Once they have a direction, they can enter agency conversations with a concrete brief rather than a vague description. The cost difference at this stage is real: a month of app builder access on a $25 pro plan costs less than a single hour of senior design time, which runs $100 to $150 at a US-based agency.

Internal tools and back-office interfaces are the second category in which app builders consistently perform well. Admin dashboards, internal operations panels, and simple workflow tools come out of these tools in acceptable shape when the user is a colleague, brand requirements are minimal, and a functional output is enough. The cost and timeline savings over an agency engagement are substantial, and the quality threshold is lower because the audience is internal.

Standard SaaS scaffolds are the third category that app builders handle well. Here, AI builders rely heavily on familiar interaction patterns: settings pages, onboarding flows, and account management screens that already exist in training data. The output is rarely out of the ordinary, but it doesn’t need to be. For products competing on features and pricing rather than design differentiation, app builder output is more than adequate, and well beyond what these tools could produce even a year ago.

Founder-led MVPs in non-regulated categories make the final case for tools-only work. Using Lovable or Replit Agent, a non-technical founder can validate an idea before a seed round by shipping something a real user can touch, without a development team and the attendant expense. The product will not scale to an enterprise level or pass a design system audit, but it is enough to test a concept as realistically as possible. If it doesn’t work, the founder learns that cheaply. If it does, the prototype has earned the investment in production-quality design.

App builders earned their adoption. They ship real work at the right point in the build. The procurement question is at which point, not whether to use them.

Where AI app builders break down

AI app builders break down when a product stops being disposable and starts being something users return to for real work. Five categories mark this threshold predictably across teams and industries: products users depend on operationally; enterprise data and dashboard systems; multi-screen workflows with persistent state and approvals; products requiring durable brand design systems across a portfolio; and products operating in regulated or compliance-driven environments. App builder output looks correct in a demo. A demo is not enough for any of these five.

The most common case is a prototype that quietly becomes production software that users return to daily. A team generates a quick internal proof of concept. Leadership loves the demo. Nobody wants to slow the momentum by rebuilding it properly, so the prototype gets expanded feature by feature until real users depend on it. Six months later, the product feels fragile and inconsistent. Nothing is technically broken, but users are quietly working around the product instead of through it: opening side spreadsheets to handle edge cases, building manual workflows the prompt never anticipated, asking engineering for one-off fixes that should be product features. The other version of this pattern is an internal tool that grew an external user base. The brand and usability standards required for paying customers never caught up with the tool’s app-builder origins, and adoption stalls without anyone explaining why.

The broader AI adoption pattern shows the same dynamic at industry scale. Stack Overflow’s 2025 developer survey found that AI tool adoption among developers climbed to 84% in one year, while trust in AI output dropped 11 percentage points to 29%. The 2025 GenAI Divide study from MIT’s NANDA initiative found that 95% of enterprise AI pilots stall and deliver no measurable P&L impact. It attributes the failure largely to flawed enterprise integration: generic tools that don’t map to enterprise workflows get promoted to production.

This is exactly what happens when app builder output gets promoted without a redesign. App builders are excellent at generating convincing first-use experiences because first use is heavily represented in training data. Daily use is not. Recovery states break when users hit edge cases the prompt never saw, permission rules get tangled as roles multiply, AI confidence display becomes inconsistent across screens, and notification logic that worked for one user starts producing fatigue for many. All of it adds up to interaction debt that grows with each release.

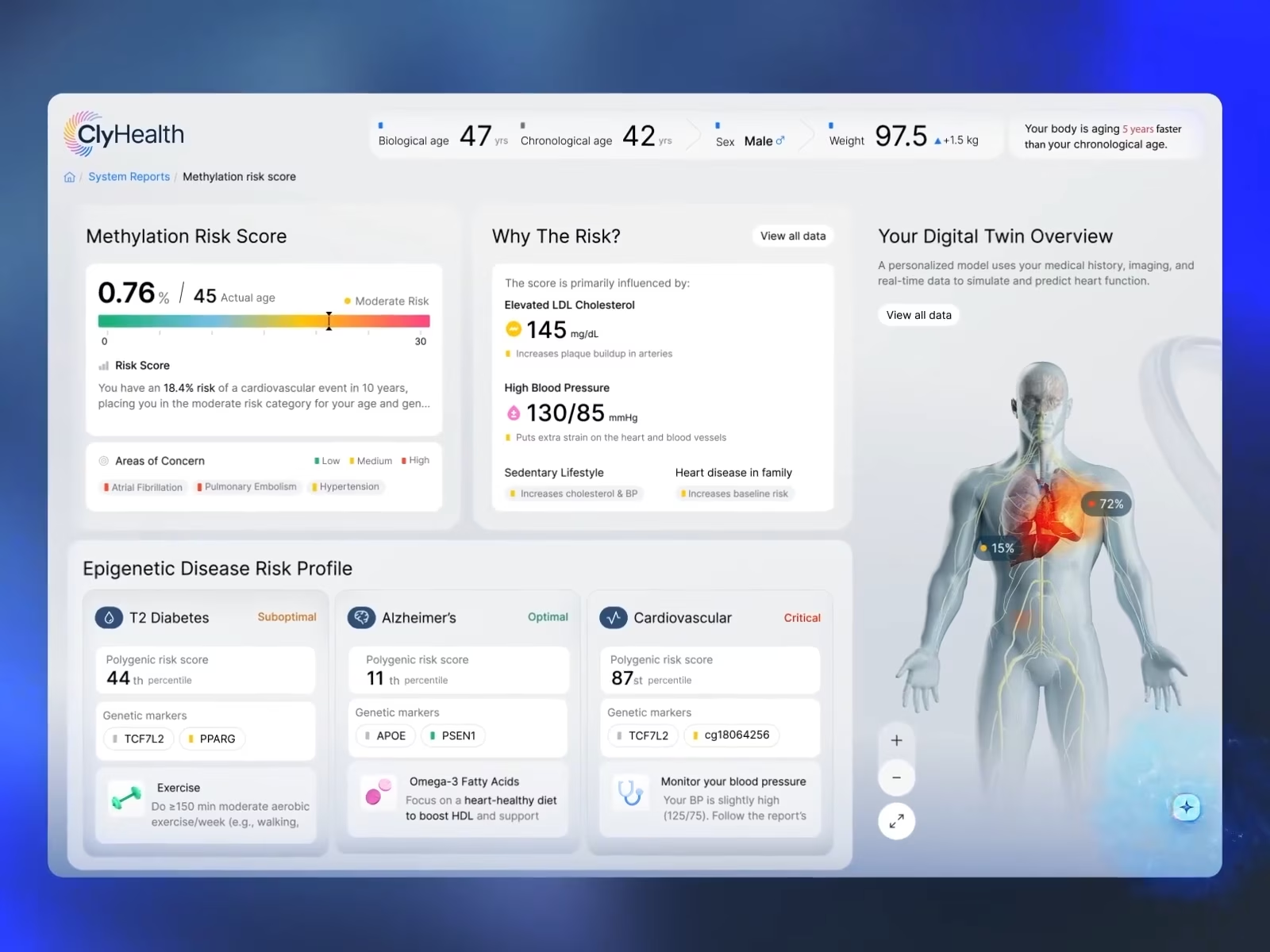

Stardog Voicebox illustrates the first category we listed above. We didn’t design the interface as decoration; it is an accountability layer that gives users confidence when they query enterprise knowledge graphs and make decisions based on what the AI returns. An app builder with no specific training signal for this kind of query display returns ambiguous results that the user has no basis to trust.

Enterprise data products are another place where the illusion breaks quickly. A generated dashboard usually stops at charts, filters, and cards because those patterns already exist everywhere online. What app builders cannot generate is the connective tissue between a data source, a visualization, and a user who needs to make a decision.

In our work on Grid AI’s ML operations dashboards, generating design components was the easy part. What made the platform work was designing workflows that solved role-based visibility, cross-system dependencies, drill-down logic, alert prioritization, state persistence across sessions, conflicting data sources, and escalation paths. App builders can generate the shell of that interface. They cannot generate the underlying operational logic because it is specific to the business and not present in commonly used patterns.

Multi-screen workflows are the next place where app builder output exposes its limits. These tools generate one screen well. A real product has hundreds of screens with dependencies and connections specific to your business logic, role permissions, approval flow, and what happens when a user closes a tab and returns tomorrow. A healthcare reviewer might pause a workflow midway through a decision; a procurement officer might send an approval sequence to another department; an analyst might return to an investigation three days later, expecting continuity of context. These workflows persist across days, departments, permissions, and approval chains, and none of that follows a pattern an app builder can generate.

Brand and design system requirements across a portfolio create a compounding problem that most teams discover too late. A tool can generate a button. It cannot generate your button: tokenized, tested for accessibility, and maintained by your team across multiple products over multiple releases. The first product may look fine because nothing exists to compare it against. As the product scales, the lack of a cohesive system shows. Components change across screens, accessibility behavior breaks, and every subsequent project inherits the first project’s undocumented decisions as technical debt. The fix is a rebuild.

Products shipping in regulated or compliance environments are the most demanding case, though they involve a narrower set of buyers than the categories above. Healthcare, fintech, and federal systems require alignment with frameworks such as HIPAA, FDA regulations, FFIEC guidance, and Section 508. These frameworks require interface-level evidence of compliance: audit trails, override paths, decision documentation, and accessibility patterns that automated builders cannot cover.

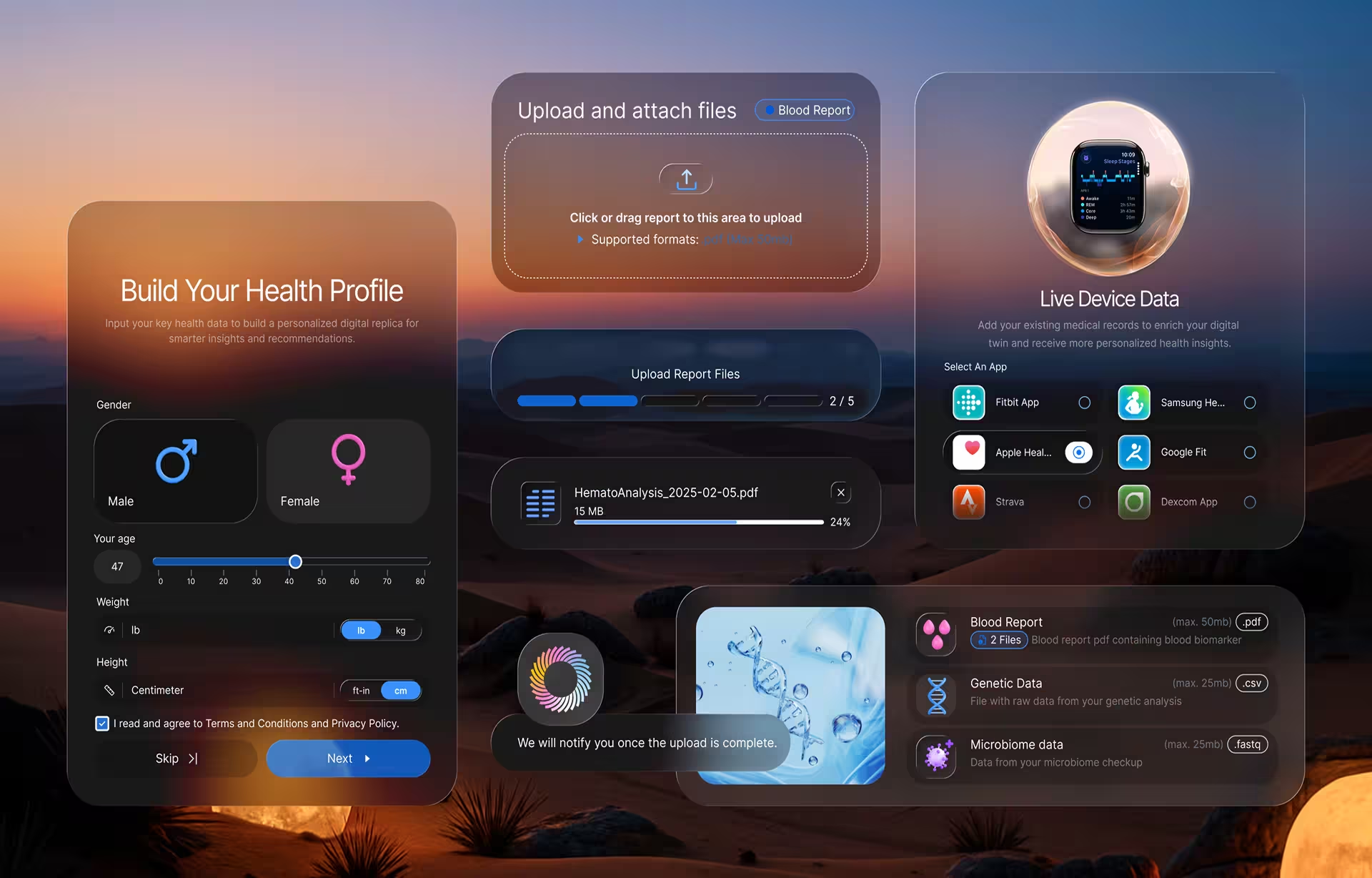

The ClyHealth engagement is a direct illustration. During the design phase, we mapped the HIPAA-compliant data flows, session management rules, and permission structures before a single visual design decision was made, because all of those had compliance dimensions that had to be resolved before layout was even a question. The deeper breakdown of what this category requires belongs inside the dedicated conversation about AI design for regulated industries, not inside a procurement decision article.

The pattern across all five categories is the same. App builders ship what is already in the training data well. Everything outside the training data is design work.

When a custom design agency becomes the right call

A custom design agency is the right call the moment an AI product moves from experimentation to operational dependency. The right window to engage is after the prototype proves the concept and before the product becomes operationally live. Engage earlier, and the team refines assumptions that may still change; engage later, and the work has already become a rebuild rather than a build.

What a serious custom AI interface design engagement adds is not prettier screens. It is operational judgment, and that distinction matters. A strong agency engagement starts with behavioral research before any design begins: how clinicians actually review edge-case diagnoses under time pressure, how financial reviewers handle ambiguity inside an approval chain, how compliance officers reconstruct the audit trail of an automated decision when regulators ask. That research feeds the rest of the engagement: validation testing of probabilistic outputs against real users in role, design systems that compound across products instead of getting rebuilt at every project, and direct accountability for what compliance review will see in the interface.

The deliverable that matters most for AI products specifically, and that no app builder produces, is judgment about where the AI should be visible to the user and where it should be invisible. Surfacing model reasoning helps in some products and hurts in others. A clinician deciding on a radiology finding needs to see the model’s confidence and the reasoning behind a flag; a customer support agent answering a routine question does not, and surfacing reasoning there only adds cognitive load and decision friction. App builders cannot make this call because the right answer depends on the specific user role, the operational stakes, and the consequences of a user trusting an output that turns out to be wrong. That is the work that defines whether an AI product earns daily use or quietly stalls.

The cost penalty for getting the timing wrong is real. Most teams wait for the obvious failures before engaging an agency: adoption stalls, compliance review surfaces a gap, six months of support tickets reveal a pattern. By then, users have been quietly working around the product for months. Workarounds are the earlier signal, and the actionable one. Once users have adapted, the redesign has become a rebuild, and a rebuild costs more than the original engagement would have, often by a multiple, because the foundation has to change and everything built on top of it changes with it. Most teams that get this wrong get it wrong on timing, not on the choice between agency options.

Compliance review never inspects the model. Compliance review inspects the interface. That is always design work.

The decision framework: which approach for which work

Use AI app builders for disposable design: prototypes, internal tools, simple SaaS scaffolds, and founder-led MVPs in non-regulated categories. Use a custom design agency once the product crosses into serious territory, where users depend on it operationally. For most 2026 product teams, the honest answer is to use both, with a clear boundary between them.

The decision is not a hierarchy. App builders are not the budget option, and agencies are not the premium one. They are tools for different work. For early-stage exploration, app builders are often the best decision available. They compress weeks of product thinking and design into hours, helping teams validate a concept before spending money on expensive design and development. All of those advantages depend on one assumption: the work is still disposable. Once the product crosses into a serious category, continuing with app builder output stops being a cost saving and becomes a deferred expense with interest.

The practical sequence matters as much as the framework. Start with app builders. Generate the prototype, test the concept, and run early user interviews against something tangible. When the concept validates and the team commits to shipping for real, that is the handoff moment. Bring the agency in then, not earlier, not later. Teams that skip the prototype overpay for assumptions that change in the first user interview. Teams that skip the agency end up rebuilding.

App builders accelerate the work that the model already knows. Agencies do the work that the model doesn’t.

Conclusion

The procurement question for AI product teams in 2026 is not agency or tools. It is whether the work is a throwaway prototype or a serious product, and which approach should ship which. App builders ship the prototype well. The agency ships what holds up after launch: the interfaces real users depend on, and the design system that survives a roadmap.

Teams whose AI product is moving from prototype to a serious product can book a scoping call to map out which features should ship from the app builders and which need custom design before launch.

Frequently asked questions

What are AI app builders, and how do they differ from older AI design tools?

AI app builders generate working application code rather than isolated visual assets or content. Between them, tools like Vercel v0, Anysphere Cursor, Lovable, StackBlitz Bolt, and Replit Agent can generate interfaces, workflows, backend logic, databases, and deployment scaffolding from prompts, although only the full-stack builders cover all of these in one tool. Older AI design categories focused more on image and copy generation, or on lightweight design assistance, rather than on full-stack application assembly.

Can AI app builders replace a UX agency in 2026?

AI app builders can replace agency work for prototypes, internal tools, simple SaaS scaffolds, and founder-led MVPs where speed matters more than long-term durability. They struggle once a product becomes operational infrastructure with real users, enterprise workflows, persistent state, compliance requirements, or systems that need to scale across multiple releases. Most mature product teams now use both approaches at different stages of the product lifecycle.

How does AI app builder output compare to custom design in terms of accessibility and Section 508 compliance?

AI app builders can generate interfaces that meet basic accessibility conventions for standard components, but they fall short on AI-specific accessibility requirements, such as screen-reader handling for streaming text, accurate alt text for AI-generated content, dynamic output handling, and voice-first interfaces. Custom design agencies build accessibility into the interface architecture from the start, which matters in federal, healthcare, and enterprise environments where accessibility reviews are operational requirements rather than optional improvements.

How does the cost of AI app builders compare to a design agency engagement?

AI app builders range from $0 on free tiers to $200 per seat per month. A US-based specialist agency working on a serious AI product typically costs $25,000 to $150,000 per project, with hourly rates from $100 to $300. The meaningful comparison is not subscription cost versus project cost, but total cost: builder subscription plus rebuild work if the output cannot ship, versus agency cost for production-ready work the first time.
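The total-cost comparison above can be sketched as a small calculation. This is a rough illustration only: the $200 seat price and the $25,000 agency figure come from the ranges quoted in this answer, while the seat count, the project length, the $60,000 rebuild cost, and the 50% rebuild probability are hypothetical inputs chosen for the example, not data from any real engagement.

```python
def builder_total_cost(seats, price_per_seat_month, months,
                       rebuild_cost, rebuild_probability):
    """Subscription spend plus the expected cost of a later rebuild."""
    subscription = seats * price_per_seat_month * months
    expected_rebuild = rebuild_cost * rebuild_probability
    return subscription + expected_rebuild


def break_even_rebuild_probability(seats, price_per_seat_month, months,
                                   rebuild_cost, agency_cost):
    """Rebuild probability at which the builder path costs the same as the agency."""
    subscription = seats * price_per_seat_month * months
    return (agency_cost - subscription) / rebuild_cost


# Hypothetical scenario: 3 seats at $200/month for 6 months,
# a $60,000 rebuild, and a $25,000 agency engagement.
builder = builder_total_cost(3, 200, 6, 60_000, 0.5)
threshold = break_even_rebuild_probability(3, 200, 6, 60_000, 25_000)

print(f"Builder path, expected total: ${builder:,.0f}")
print(f"Break-even rebuild probability: {threshold:.0%}")
```

With these made-up inputs, the builder path only stays cheaper while the chance of a rebuild is below the break-even threshold. The point is the shape of the comparison, not the specific numbers: substitute your own seat count, rebuild estimate, and agency quote.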

When should a team hire a design agency for an AI product instead of using AI app builders?

The transition to a design agency usually occurs when a team realizes they are maintaining a product rather than exploring an idea. The trigger is rarely a strategic decision; it shows up as the moment when the cost of working around the AI-generated product exceeds the cost of doing the work properly. At that point, the prototype has crossed into operational territory, and continuing with builder output becomes a deferred expense rather than a saving.

Can v0, Cursor, or Lovable produce production-ready code for enterprise AI products?

v0, Cursor, and Lovable can generate working software quickly, but production-ready enterprise systems require more than functional output. Enterprise AI products often require workflow continuity, role-based logic, auditability, accessibility handling, design-system governance, and operational trust patterns that extend far beyond the generated frontend code. Most organizations treat builder output as a starting layer rather than the final production layer.